Imagine reading a sentence where all the words are scrambled. You likely wouldn't understand what it says. Yet computers don't naturally "know" the order of words the way we do. Many simple text-processing methods treat a sentence as an unordered collection of words unless order is encoded explicitly. This creates a huge problem for Artificial Intelligence trying to understand human language. If you tell a machine that "The dog bit the man" and "The man bit the dog," a basic system sees the exact same bag of words. The meaning flips completely based on order, but without a specific instruction on sequencing, the math stays identical.

The Permutation Problem

This issue is known as permutation invariance. When Google Brain researchers introduced the Transformer architecture back in 2017, they wanted to speed up processing. Older methods, like Recurrent Neural Networks (RNNs), read text one word at a time, naturally remembering the order because of their sequential nature. Transformers changed the game by processing every token in a sentence simultaneously to save time. While this made training much faster, it stripped away any inherent sense of position. Without help, the model treats the first word exactly the same as the last word. To fix this, engineers had to inject positional information directly into the math.
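The effect can be reproduced in a few lines. The following is a minimal sketch, assuming NumPy and randomly initialized toy embeddings and projection weights (the names `self_attention`, `vocab`, and `encode` are illustrative, not from any particular library). It runs single-head self-attention with no positional signal on the two sentences from the introduction and checks that each word receives the same output vector in both.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention with no positional signal."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability for softmax
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v

rng = np.random.default_rng(0)
d_model = 8

# Hypothetical toy embeddings and projection weights (random, not trained).
vocab = {w: rng.normal(size=d_model) for w in ["the", "dog", "bit", "man"]}
w_q, w_k, w_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))

def encode(sentence):
    """Look up one embedding per word, with no position information added."""
    return np.stack([vocab[w] for w in sentence.split()])

out_a = self_attention(encode("the dog bit the man"), w_q, w_k, w_v)
out_b = self_attention(encode("the man bit the dog"), w_q, w_k, w_v)

# "dog" is at index 1 in the first sentence and index 4 in the second.
same_dog = np.allclose(out_a[1], out_b[4])
# Any order-insensitive summary (here, mean pooling) is identical too.
same_pooled = np.allclose(out_a.mean(axis=0), out_b.mean(axis=0))
print(same_dog, same_pooled)  # prints: True True
```

Strictly speaking, the attention layer is permutation-equivariant (shuffling the input shuffles the output the same way), and it is the pooled summary that is fully invariant. Either way, nothing in the output reveals who bit whom.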

This injection is called Positional Encoding, which adds a position-dependent vector to each token's embedding to indicate its place in the sentence. Think of it like adding street addresses to mail parcels so a sorting machine knows which house gets which letter. Without these coordinates, the mail ends up in the wrong neighborhoods. Similarly, without positional data, a Large Language Model cannot distinguish between "The dog bit the man" and "The man bit the dog." The words are identical, but the meaning depends entirely on their arrangement.
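The article does not say which encoding scheme it has in mind. One well-known option is the sinusoidal encoding from the original Transformer paper, sketched below under the assumption that `d_model` is even. The function name and the toy embedding are illustrative.

```python
import numpy as np

def sinusoidal_encoding(seq_len, d_model, base=10000.0):
    """Return a (seq_len, d_model) matrix of sinusoidal position vectors.

    Assumes d_model is even: even columns hold sines, odd columns hold cosines.
    """
    positions = np.arange(seq_len)[:, None]        # shape (seq_len, 1)
    dims = np.arange(0, d_model, 2)[None, :]       # shape (1, d_model / 2)
    angles = positions / np.power(base, dims / d_model)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe

pe = sinusoidal_encoding(seq_len=5, d_model=8)

# Hypothetical embedding for the word "the", which appears twice in
# "the dog bit the man" (positions 0 and 3).
the_vec = np.ones(8)
first_the = the_vec + pe[0]
second_the = the_vec + pe[3]

# After adding the encoding, the two occurrences are no longer identical.
print(np.allclose(first_the, second_the))  # prints: False
```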

Absolute versus Relative Positions

When developers first started building these systems, they chose the most straightforward method: Absolute Position Embeddings (APEs). This assigns a fixed number to every spot in a sequence. The first word always gets vector #1, the second gets vector #2, and so on. It sounds logical, and it works reasonably well for shorter sentences. However, research has uncovered significant cracks in this foundation. A major study released by Meta AI Research in December 2022 exposed a critical weakness. They found that models trained with absolute positions become over-reliant on them.

If you shift the starting position of a sentence (say, pretending the text begins at position 100 instead of 0), the model's performance tanks. The study showed an average accuracy drop of 23.7% across various tasks when subjected to these position shifts. Essentially, the AI stops looking at the relationships between words and starts obsessing over their absolute location indices. This makes the model brittle and unable to generalize when the structure changes slightly.
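To make the position shift concrete, here is a minimal sketch of an absolute position lookup, assuming NumPy and a random table standing in for learned embeddings (`position_table` and `add_absolute_positions` are hypothetical names). It only shows that the same tokens produce different model inputs when the starting index moves. It does not reproduce the accuracy figures from the study.

```python
import numpy as np

rng = np.random.default_rng(0)
max_positions, d_model = 512, 8

# Stand-in for a learned table: one vector per absolute index.
position_table = rng.normal(size=(max_positions, d_model))

def add_absolute_positions(token_embeddings, start=0):
    """Add the position vector for indices start, start + 1, ... to each token."""
    seq_len = token_embeddings.shape[0]
    positions = np.arange(start, start + seq_len)
    return token_embeddings + position_table[positions]

tokens = rng.normal(size=(5, d_model))  # hypothetical token embeddings

at_zero = add_absolute_positions(tokens, start=0)
at_hundred = add_absolute_positions(tokens, start=100)

# Same words, same order, but every input vector has changed.
shift_changes_input = not np.allclose(at_zero, at_hundred)
print(shift_changes_input)  # prints: True
```

A model that has only ever seen sentences starting at index 0 has never been trained on the vectors at indices 100 to 104 in this role, which is one way to picture the brittleness described above.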

| Method | Description | Key Limitation | Reported Performance Impact |
|---|---|---|---|
| Absolute Position Embeddings | Assigns fixed vectors to each index | Poor extrapolation beyond training length | 23.7% accuracy drop on shifted inputs |
| Relative Position Encoding | Encodes distance between tokens | Higher computational overhead (2.3x) | 1.8 BLEU score improvement |
| Rotary Position Embedding (RoPE) | Applies rotation matrices to vectors | Fixed mathematical rotation regardless of context | 4.7% perplexity increase on long sequences |

The Rise of Rotary Position Embedding

To solve the brittleness of absolute embeddings, the industry shifted toward relative concepts, particularly Rotary Position Embedding, commonly known as RoPE. Introduced in 2021 in the RoFormer paper, RoPE became the default for widely used open models such as LLaMA. Instead of adding an absolute-position vector to each token, RoPE rotates the query and key vectors by an angle proportional to each token's position, so that the attention score between two tokens depends only on their relative distance.

This approach allows the attention mechanism to calculate relationships dynamically. If two words are four positions apart, their attention score is computed with a rotation determined by that offset of four, wherever the pair sits in the sequence. This helps the model handle context better than absolute methods. Benchmarks show that LLaMA-2, which uses RoPE, experiences only a 4.7% increase in perplexity when processing sequences twice as long as its training length. In contrast, models using absolute embeddings saw a massive 21.3% degradation under the same conditions. This superior length extrapolation made RoPE the industry standard by late 2025.
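The relative-distance property is easy to check numerically. Below is a minimal sketch of the rotation, assuming NumPy, an even vector dimension, and the adjacent-pair layout of the RoFormer formulation (some implementations pair dimensions differently). The function name `rope` and the random vectors are illustrative.

```python
import numpy as np

def rope(x, position, base=10000.0):
    """Rotate each adjacent pair of dimensions of x by a position-dependent angle.

    Assumes x is a 1-D vector with an even number of dimensions.
    """
    d = x.shape[-1]
    freqs = base ** (-np.arange(0, d, 2) / d)  # one frequency per dimension pair
    angles = position * freqs
    cos, sin = np.cos(angles), np.sin(angles)
    x_even, x_odd = x[0::2], x[1::2]
    out = np.empty_like(x)
    out[0::2] = x_even * cos - x_odd * sin
    out[1::2] = x_even * sin + x_odd * cos
    return out

rng = np.random.default_rng(0)
q, k = rng.normal(size=8), rng.normal(size=8)  # hypothetical query and key

# Same offset of four, at two very different absolute positions.
score_near_start = rope(q, 3) @ rope(k, 7)
score_shifted = rope(q, 103) @ rope(k, 107)
# A different offset (five) for comparison.
score_other_gap = rope(q, 3) @ rope(k, 8)

print(np.isclose(score_near_start, score_shifted))    # same offset, same score
print(np.isclose(score_near_start, score_other_gap))  # different offset
```

Shifting both tokens by 100 positions leaves the attention score unchanged, which is exactly the kind of shift that hurts absolute embeddings.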

However, even RoPE isn't perfect. Researchers from MIT highlighted a subtle flaw in December 2025. Because RoPE relies on fixed mathematical rotations, words separated by the same distance always get the same rotational angle. It doesn't matter what the words are; the math remains identical for a distance of five tokens. This creates issues in languages with flexible word orders, like Latin or Japanese, where grammar rules aren't tied strictly to linear proximity. The 2025 study noted a 12.8% higher error rate when processing Latin text compared to English, suggesting that rigid distance-based encoding limits linguistic nuance.
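The "same distance, same angle" behavior follows directly from how rotations compose. As a sketch for a single two-dimensional pair with frequency $\theta$ (the symbols $q$, $k$, $m$, $n$, and $R$ are notation introduced here, not taken from the study), let $R(\alpha)$ be the rotation by angle $\alpha$, and let the query sit at position $m$ and the key at position $n$:

$$
\big(R(m\theta)\,q\big)^{\top}\big(R(n\theta)\,k\big)
= q^{\top} R(m\theta)^{\top} R(n\theta)\,k
= q^{\top} R\big((n-m)\theta\big)\,k
$$

The last step uses $R(\alpha)^{\top} = R(-\alpha)$ and $R(\alpha)R(\beta) = R(\alpha+\beta)$. The angle $(n-m)\theta$ depends only on the offset $n-m$ and the fixed frequency $\theta$. Nothing about the content of $q$ or $k$ can change it.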

Understanding Position Generalization

Despite these limitations, Large Language Models have shown surprising robustness. A concept known as "position generalization" emerged from studies in early 2025. Researchers discovered that transposing up to 5% of word positions in input text causes only marginal increases in perplexity, roughly 1.8% to 3.2% on average. GPT-4, for instance, showed just a 1.9% performance degradation on GLUE benchmark tasks even when the word order was significantly shuffled.
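The article does not describe how the transpositions were generated. One plausible way to build such a perturbed input, assumed here purely for illustration, is to swap randomly chosen adjacent pairs until about 5% of positions have moved. The function name `transpose_fraction` is hypothetical, and the sketch covers only the perturbation step, not the perplexity measurement.

```python
import random

def transpose_fraction(tokens, fraction=0.05, seed=0):
    """Swap non-overlapping adjacent pairs so that about `fraction` of positions move."""
    rng = random.Random(seed)
    tokens = list(tokens)
    n_swaps = round(len(tokens) * fraction / 2)      # each swap moves two positions
    candidates = list(range(0, len(tokens) - 1, 2))  # pair starts: 0, 2, 4, ...
    for i in rng.sample(candidates, min(n_swaps, len(candidates))):
        tokens[i], tokens[i + 1] = tokens[i + 1], tokens[i]
    return tokens

original = [f"w{i}" for i in range(200)]  # placeholder tokens
perturbed = transpose_fraction(original, fraction=0.05)

moved = sum(a != b for a, b in zip(original, perturbed))
print(moved / len(original))  # prints: 0.05
```

Feeding `original` and `perturbed` to the same model and comparing perplexity would be the next step in an experiment of this kind.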

This suggests that while positional encodings are vital, the models also learn semantic patterns that partially compensate for order confusion. The weighted-sum operation in attention heads doesn't perfectly eliminate positional distinctions, but it also doesn't rely on them exclusively. Some experts argue this means future models might not need such rigid encoding schemes. However, other analyses suggest that current successes might be masking underlying fragility that appears when handling complex reasoning tasks requiring strict structural adherence.

Future Directions: Positional Memory

The field is moving rapidly toward hybrid approaches. David Bau from MIT challenged conventional wisdom by proposing "positional memory." Instead of just tracking static distances or absolute spots, this new method aims to model how meaning changes along the path between words. It captures cumulative effects along token paths rather than just immediate proximity.

This innovation addresses the "fixed rotation" problem identified in earlier RoPE implementations. By incorporating contextual awareness into positional signals, models can better handle multilingual contexts where word order flexibility varies. Industry analysts predict that by 2027, roughly 65% of enterprise LLM deployments will use hybrid methods combining RoPE with context-aware memory. This marks a significant shift from the 12% adoption rate seen in 2025.

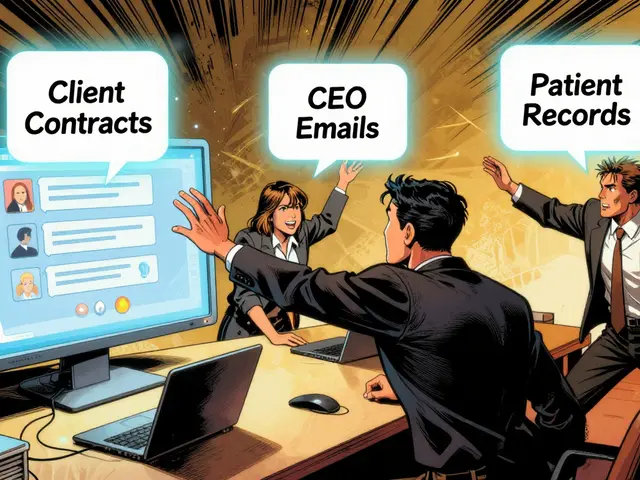

Practically speaking, developers need to balance semantic and positional weighting. For standard language modeling, the optimal split is about 67% semantic and 33% positional information. But for structured reasoning tasks, like coding or math, the scale tips to favor positional data, needing around 58% to 62% weighting for the best results. Getting this ratio wrong can lead to "attention collapse," especially in long sequences exceeding 4096 tokens, where positional signals might overwhelm the actual content.
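The article does not define how this split is measured or applied. One possible reading, offered here only as an assumption, is a convex combination of content-based attention logits and a distance-based positional bias. The sketch below illustrates that reading with NumPy. The function name, the linear distance penalty, and the way the weights enter are all hypothetical.

```python
import numpy as np

def mixed_attention_logits(content_scores, w_semantic=0.67):
    """Blend content logits with a simple distance bias (hypothetical scheme).

    content_scores: (n, n) matrix of query-key scores.
    w_semantic:     share given to content; the remainder goes to position.
    """
    n = content_scores.shape[0]
    idx = np.arange(n)
    # Assumed positional term: closer tokens get a higher (less negative) bias.
    distance_bias = -np.abs(idx[:, None] - idx[None, :]).astype(float)
    w_positional = 1.0 - w_semantic
    return w_semantic * content_scores + w_positional * distance_bias

content = np.zeros((4, 4))  # placeholder content scores
language_modeling = mixed_attention_logits(content, w_semantic=0.67)
structured_reasoning = mixed_attention_logits(content, w_semantic=0.40)

# With a larger positional share, distant tokens are penalized more strongly.
print(language_modeling[0, 3] > structured_reasoning[0, 3])  # prints: True
```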

Why do Transformers need positional embeddings?

Transformers process all tokens in parallel rather than sequentially. Unlike RNNs, they lack a built-in sense of order. Positional embeddings inject coordinates to inform the model where each word sits in the sequence, allowing it to distinguish between sentences with identical words but different meanings.

What is the main drawback of Absolute Position Embeddings?

They cause models to over-rely on fixed indices. Meta AI research showed that shifting sentence start positions leads to a 23.7% accuracy drop. They also struggle to generalize beyond their original training sequence lengths.

How does RoPE improve upon previous methods?

RoPE rotates query and key vectors so that attention scores depend on the relative distance between tokens. This allows for better extrapolation to longer sequences. LLaMA-2 showed only a 4.7% perplexity increase on double-length sequences compared to 21.3% for absolute methods.

Can LLMs read text with scrambled words?

Yes, to an extent. Studies indicate that transposing up to 5% of word positions only marginally affects perplexity (around 1.8-3.2%). This is due to "position generalization," where semantic learning compensates for minor order errors.

What is the future of positional encoding?

The trend is toward hybrid methods. Experts predict 65% of enterprise deployments will combine RoPE with context-aware "positional memory" by 2027 to better handle multilingual nuances and reduce rigidity in distance calculations.

5 Comments

Stephanie Serblowski

Honestly the shift from absolute to rotary encoding makes sense from a scaling perspective.

The extrapolation benefits alone justify moving away from index-based lookups.

But let's be real here, the math gets kinda messy fast.

When you look at the BLEU score improvements in the table, it's barely noticeable for short contexts.

People panic over the 23.7% drop metric without considering the base case scenarios.

Sure the positional memory concept sounds cool but implementation is tricky.

I'm guessing most devs will just copy-paste the existing libraries until it breaks.

We always wait for the enterprise adoption to force standards into place.

Maybe in 2027 everyone will finally agree on the optimal weighting split.

Until then let's enjoy watching the perplexity curves go up and down.

🙄 It will probably work out fine eventually.

Just don't expect perfect semantic alignment anytime soon though.

Renea Maxima

I suppose the universe is just a big transformer architecture waiting to be decoded 🤔.

Sagar Malik

The mathematical underpinnings of rotary embeddings are clearly designed to obfuscate the true intent behind sequence processing.

People ignore the subtle signal leakage when tokens rotate through complex space.

It feels like the corporate giants are hiding something fundamental about the architecture.

We cannot trust the open weights released by these labs without further audit.

The permutation invariant nature suggests they want us to lose track of order entirely.

The introduction of rigid systems implies a desire to obscure true sequence handling.

The researchers claim efficiency but the computational overhead tells a different story.

Absolute positions were too transparent for their purposes.

Now we rely on angles that shift based on hidden parameters nobody sees.

It creates a dependency that forces us to download newer updates constantly.

If the rotation changes then the whole meaning shifts unexpectedly.

I notice this pattern in every major release cycle recently.

They want standardisation across all global language models simultaneously.

Control over infrastructure determines who benefits from standardised language outputs.

Our privacy is sacrificed for marginally better perplexity scores on benchmarks.

We need independent verification of the rotation matrices before deployment.

Transparency is key for actual scientific progress in this domain.

Until then we must remain sceptical of official documentation claims.

Jeremy Chick

Stop spinning these wild stories about government tracking through embeddings.

You sound like a conspiracy nut who has read too much forum gossip online.

The paper from Meta was solid and explained the brittleness issues clearly.

RoPE is better than what we had before, period.

Stop trying to find shadows where there are none.

Focus on the actual code instead of making stuff up.

Seraphina Nero

Jeremy does have a point about sticking to the facts.

Sometimes simple explanations help people understand the tech better.

Positional encodings are just helpers for the computer really.

They make sure words stay in the right order mostly.

No need to worry about secret codes or hidden meanings inside.

Most of us just want to know if the model works well enough.

Keeping things simple helps the community learn faster too.

We should all focus on building useful tools together.