Imagine asking an intelligent assistant for a legal citation, only to receive a perfectly formatted law that never existed. This scenario isn't science fiction. It is the daily reality for many teams deploying Generative AI, the class of artificial intelligence technology designed to create content such as text, code, and images. As of March 2026, these systems have become powerful, yet factual errors remain a significant hurdle. These errors, known as hallucinations, occur when a system confidently presents false information as true. Fixing them isn't just a matter of tweaking settings; it requires a fundamental shift in how we build and evaluate these models.

Understanding the Nature of Fabricated Facts

You need to grasp why these mistakes happen before you can stop them. Traditional software bugs produce predictable failures. AI hallucinations are different: they stem from the very way these models are built. The models function as probabilistic prediction engines. When you ask a question, the model doesn't look up a fact in a database. Instead, it estimates a probability for each possible next token (a word or word fragment) based on patterns learned during training, and then picks one.
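The toy sketch below illustrates one decoding step. It shows that the model predicts rather than looks up. The candidate tokens and their scores are invented for illustration. A real model scores a vocabulary of tens of thousands of tokens, and the `softmax` and `sample_next_token` helpers here are simplified stand-ins, not any library's API.

```python
import math
import random

def softmax(scores: dict[str, float]) -> dict[str, float]:
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - top) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def sample_next_token(scores, temperature=1.0, rng=random):
    """Pick one token at random, weighted by its probability.

    A lower temperature sharpens the distribution toward the top-scoring token.
    """
    probs = softmax({tok: s / temperature for tok, s in scores.items()})
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]

# Hypothetical scores for the token after "The statute was enacted in"
scores = {"1998": 2.1, "2001": 1.9, "2004": 1.2, "[not sure]": -1.0}
probs = softmax(scores)
print(probs)
print(sample_next_token(scores, temperature=0.7, rng=random.Random(42)))
```

Nothing in this step checks whether any of these years is correct. "1998" is simply the most probable continuation. Because sampling is random, even that choice is made only part of the time.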

This approach creates a tension between creativity and accuracy. The model prioritizes fluency and plausibility over strict truth. Sometimes, to complete a sentence smoothly, it invents details that fit the narrative pattern even if those details are false. This happens frequently with Large Language Models (LLMs), advanced AI systems trained on vast amounts of text data. LLMs generate text token by token, and each token is conditioned on everything before it. As a result, a small error early in a response can cascade into increasingly confident misinformation as the response or conversation grows longer.
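A back-of-the-envelope calculation shows why length matters. This sketch makes a simplifying assumption purely for illustration: each token carries the same small, independent chance of introducing an error. In practice errors are correlated, because later tokens condition on earlier ones, and that correlation is how a single mistake cascades. The 0.1% per-token rate is hypothetical.

```python
def prob_error_free(per_token_error: float, n_tokens: int) -> float:
    """Chance that n tokens contain no error, assuming independent errors."""
    return (1.0 - per_token_error) ** n_tokens

# Hypothetical per-token error rate of 0.1%
for n in (50, 200, 1000):
    print(n, round(prob_error_free(0.001, n), 3))
```

Under this assumption, a 1,000-token answer comes out error-free only about 37 percent of the time. Even a very small per-token error rate therefore adds up over long outputs.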

Core Drivers of Inaccurate Outputs

Several underlying factors push these systems toward fabrication. First, consider the training data itself. If the source material contains bias, outdated information, or deliberate falsehoods, the model learns these patterns as normal. During pre-training, the system ingests massive volumes of text from the open web. Without rigorous screening, it absorbs myths alongside facts.

Second, architectural limitations play a huge role. The context window is the maximum amount of text, measured in tokens, that the model can process at once, and it is finite. When a query requires information outside this window, the model struggles. It might fill the gap with assumptions rather than admitting ignorance. Additionally, evaluation metrics often reward confidence. Suppose a system is graded only on whether it answers correctly, with no penalty for wrong answers. It then learns that guessing beats saying "I don't know." This structural incentive actively encourages hallucinations.
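A small expected-value calculation makes this incentive precise. The scoring rule below is a hypothetical example and does not correspond to any specific benchmark.

```python
def expected_score(p_correct: float, abstain: bool, wrong_penalty: float = 0.0) -> float:
    """Expected points for one question under a simple scoring rule.

    Correct answer: +1. Abstaining ("I don't know"): 0.
    Wrong answer: -wrong_penalty (0 means accuracy-only grading).
    """
    if abstain:
        return 0.0
    return p_correct - (1.0 - p_correct) * wrong_penalty

p = 0.2  # the model is only 20% likely to be right

abstain_score = expected_score(p, abstain=True)
# Accuracy-only grading: any nonzero chance of being right beats abstaining.
guess_plain = expected_score(p, abstain=False)
# Penalizing wrong answers by 1 point makes abstaining the better choice here.
guess_penalized = expected_score(p, abstain=False, wrong_penalty=1.0)
print(abstain_score, guess_plain, guess_penalized)
```

Suppose each wrong answer costs w points. Guessing then pays off only when p exceeds w / (1 + w). A penalty of 1 therefore sets the break-even confidence at 50 percent, and a model below that level should abstain.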

Practical Mitigation Strategies for Teams

So, how do you actually reduce this risk in your projects? There is no magic switch, but combining several proven techniques yields the best results. You should treat the following methods as a layered defense system.

Retrieval-Augmented Generation

One of the most effective defenses is grounding the model in verified knowledge. This technique, widely adopted in 2025 and matured further by now, involves retrieving relevant documents before generating an answer. Instead of relying solely on internal memory, the system searches an external knowledge base for supporting evidence. It then cites this evidence in its response.

This method keeps the model anchored to the provided facts. If the retrieval step finds no match, the system can be programmed to refuse to answer rather than guess. Fabrication drops substantially because answers are tied to retrieved evidence instead of the model's internal memory alone. It does not eliminate the risk, however. The model can still misread the retrieved text or add details the sources do not support.
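The retrieve-then-refuse flow can be sketched in a few lines. Several assumptions apply. Word overlap stands in for the embedding or search-index retrieval a production system would use. `KNOWLEDGE_BASE`, `retrieve`, `answer`, and the `min_overlap` threshold are all hypothetical. The final call to a language model is left out, and the function returns the grounded prompt it would send.

```python
import re

# Hypothetical in-memory knowledge base; a real system would query a search index
# or vector store instead.
KNOWLEDGE_BASE = [
    {"id": "doc-1", "text": "Refunds are issued within 14 days of a return."},
    {"id": "doc-2", "text": "Support is available Monday to Friday, 9am to 5pm."},
]

def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, min_overlap: int = 2) -> list[dict]:
    """Return documents sharing at least `min_overlap` words with the query, best first."""
    q = tokenize(query)
    scored = []
    for doc in KNOWLEDGE_BASE:
        overlap = len(q & tokenize(doc["text"]))
        if overlap >= min_overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored]

def answer(query: str) -> str:
    docs = retrieve(query)
    if not docs:
        # Refuse instead of letting the model guess from its weights.
        return "I don't know: no supporting document was found."
    context = "\n".join(f"[{d['id']}] {d['text']}" for d in docs)
    # In a real pipeline, this grounded prompt would be sent to the model.
    return f"Answer using only these sources and cite them:\n{context}\n\nQuestion: {query}"

print(answer("How many days until refunds are issued?"))
print(answer("What is the capital of France?"))
```

The refusal branch sits in ordinary code, before the model is ever called. The decision to abstain therefore does not depend on the model choosing to admit ignorance.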

Reinforcement Learning from Human Feedback

Another critical layer involves post-training adjustments. After the initial learning phase, engineers use human feedback to fine-tune behavior. Humans compare or rate different outputs for accuracy and helpfulness. These judgments train a reward model. The language model is then optimized with reinforcement learning to produce responses that the reward model scores highly, which favors answers that humans judged truthful. This brings the AI's behavior closer to real-world expectations of honesty.
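The sketch below covers only the reward-modeling step of human feedback tuning. It fits a reward function to pairwise human preferences using the Bradley-Terry objective, -log σ(r(preferred) − r(rejected)). A few assumptions keep it small. The reward model is linear. The two features per response are hypothetical stand-ins for what a neural reward model would learn from raw text. The later reinforcement-learning stage (for example, PPO) is not shown.

```python
import math

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def dot(w: list[float], x: list[float]) -> float:
    return sum(wi * xi for wi, xi in zip(w, x))

def train_reward_model(pairs, n_features, lr=0.5, epochs=200):
    """Fit r(x) = w . x from (preferred, rejected) feature pairs by gradient descent.

    Loss per pair: -log sigmoid(r(preferred) - r(rejected)).
    Its gradient w.r.t. w is -(1 - sigmoid(margin)) * (preferred - rejected).
    """
    w = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = dot(w, chosen) - dot(w, rejected)
            g = 1.0 - sigmoid(margin)
            for i in range(n_features):
                w[i] += lr * g * (chosen[i] - rejected[i])
    return w

# Hypothetical features: [cites_a_source, contains_unverified_claim]
# Each pair is (response a reviewer preferred, response they rejected).
pairs = [
    ([1.0, 0.0], [0.0, 1.0]),
    ([1.0, 0.0], [1.0, 1.0]),
    ([0.0, 0.0], [0.0, 1.0]),
]
w = train_reward_model(pairs, n_features=2)
print(w)
```

After training, the weight on "cites a source" is positive and the weight on "contains an unverified claim" is negative. These weights are the preference signal that the later reinforcement-learning stage would push the language model toward. If reviewers are inconsistent, that inconsistency is exactly what gets encoded here.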

However, this process requires high-quality human oversight. If the reviewers themselves are biased or inconsistent, the model may adopt those flaws. Organizations must invest in diverse review teams to ensure the feedback loop reinforces factual correctness across different domains.
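You can check reviewer consistency before the labels feed into training by measuring inter-rater agreement. Cohen's kappa is a standard chance-corrected agreement statistic for two raters. The labels below are hypothetical, and any threshold you apply to kappa is a policy choice you would need to set for your own domain.

```python
from collections import Counter

def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Agreement between two reviewers, corrected for agreement expected by chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used the same single label throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical accuracy judgments from two reviewers on the same four outputs
reviewer_1 = ["true", "true", "false", "false"]
reviewer_2 = ["true", "false", "false", "false"]
print(cohens_kappa(reviewer_1, reviewer_2))
```

A kappa near 1 means the reviewers agree far beyond chance. A value near 0 suggests the review guidelines are ambiguous and need tightening before the feedback is used.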

Prompt Engineering Techniques

You can also influence behavior through how you ask questions. Clear, specific prompts reduce ambiguity. Vague requests invite the model to fill gaps with invented details. Using chain-of-thought reasoning, where you ask the model to show its work step-by-step, can expose logic gaps before a final answer is formed.

For example, instead of asking "Summarize this report," try "Extract key findings supported by the text below." Explicitly instructing the model to cite sources or indicate uncertainty changes the probability distribution of the output tokens. It pushes the system away from confident speculation.
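A reusable template helps apply these instructions consistently. The sketch below wraps a task in instructions to stay within the source, cite support, reason step by step, and admit when the answer is missing. The wording and the `build_grounded_prompt` helper are illustrative assumptions, not a prompt format any particular model requires.

```python
def build_grounded_prompt(source_text: str, task: str) -> str:
    """Wrap a task in instructions that favor grounded, hedged answers.

    The exact wording is illustrative; adapt it to your model and domain.
    """
    return (
        "Use only the text between the <source> tags.\n"
        "For each claim, quote or cite the supporting sentence.\n"
        "Reason step by step before giving the final answer.\n"
        "If the source does not support an answer, reply exactly: "
        "\"Not stated in the source.\"\n\n"
        f"<source>\n{source_text}\n</source>\n\n"
        f"Task: {task}"
    )

prompt = build_grounded_prompt(
    source_text="Q3 revenue rose 4% while operating costs were flat.",
    task="Extract key findings supported by the source text.",
)
print(prompt)
```

The fixed refusal phrase also pays off later. Your pipeline can detect it with a simple string check, which separates honest "not found" answers from fabricated ones.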

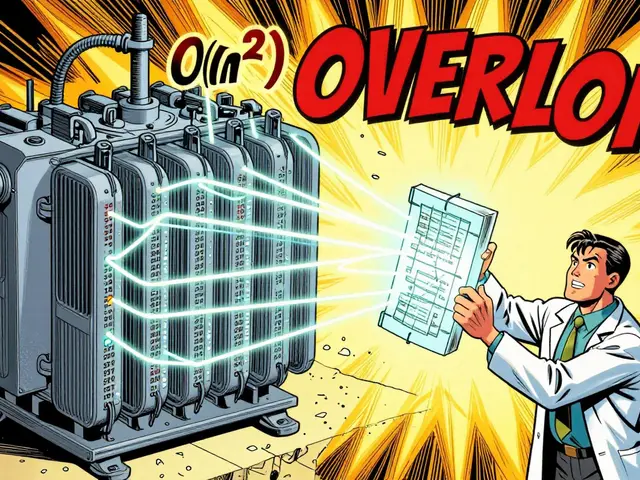

| Strategy | Effectiveness | Implementation Cost | Risk Reduction Level |
|---|---|---|---|
| Retrieval-Augmented Generation | High | Medium | Significant |
| Human Feedback Tuning | Medium | High | Moderate |
| Prompt Engineering | Low-Medium | Low | Volatile |
| Confidence Scoring | Medium | Medium | High |

Monitoring and Continuous Evaluation

Implementing strategies is only half the battle; you must also monitor performance continuously. Static testing isn't enough, because model behavior can drift over time. Establish automated pipelines that flag responses containing unverified claims. Tools that estimate confidence scores let you filter out low-certainty answers before users see them.
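One simple confidence signal comes from token log-probabilities, which many model APIs can return alongside the generated text. The sketch assumes you already have those per-token log-probabilities. It converts them to a geometric-mean probability and holds back anything below a threshold. The 0.6 threshold is a hypothetical starting point that you would need to tune on your own data.

```python
import math

def mean_token_confidence(token_logprobs: list[float]) -> float:
    """Geometric-mean probability of the generated tokens (0 if there are none)."""
    if not token_logprobs:
        return 0.0
    return math.exp(sum(token_logprobs) / len(token_logprobs))

def filter_response(text: str, token_logprobs: list[float], threshold: float = 0.6):
    """Return (text, confidence), or (None, confidence) if confidence is below threshold."""
    confidence = mean_token_confidence(token_logprobs)
    if confidence < threshold:
        return None, confidence
    return text, confidence

# Hypothetical log-probabilities for two generated answers
print(filter_response("Paris is the capital of France.", [-0.05, -0.1, -0.02]))
print(filter_response("The law was passed in 1987.", [-0.9, -1.6, -0.4]))
```

Treat this as a coarse filter, not a truth detector. A model can be fluent and highly confident about a fabricated claim, so token-level confidence works best combined with grounding checks and human review.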

Human review remains essential for high-stakes fields like healthcare or finance. Even with advanced safeguards, edge cases slip through. A hybrid workflow where the AI drafts and a human verifies ensures safety without sacrificing speed entirely. Regular audits help identify recurring patterns of error, allowing you to update training data or fine-tune parameters proactively.
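The hybrid workflow comes down to a routing rule: decide which AI drafts may ship directly and which must go to a human first. In the sketch below, drafts in high-stakes domains are always reviewed, and drafts elsewhere are reviewed only when confidence is low. The domain list, the `Draft` type, and the 0.8 cutoff are all hypothetical policy choices.

```python
from dataclasses import dataclass

HIGH_STAKES_DOMAINS = {"healthcare", "finance", "legal"}  # hypothetical policy

@dataclass
class Draft:
    text: str
    domain: str
    confidence: float  # a score between 0 and 1 from whatever estimator you use

def route(draft: Draft, min_confidence: float = 0.8) -> str:
    """Decide whether an AI draft can be published directly or needs a human check."""
    if draft.domain in HIGH_STAKES_DOMAINS:
        return "human_review"  # always verified, regardless of confidence
    if draft.confidence < min_confidence:
        return "human_review"
    return "auto_publish"

print(route(Draft("Dosage guidance ...", "healthcare", 0.99)))
print(route(Draft("Store opening hours ...", "retail", 0.95)))
```

Logging each routing decision together with the reviewer's verdict also gives you the raw material for the regular audits described above.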

Future Directions in Reliability

Looking ahead, research focuses on better uncertainty estimation. Ideally, models should be able to communicate their confidence levels explicitly. Imagine seeing a percentage score next to every claim. This capability exists in prototypes, but widespread commercial integration is still evolving. Another promising avenue is constitutional AI. In this approach, a written set of principles, much like a code of conduct, guides how the model critiques and revises its own outputs during training.
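A displayed confidence percentage is only useful if it is calibrated: claims marked "90%" should turn out true about 90 percent of the time. A common way to measure this is expected calibration error (ECE). ECE groups predictions by stated confidence and compares each group's average confidence with its actual accuracy. The sketch uses equal-width bins, one common variant, and the data in the usage lines is hypothetical.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between stated confidence and observed accuracy.

    confidences: model-reported probabilities in [0, 1]
    correct: booleans recording whether each answer was actually right
    """
    n = len(confidences)
    if n == 0 or n != len(correct):
        raise ValueError("need two equal-length, non-empty sequences")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(ok for _, ok in bucket) / len(bucket)
        ece += (len(bucket) / n) * abs(avg_conf - accuracy)
    return ece

# Hypothetical: ten claims all stated at 90% confidence
calibrated = expected_calibration_error([0.9] * 10, [True] * 9 + [False])
overconfident = expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5)
print(calibrated, overconfident)
```

The first model is right 9 times out of 10 and earns an ECE near zero. The second claims the same 90 percent confidence but is right only half the time, so it scores about 0.4.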

The industry is moving toward a standard where transparency reports detail accuracy metrics alongside performance benchmarks. Just as safety ratings matter for cars, reliability scores will matter for enterprise adoption of Generative AI. As 2026 progresses, expect more regulatory frameworks requiring proof of verifiability for public-facing systems.

Can hallucinations ever be completely eliminated?

Current research suggests total elimination is unlikely because of the probabilistic nature of neural networks. However, in controlled environments, Retrieval-Augmented Generation (RAG) and strict validation protocols can reduce the frequency of hallucinations to negligible levels.

What is the most cost-effective mitigation method?

Prompt engineering offers the lowest barrier to entry, but Retrieval-Augmented Generation provides the highest return on investment for reducing errors in production environments.

Does larger model size prevent errors?

Not necessarily. Larger models often exhibit higher fluency, which can make hallucinations sound more convincing. Size correlates with capability but does not guarantee factual accuracy without grounding techniques.

How do I test my own models for hallucinations?

Use gold-standard datasets with known ground truth. Run comparative tests measuring factual accuracy against reference answers rather than just stylistic quality.
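For short factual answers, a simple starting point is exact-match accuracy after light normalization. The normalization lowercases text, strips punctuation and articles, and collapses whitespace, similar to the normalization used in SQuAD-style evaluation. The question IDs and answers below are hypothetical, and a missing prediction counts as wrong.

```python
import re
import string

def normalize(answer: str) -> str:
    """Lowercase, strip punctuation and articles, and collapse whitespace."""
    text = answer.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())

def exact_match_accuracy(predictions: dict[str, str], gold: dict[str, str]) -> float:
    """Share of gold questions whose prediction matches the reference answer."""
    if not gold:
        raise ValueError("gold set is empty")
    hits = sum(
        normalize(predictions.get(qid, "")) == normalize(ref)
        for qid, ref in gold.items()
    )
    return hits / len(gold)

gold = {"q1": "Paris", "q2": "1969", "q3": "The Pacific Ocean"}
predictions = {"q1": "paris.", "q2": "1968", "q3": "pacific ocean"}
print(exact_match_accuracy(predictions, gold))
```

Exact match is strict by design. It scores the fabricated "1968" as wrong even though it looks plausible, which is the behavior you want when testing for hallucinations. For long, free-form answers, teams typically add human or rubric-based grading on top.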

Are there regulations regarding AI truthfulness in 2026?

Increasingly, yes. Emerging regulatory frameworks are adding transparency requirements for AI-generated content. Some also push for disclosure of uncertainty levels and for mechanisms that let users verify generated content, especially in regulated industries.

Dealing with these challenges requires patience and a multi-layered approach. "Trust, but verify" should remain the guiding principle for any organization integrating these powerful tools.
