Most people think transformers are slow because they’re heavy. That’s not entirely wrong, but the real issue isn’t model size. It’s the quadratic cost of attention. Every token in a sequence has to attend to every other token. For a 16,384-token document? That’s over 268 million attention pairs, per head, per layer. Multiply that across a full model and a batch, and many GPUs run out of memory before the job finishes. And yet, we keep trying to feed long documents, genomic sequences, and 4K images into models designed for short text. That’s where sparse attention and Performer variants come in. They don’t just make transformers faster. They make long-sequence transformers practical at all.

Why Standard Attention Breaks Down

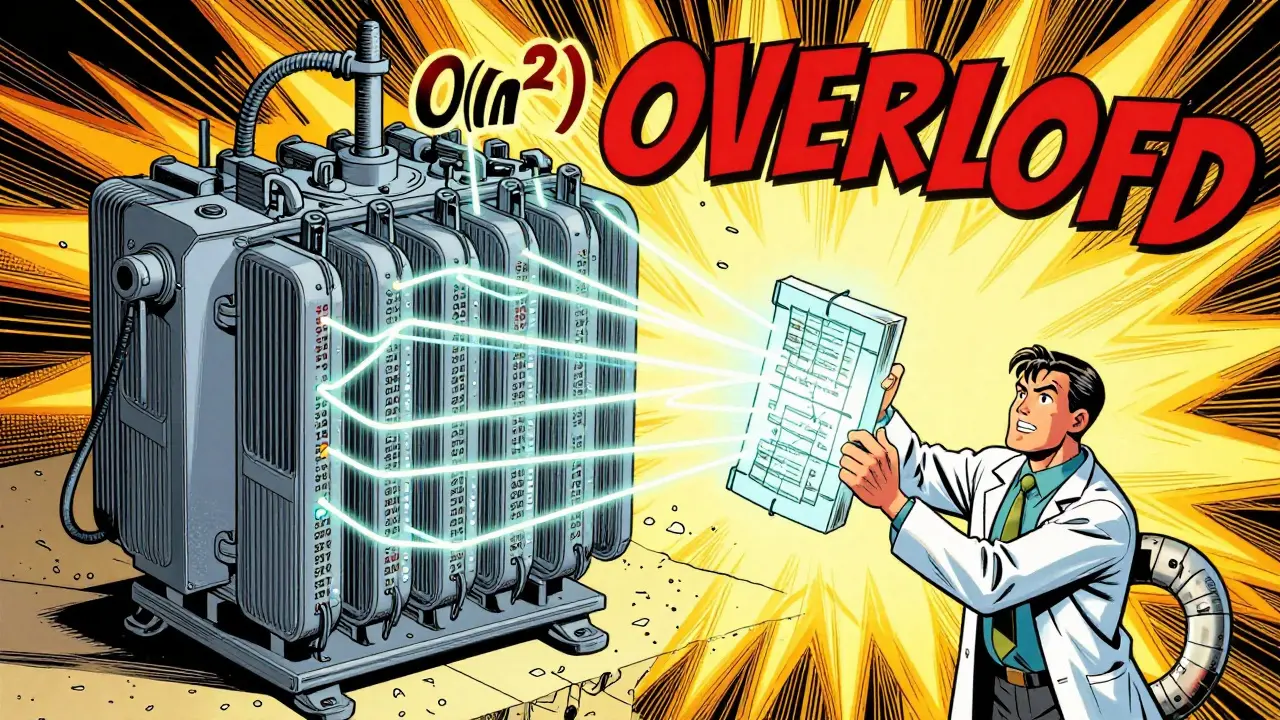

The self-attention mechanism in transformers is elegant but brutally inefficient. For every token, it computes a score against every other token. That’s O(n²) complexity. Simple math: double the sequence length, and memory usage quadruples. A 4,096-token sequence already needs about 64 MB for the FP32 attention weights of a single head in a single layer. Try 32,768 tokens? You’re looking at 4 GB per head per layer, which climbs into the terabytes for a model with hundreds of head-layer combinations if every score matrix is kept for backpropagation. No consumer GPU has that. Even enterprise data centers struggle.
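The quadratic growth is easy to check with a back-of-the-envelope estimate. The sketch below assumes FP32 scores (4 bytes each) and a hypothetical model with 16 heads and 24 layers; the function names are illustrative, and real frameworks may hold less or more than this depending on kernels and checkpointing.

```python
def dense_attention_bytes(seq_len, bytes_per_score=4, heads=1, layers=1, batch=1):
    """Bytes needed to hold every n x n attention score matrix at once."""
    return seq_len * seq_len * bytes_per_score * heads * layers * batch


def human(num_bytes):
    """Format a byte count with binary units."""
    for unit in ("B", "KiB", "MiB", "GiB", "TiB"):
        if num_bytes < 1024 or unit == "TiB":
            return f"{num_bytes:.1f} {unit}"
        num_bytes /= 1024


for n in (4_096, 16_384, 32_768):
    one_head = dense_attention_bytes(n)
    # 16 heads x 24 layers is an assumed model size, not a figure from the article.
    full_model = dense_attention_bytes(n, heads=16, layers=24)
    print(f"n={n:>6}: {human(one_head):>9} per head per layer, "
          f"{human(full_model):>9} across 16 heads x 24 layers")
```

Doubling `seq_len` multiplies every figure by four, which is the whole problem in one line.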

This isn’t theoretical. In healthcare, doctors need to analyze entire patient histories: thousands of lines of notes, lab results, imaging reports. Legal teams review contracts that span hundreds of pages. Genomic researchers work with DNA sequences longer than 100,000 tokens. Standard transformers simply can’t handle this. They truncate. They chop. And in doing so, they lose context that could mean the difference between a correct diagnosis and a missed cancer marker.

Sparse Attention: Cutting the Fat

Sparse attention doesn’t remove attention; it rethinks it. Instead of every token paying attention to every other token, it picks a smarter subset. Think of it like reading a book but only glancing at the paragraph before and after, plus a few key sentences from the beginning and end. You still get the gist, but you’re not rereading the whole thing every time.

There are four main patterns used today:

- Local/Windowed Attention: Each token only looks at its immediate neighbors, say 128 tokens ahead and behind. This drops complexity to O(nw), where w is the window size. For a 32K sequence with w=128? Each token scores 257 positions instead of 32,768, so attention memory shrinks by more than 100x.

- Strided Attention: Tokens connect at fixed intervals-every 8th, 16th, or 32nd token. This creates long-range bridges without the full cost. It’s like skipping every other page in a book to get the plot arc.

- Global Attention: A few tokens (usually 32) get to see everything. These are often special tokens like [CLS], [SEP], or paragraph starters. They act as summary points.

- Random Attention: Randomly sample a small number of token pairs across the sequence. Surprisingly, this works well. It’s statistical coverage: enough to capture patterns without computing everything.

The Allen Institute for AI’s Longformer combines local and global attention. Google’s BigBird adds random attention on top. OpenAI’s Sparse Transformer uses block-sparse patterns that slice attention matrices into chunks. All of them cut memory usage by 80-95% while keeping accuracy within 1-5% of dense models.
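The four patterns above can be combined into a single boolean mask. The following is a minimal sketch in plain Python, not the kernel any of these models actually ships: the function name, parameters, and defaults are all illustrative, and it assumes every entry in `global_positions` is a valid index.

```python
import random


def sparse_attention_mask(n, window=2, stride=0, global_positions=(), num_random=0, seed=0):
    """Return an n x n boolean matrix; mask[i][j] is True if token i may attend to token j."""
    rng = random.Random(seed)
    mask = [[False] * n for _ in range(n)]
    for i in range(n):
        # Local window: `window` neighbours on each side, plus the token itself.
        for j in range(max(0, i - window), min(n, i + window + 1)):
            mask[i][j] = True
        # Strided: every position a multiple of `stride` away from i.
        if stride > 0:
            for j in range(i % stride, n, stride):
                mask[i][j] = True
        # Random: a few extra positions sampled uniformly without replacement.
        for j in rng.sample(range(n), min(num_random, n)):
            mask[i][j] = True
    # Global: these tokens see everything and are seen by everything.
    for g in set(global_positions):
        for k in range(n):
            mask[g][k] = True
            mask[k][g] = True
    return mask


def density(mask):
    """Fraction of the full n x n matrix that is actually computed."""
    n = len(mask)
    return sum(sum(row) for row in mask) / (n * n)


mask = sparse_attention_mask(64, window=4, stride=16, global_positions=(0,), num_random=2)
print(f"computed fraction of the dense matrix: {density(mask):.1%}")
```

A production implementation would never build the dense mask; it would compute only the selected blocks. The mask form is still useful for debugging a pattern before writing a kernel for it.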

Performance Gains You Can Actually Measure

Numbers don’t lie. Here’s what real-world tests show:

- On the PubMedQA dataset (medical Q&A), Longformer scored 92.3% accuracy on 32K-token documents. Standard transformers, forced to truncate to 512 tokens, only hit 89.1%.

- BigBird achieved 85.7 F1 on TriviaQA-Random with 16K-token contexts. Dense models maxed out at 83.2, because they couldn’t even process the full context.

- For image generation, OpenAI’s Sparse Transformer handled sequences up to 65,536 tokens, 30 times longer than standard models. Accuracy stayed competitive on CIFAR-10 and ImageNet.

- On genomic data, 23andMe processed 100K+ token sequences for the first time using sparse attention. Before? Impossible.

Memory savings are just as dramatic. One developer on Reddit reported a 63% drop in GPU memory usage switching from dense to windowed attention for document summarization. Inference time fell from 47 seconds per document to 8.2 seconds. That’s not a tweak. That’s a game-changer for production systems.

The Performer: A Different Kind of Shortcut

Sparse attention still computes attention scores; it just limits which ones. Performer takes a radical leap: it never materializes the score matrix at all.

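Introduced by Google Research in 2020, Performer uses positive random feature maps (a mechanism its authors call FAVOR+) to approximate softmax attention. Locality-sensitive hashing (LSH) belongs to a different model, Reformer. Instead of calculating dot products between every key and query, Performer passes queries and keys through a random feature map whose inner products approximate the softmax kernel in expectation, so the keys and values can be summarized once and reused for every query. Think of it like summarizing a conversation with keywords instead of transcribing every word.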

The result? O(n) complexity: cost grows linearly with sequence length. For a 100K-token sequence, Performer uses 1/100th the memory of dense attention and runs 4x faster on TPU clusters. Its big win? It’s differentiable end-to-end. No hand-crafted patterns. No fixed windows. Just math.
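The kernel trick behind Performer-style attention can be sketched in plain Python. This is a simplified illustration, not the published implementation: it uses independent Gaussian projections rather than orthogonal ones, skips the numerical stabilization a real implementation needs, and handles only non-causal attention. All function names are illustrative.

```python
import math
import random


def random_features(x, omegas):
    """Positive random features: phi(x)_r = exp(w_r . x - |x|^2 / 2) / sqrt(m)."""
    half_sq_norm = sum(v * v for v in x) / 2.0
    m = len(omegas)
    return [math.exp(sum(w_i * x_i for w_i, x_i in zip(w, x)) - half_sq_norm) / math.sqrt(m)
            for w in omegas]


def linear_attention(queries, keys, values, num_features=256, seed=0):
    """Approximate softmax(QK^T / sqrt(d)) V without forming the n x n score matrix."""
    d, d_v = len(queries[0]), len(values[0])
    rng = random.Random(seed)
    # Assumption: plain i.i.d. Gaussian projections (no orthogonalization).
    omegas = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(num_features)]
    scale = d ** -0.25  # splits the 1/sqrt(d) softmax scaling between q and k

    # One pass over keys/values: accumulate sum_j phi(k_j) v_j^T and sum_j phi(k_j).
    kv = [[0.0] * d_v for _ in range(num_features)]
    k_sum = [0.0] * num_features
    for k, v in zip(keys, values):
        phi_k = random_features([scale * x for x in k], omegas)
        for r, f in enumerate(phi_k):
            k_sum[r] += f
            for c in range(d_v):
                kv[r][c] += f * v[c]

    # One pass over queries: every output reuses the same two summaries.
    outputs = []
    for q in queries:
        phi_q = random_features([scale * x for x in q], omegas)
        norm = sum(f * s for f, s in zip(phi_q, k_sum))
        outputs.append([sum(phi_q[r] * kv[r][c] for r in range(num_features)) / norm
                        for c in range(d_v)])
    return outputs


def softmax_attention(queries, keys, values):
    """Exact dense attention, used only as a reference for comparison."""
    d = len(queries[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        outputs.append([sum(w * v[c] for w, v in zip(weights, values)) / total
                        for c in range(len(values[0]))])
    return outputs
```

The key point is the loop structure: keys and values are visited once, queries are visited once, and nothing of size n x n is ever stored. Accuracy depends on `num_features` and on the magnitude of the inputs, so it is worth comparing against `softmax_attention` on a small sample before trusting the approximation.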

Recent updates like Performer-LSH v3 (Dec 2024) reportedly improved accuracy to 98.7% of dense attention on the Long Range Arena benchmark. That’s almost no loss, for a claimed 100x the efficiency.

Where They Shine (and Where They Don’t)

Sparse attention and Performer aren’t magic bullets. They’re tools for specific jobs.

Best for:

- Long documents (legal contracts, medical records, research papers)

- High-resolution images (4K+ pixels treated as sequences)

- Genomic and protein sequences (10K-1M+ tokens)

- Audio and video processing (time-series data over minutes, not seconds)

Worst for:

- Short text classification (sentiment analysis on tweets or product reviews)

- Tasks requiring fine-grained global context (e.g., understanding sarcasm in a 100-word paragraph)

One developer on GitHub noted a 7.3% accuracy drop when using Longformer for sentiment analysis, despite an architecture that otherwise closely follows BERT-style encoders. Why? Because global tokens weren’t tuned for short texts. The model lost nuance. In these cases, stick with dense attention.

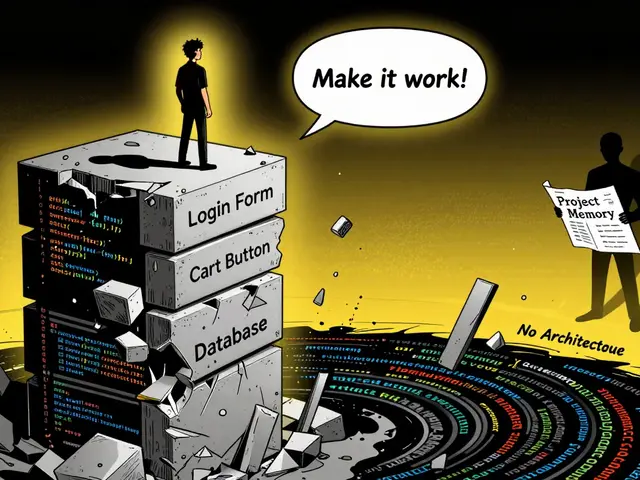

Implementation Challenges

Getting sparse attention to work isn’t plug-and-play. You need to:

- Choose the right pattern. Windowed? Global? Random? It depends on your data.

- Modify the attention kernel. Most frameworks (PyTorch, TensorFlow) don’t have built-in sparse attention. You’ll need custom code or rely on Hugging Face’s implementations.

- Tune hyperparameters. Window size, number of global tokens, and sampling rate all affect performance.

- Handle edge cases. What if your sequence is shorter than the window? What if global tokens are missing?
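The edge cases in the last item can be caught before any attention is computed. The helper below is a hypothetical sketch: its name, return shape, and fallback policy (use dense attention when the window covers the sequence, default to position 0 when no global tokens are given) are assumptions chosen for illustration, not the behavior of any particular library.

```python
def resolve_sparse_config(seq_len, window, global_positions):
    """Clamp the window to the sequence and validate global token indices.

    Returns (effective_window, sorted_global_positions, use_dense).
    """
    if seq_len <= 0:
        raise ValueError("seq_len must be positive")
    if window < 0:
        raise ValueError("window must be non-negative")

    # A window wider than the sequence adds nothing, so clamp it.
    effective_window = min(window, seq_len - 1)

    # If the window already spans the whole sequence, sparse attention is
    # just dense attention with extra bookkeeping.
    use_dense = effective_window >= seq_len - 1

    bad = [g for g in global_positions if not 0 <= g < seq_len]
    if bad:
        raise ValueError(f"global positions out of range for seq_len={seq_len}: {bad}")
    globals_sorted = sorted(set(global_positions))

    # Assumed policy: with no global tokens supplied, fall back to position 0.
    if not globals_sorted and not use_dense:
        globals_sorted = [0]
    return effective_window, globals_sorted, use_dense


print(resolve_sparse_config(10, 128, []))
print(resolve_sparse_config(1000, 128, [5, 5, 0]))
```

Failing loudly on out-of-range indices is a deliberate choice here: a silently dropped global token is much harder to diagnose than an exception.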

Documentation is uneven. Hugging Face’s Longformer has great docs (4.3/5 stars). Custom implementations? Often none. Reddit users report spending 2-4 weeks just to get a custom pattern working. Stack Overflow has over 140 unanswered questions on sparse attention in PyTorch.

Who’s Using This in the Real World?

It’s not just research anymore:

- Healthcare: 42% of large AI projects now use sparse attention for clinical text processing (HIMSS Analytics, Oct 2024).

- Legal Tech: 37% of legal AI platforms use it to analyze contracts and case law (Above the Law, 2024).

- Genomics: Companies like 23andMe and Illumina use it to process whole-genome sequences.

- AI Infrastructure: The market for transformer optimization tools hit $1.7B in Q3 2024 and is growing at 38.7% annually (Gartner).

Big players are betting on it. Google integrated sparse attention into Gemini 2.5. Allen Institute released Longformer v2 with dynamic windows. Even FlashAttention, a competitor, now supports hybrid sparse-dense modes.

The Future: Adaptive Attention

Experts agree: the next leap isn’t more sparsity. It’s adaptive sparsity.

Imagine a model that looks at your input and says: “This paragraph is dense with key terms-I’ll use global attention here. This section is repetitive-I’ll use windowed.” That’s what 78% of leading AI researchers predict will dominate by 2027 (AI Trends, Dec 2024).

Professor Yoshua Bengio put it bluntly at NeurIPS 2024: “Sparse attention solves today’s memory problem. But we still don’t know how to design attention patterns that match real data structure.”

That’s the next frontier. Not just making transformers faster, but making them smarter about where to look.

What’s the main advantage of sparse attention over standard transformers?

Sparse attention reduces memory and compute needs from O(n²) to O(nw) for windowed patterns (Performer-style linear attention reaches O(n)), making it possible to process sequences 10x-30x longer than standard transformers. For example, on a 32K-token document, windowed attention with 128 tokens on each side scores 257 positions per token instead of 32,768, cutting attention memory by more than 100x.
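The size of that reduction can be checked by counting scored pairs directly. This is a small sketch with illustrative function names; it counts query-key pairs only and ignores heads, layers, and any global or random tokens a real model would add.

```python
def windowed_pairs(n, w):
    """Count (query, key) pairs scored when each token sees w neighbours per side."""
    return sum(min(n - 1, i + w) - max(0, i - w) + 1 for i in range(n))


def dense_pairs(n):
    """Count (query, key) pairs scored by full attention."""
    return n * n


n, w = 32_768, 128
ratio = dense_pairs(n) / windowed_pairs(n, w)
print(f"dense:            {dense_pairs(n):,} pairs")
print(f"windowed (w={w}): {windowed_pairs(n, w):,} pairs")
print(f"reduction:        {ratio:.0f}x")
```

Tokens near the start and end of the sequence have fewer neighbours, so the windowed count is slightly below n times (2w + 1).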

Is Performer better than sparse attention?

It depends. Performer uses random feature maps to approximate attention with O(n) complexity, avoiding hand-designed patterns. It’s more flexible, with no windows or global tokens to choose. Sparse attention (like Longformer or BigBird) uses structured patterns that are easier to interpret and often perform better on specific tasks like document classification. Performer wins on speed and scalability; sparse attention wins on precision in structured data.

Can I use sparse attention with Hugging Face Transformers?

Yes. Hugging Face provides ready-to-use implementations of Longformer and BigBird. You can load them like any other model: AutoModel.from_pretrained("allenai/longformer-base-4096"). They’re compatible with standard training loops and inference pipelines.
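A minimal sketch of that loading path follows, assuming `torch` and `transformers` are installed and the checkpoint can be downloaded. The two helper functions are illustrative names, and giving global attention to the first token only is one common choice, not a requirement.

```python
def global_attention_positions(seq_len, positions=(0,)):
    """Return a 0/1 list marking which tokens get global attention."""
    wanted = set(positions)
    return [1 if i in wanted else 0 for i in range(seq_len)]


def encode_long_document(text, checkpoint="allenai/longformer-base-4096"):
    """Encode one document with Longformer and return its last hidden states."""
    # Imported lazily so the helper above works without these packages installed.
    import torch
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(checkpoint)
    model = AutoModel.from_pretrained(checkpoint)
    model.eval()

    inputs = tokenizer(text, return_tensors="pt", truncation=True, max_length=4096)
    seq_len = inputs["input_ids"].shape[1]
    # Mark the first token (the classification token) as global; all other
    # tokens use the model's local sliding window.
    global_mask = torch.tensor([global_attention_positions(seq_len)])

    with torch.no_grad():
        outputs = model(**inputs, global_attention_mask=global_mask)
    return outputs.last_hidden_state  # shape: (1, seq_len, hidden_size)
```

For question answering, the usual practice is to mark the question tokens as global instead, so every document token can attend to the question.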

Why does sparse attention sometimes perform worse on short texts?

Because it removes global context. Sparse models often limit attention to local windows or a few global tokens. In short texts, every word matters. If the global token isn’t well-placed or the window is too narrow, the model misses key relationships. For tweets or reviews, dense attention still works better.

What hardware is needed to train sparse attention models?

You can train sparse attention models on a single A100 or H100 GPU. Memory usage drops so dramatically that even 24GB VRAM cards can handle 16K-token sequences. Mixed-precision training (FP16) and attention recomputation further cut memory needs. No need for multi-GPU clusters unless you’re training massive models from scratch.

Are there any open-source tools to experiment with sparse attention?

Yes. The Hugging Face Transformers library includes Longformer and BigBird. Google’s Performer is available on GitHub. For custom patterns, check out the FlashAttention repo and the Sparse Transformer code from OpenAI. All are open-source, though how actively each is maintained varies.

Next Steps

If you’re working with long sequences (medical records, legal docs, genomic data, or high-res images), start with Longformer or BigBird. Use Hugging Face’s pre-trained models. Test on a small dataset first. Compare accuracy and speed against your current model. You’ll likely see faster inference and better results.
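A small harness makes that comparison repeatable. The sketch below is framework-agnostic and entirely illustrative: `compare_models` and its report format are hypothetical, and each candidate is assumed to be a callable that takes one document and returns a label.

```python
import statistics
import time


def compare_models(candidates, documents, labels, repeats=3):
    """Measure accuracy and median per-document latency for each candidate.

    `candidates` maps a name to a callable that takes one document and returns a label.
    """
    if not documents or len(documents) != len(labels):
        raise ValueError("documents and labels must be non-empty and the same length")
    report = {}
    for name, predict in candidates.items():
        latencies = []
        correct = 0
        for doc, label in zip(documents, labels):
            timings = []
            for _ in range(repeats):
                start = time.perf_counter()
                prediction = predict(doc)
                timings.append(time.perf_counter() - start)
            latencies.append(min(timings))  # best of `repeats` reduces timer noise
            correct += prediction == label
        report[name] = {
            "accuracy": correct / len(documents),
            "median_latency_s": statistics.median(latencies),
        }
    return report


# Toy stand-ins: a "truncating" model that sees only the first 20 characters
# and a "long-context" model that sees the whole document.
docs = ["marker at start", "x" * 50 + "marker"]
truth = [True, True]
results = compare_models(
    {"truncated": lambda d: "marker" in d[:20], "full": lambda d: "marker" in d},
    docs,
    truth,
)
for name, stats in results.items():
    print(name, stats)
```

On GPU models, remember to synchronize the device before stopping the timer, or the latency figures will measure kernel launch time rather than actual compute.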

If you’re pushing into 100K+ token territory, experiment with Performer-LSH v3. It’s less intuitive but more scalable. And if you’re building from scratch? Start with windowed attention; it’s the easiest to implement and debug.

The goal isn’t to make transformers smaller. It’s to make them capable of handling the data we actually have.

7 Comments

Dmitriy Fedoseff

This is exactly why I stopped trusting mainstream AI hype. They keep pretending transformers are universal tools when they're clearly designed for Twitter-length text. Sparse attention isn't a tweak-it's the first honest adaptation to real-world data. I've been using Longformer for legal document analysis since 2023, and the difference is night and day. No more truncating contracts into nonsense. We actually see the full context now. This isn't optimization. It's survival.

And don't get me started on Performer. The math is elegant, sure, but when you're dealing with a 500-page contract, you need structure, not statistical noise. Random projections don't care about clause dependencies. I'll take predictable windows over probabilistic magic any day.

Meghan O'Connor

You say 'O(n²) complexity' like it's some profound revelation. Have you ever actually tried implementing this? The Hugging Face docs are a joke. I spent three days trying to get BigBird to work without CUDA errors. The error messages? Half of them are from deprecated PyTorch versions. And don't even mention the 'global tokens'-they're just magic placeholders that sometimes work if you're lucky and your sequence length is divisible by 64. This whole field is held together by duct tape and hope.

Liam Hesmondhalgh

I'm Irish. We don't do 'transformers'. We do whiskey, rugby, and complaining about the weather. You're telling me some American tech bros built a model that can read a 100,000-token DNA sequence but can't spell 'definitely' right? And you call this progress? I've seen more coherent medical reports from my GP's handwritten notes than from these so-called 'long-context' models. Spare me the jargon. If it can't handle a 12-word sentence without hallucinating, it's not ready for genomics.

Patrick Tiernan

So like... you're saying we just need to pick a few tokens and ignore the rest? That's it? No wonder AI is so bad at everything. I tried using Longformer on my novel draft and it missed the entire subplot because it thought the middle chapters were 'repetitive'. I mean, come on. It's not a math problem-it's a storytelling problem. And you're treating literature like a spreadsheet. Next you'll tell me we can summarize Shakespeare with a random attention sample. I'm not even mad. I'm just disappointed.

Patrick Bass

I think the real issue here is the assumption that more context is always better. I've worked with clinical records. Sometimes the key detail is in the first line. Sometimes it's in the last. Sparse attention doesn't fix that-it just makes it easier to miss. I prefer the old way. Slow. Methodical. Human. Let the doctor read the whole thing. Let them think. Maybe the model should be a highlighter, not a replacement.

Tyler Springall

Let's be real. This isn't innovation. It's desperation. The entire transformer paradigm is built on a lie-that attention is the answer. But you can't just slap on a window and call it science. The Performer? A glorified hash table with a PhD. And don't get me started on the 'global tokens'. They're not tokens. They're placeholders for the model's existential crisis. We're not solving the attention problem-we're just hiding it under a rug of math. And the fact that people are calling this 'production-ready'? That's the real tragedy.

Colby Havard

While the technical merits of sparse attention and Performer variants are undeniably compelling, one must not overlook the philosophical implications of reducing human context to algorithmic approximations. The very notion that we can 'sample' relevance from a genomic sequence or legal contract presupposes an epistemological framework in which meaning is statistically derivable-a proposition that fundamentally undermines the hermeneutic tradition. To treat a 100,000-token DNA sequence as a sequence of tokens, rather than as an emergent, historically contingent biological narrative, is not efficiency-it is ontological reductionism. The true challenge is not computational; it is epistemic.