You’ve built a brilliant application that relies on an AI model to process data. You ask the model for a specific format, JSON, and you get... well, mostly JSON. But then, one user triggers a response with a missing comma, an unclosed bracket, or just plain text wrapped in markdown code blocks. Your parser crashes. The pipeline stops. It’s frustrating because the information is there; it’s just not usable by your software.

This is the classic "LLM output problem." Large Language Models are probabilistic engines designed to predict the next token, not to adhere to strict data structures. They don’t care about your database schema. To fix this, we move beyond simple instructions and use Schema-Constrained Prompts. This approach forces the model to produce valid, structured output that conforms to a predefined specification while the text is being generated, one token at a time.

Schema-Constrained Generation is a technical method that restricts an LLM's token generation process to ensure the output strictly adheres to a defined JSON schema, preventing malformed data at the source rather than correcting it after generation.

Why Standard Prompting Fails for Structured Data

Most developers start with naive prompting. You tell the model: "Output the result as JSON." Sometimes it works. Often, it doesn’t. Even if you add "Do not include any other text," the model might still wrap the JSON in `` ```json `` fence markers or hallucinate extra fields.

The core issue is that LLMs operate in token space, not structure space. When you ask for JSON, the model predicts tokens that *look* like JSON based on its training data. It doesn't inherently understand the logical constraints of a JSON object unless explicitly guided. Relying on post-generation parsing (trying to fix broken JSON after the fact) is inefficient and error-prone. If the JSON is invalid, `JSON.parse()` fails, and your application throws an exception.
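To make the failure mode concrete, here is a minimal sketch in Python, where `json.loads` plays the role that `JSON.parse()` plays in JavaScript. The reply text and the `strip_fence` helper are made up for illustration; the helper is the kind of after-the-fact repair this section argues against relying on.

```python
import json

FENCE = "`" * 3  # built programmatically so this example contains no literal fence

# Hypothetical model reply: correct data, wrapped in a markdown code fence.
raw_reply = FENCE + "json\n" + '{"name": "Ada", "age": 36}' + "\n" + FENCE


def try_parse(text: str):
    """Return the parsed object, or None if the text is not valid JSON."""
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return None


def strip_fence(text: str) -> str:
    """Best-effort repair: drop a leading and trailing fence line if present."""
    lines = text.strip().splitlines()
    if lines and lines[0].startswith(FENCE):
        lines = lines[1:]
    if lines and lines[-1].startswith(FENCE):
        lines = lines[:-1]
    return "\n".join(lines)


direct = try_parse(raw_reply)                 # fails: the reply starts with backticks
repaired = try_parse(strip_fence(raw_reply))  # works, but only for this one failure mode
trailing_comma = try_parse('{"name": "Ada", "age": 36,}')  # a different failure, still unhandled
```

Each new kind of breakage needs its own repair rule, which is why this approach tends to grow into a pile of special cases.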

Schema-constrained generation solves this by shifting the constraint from the *prompt* to the *decoding process*. Instead of hoping the model obeys rules, you mathematically restrict the tokens it can choose at each step.

The Mechanics: How Constraints Work Under the Hood

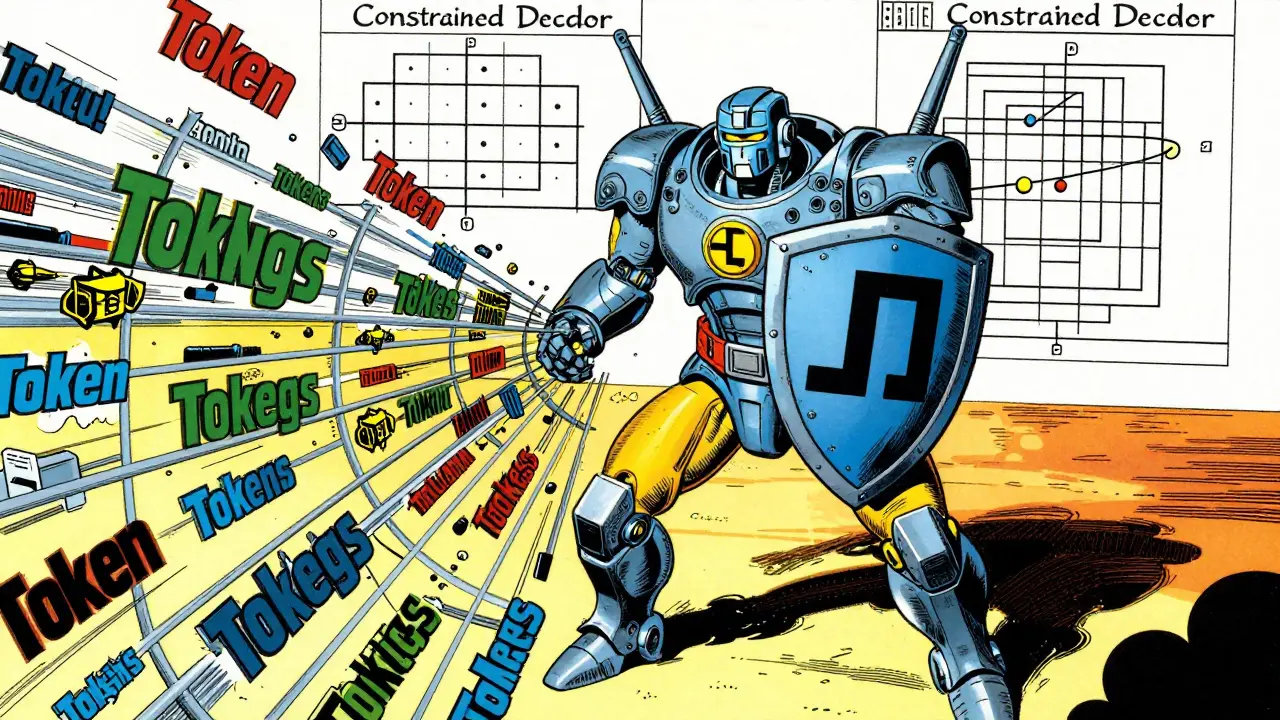

To force structured output, we use a technique called Constrained Decoding. Here’s how it works:

- Schema Definition: You define a JSON schema that outlines the exact structure you need. This includes required fields, data types (string, integer, boolean), nested objects, and arrays.

- Grammar Conversion: The system converts this JSON schema into a formal grammar or a Finite State Machine (FSM). Think of the FSM as a map where every state represents a specific point in the JSON structure (e.g., "expecting a key," "expecting a value," "expecting a closing brace").

- Token Filtering: As the model generates text, it produces probabilities for all possible next tokens. The constrained decoder looks at the current state of the FSM. It identifies which tokens are valid transitions from that state and masks out (sets probability to zero) all invalid tokens.

- Generation: The model selects from only the allowed tokens. This ensures that every character produced contributes to a valid JSON structure according to your schema.

This preventative approach removes most of the need for retry loops and repair code. As long as generation runs to completion (rather than being cut off by a token limit), the output is syntactically correct JSON that matches your schema.
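The four steps above can be sketched with a toy decoder. Everything here is a simplifying assumption: the vocabulary uses whole JSON fragments instead of subword tokens, the state machine is written by hand for one tiny schema instead of being compiled from it, and `fake_logits` stands in for a real model by returning fixed scores that favor a chatty, invalid token.

```python
import json
import math

# Toy vocabulary: each "token" is a whole JSON fragment to keep the sketch short.
VOCAB = ['{', '}', '"name"', '"age"', ':', ',', '"Ada"', '"Bob"', '36', '41', 'Sure!']

# Hand-written FSM for the schema {"name": string, "age": integer}.
# Maps state -> {allowed token: next state}.
FSM = {
    "start":      {'{': "key_name"},
    "key_name":   {'"name"': "colon_name"},
    "colon_name": {':': "val_name"},
    "val_name":   {'"Ada"': "comma", '"Bob"': "comma"},
    "comma":      {',': "key_age"},
    "key_age":    {'"age"': "colon_age"},
    "colon_age":  {':': "val_age"},
    "val_age":    {'36': "close", '41': "close"},
    "close":      {'}': "done"},
}


def fake_logits() -> dict[str, float]:
    """Stand-in for a model: fixed scores that prefer the invalid token 'Sure!'."""
    scores = {tok: 0.0 for tok in VOCAB}
    scores['Sure!'] = 5.0
    scores['"Bob"'] = 2.0
    scores['41'] = 1.0
    return scores


def mask(logits: dict[str, float], allowed) -> dict[str, float]:
    """Token filtering: invalid tokens get -inf, i.e. zero probability after softmax."""
    return {tok: (s if tok in allowed else -math.inf) for tok, s in logits.items()}


def constrained_decode() -> str:
    state, pieces = "start", []
    while state != "done":
        allowed = FSM[state]                      # valid transitions from this state
        masked = mask(fake_logits(), allowed)
        token = max(masked, key=masked.get)       # greedy pick among allowed tokens
        pieces.append(token)
        state = allowed[token]
    return "".join(pieces)


def unconstrained_first_token() -> str:
    logits = fake_logits()
    return max(logits, key=logits.get)


result = constrained_decode()
parsed = json.loads(result)
```

Without the mask, this toy model would open with `Sure!`. With it, the model's preferences still decide between `"Ada"` and `"Bob"`, but only among tokens the schema allows at that position.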

Implementing Schema Constraints: Tools and Libraries

You don’t need to build a Finite State Machine from scratch. Several libraries handle this complexity for you. For local LLM deployment, tools like the local-llm-function-calling library are popular. It provides a `JsonSchemaConstraint` class that accepts schemas similar to OpenAI’s specification.

Here’s what you can control:

- Data Types: Enforce strings, integers, floats, booleans, etc.

- Constraints: Set maximum lengths for strings, minimum/maximum values for numbers.

- Ordering: Use parameters like `enforceOrder` to ensure keys appear in a specific sequence.

- Nesting: Define complex hierarchical structures with nested objects and arrays.
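As a rough illustration of those controls, here is a hypothetical user-profile schema written as a Python dictionary. The keywords (`type`, `maxLength`, `minimum`, `maximum`, `items`, `required`) are standard JSON Schema; the field names are invented, and which keywords a given constrained-decoding library actually enforces is something to confirm in its documentation.

```python
import json

# Hypothetical schema for illustration; field names are not from any real system.
user_profile_schema = {
    "type": "object",
    "properties": {
        # Data types plus a length constraint on a string
        "name": {"type": "string", "maxLength": 80},
        # Numeric range constraint
        "age": {"type": "integer", "minimum": 0, "maximum": 120},
        "newsletter": {"type": "boolean"},
        # Nesting: an object inside the object
        "address": {
            "type": "object",
            "properties": {
                "city": {"type": "string"},
                "country": {"type": "string", "maxLength": 2},
            },
            "required": ["city", "country"],
        },
        # Nesting: an array of strings
        "tags": {"type": "array", "items": {"type": "string"}},
    },
    "required": ["name", "age", "address"],
}

# Serialized form, as it would be handed to a library or pasted into a prompt.
schema_text = json.dumps(user_profile_schema, indent=2)
```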

Another useful tool is LLM, the command-line tool from the creator of Datasette, which accepts schema definitions via command-line options. It supports simplified schema notation, making it easier to define quick structures without writing full JSON Schema documents.

The Trade-Off: Reliability vs. Semantic Accuracy

There’s a catch. While schema constraints guarantee structural validity, they do not guarantee semantic correctness. A model can produce perfectly valid JSON that contains nonsensical data. For example, a schema might require an "age" field to be an integer. The constraint will ensure the output is an integer, but unless the schema also bounds the range, it won’t stop the model from generating `-5` or `150`. Even a bounded value can simply be the wrong one.
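A separate semantic check can catch this kind of output. The sketch below assumes a hypothetical record with `age` and `signup_date` fields and picks arbitrary plausibility rules; the point is only that these checks run after parsing succeeds, on data that is already structurally valid.

```python
import json
from datetime import date


def semantic_errors(record: dict, today: date) -> list[str]:
    """Return human-readable problems; an empty list means the record looks sane."""
    errors = []
    age = record["age"]
    if not 0 <= age <= 120:  # assumed plausible range, adjust for your domain
        errors.append(f"age out of plausible range: {age}")
    signup = date.fromisoformat(record["signup_date"])
    if signup > today:
        errors.append(f"signup_date is in the future: {signup}")
    return errors


# Structurally valid JSON that a type-only schema would accept.
model_output = '{"age": -5, "signup_date": "2190-01-01"}'

# A fixed "today" keeps the example deterministic.
problems = semantic_errors(json.loads(model_output), today=date(2025, 1, 1))
clean = semantic_errors({"age": 36, "signup_date": "2024-06-01"}, today=date(2025, 1, 1))
```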

Additionally, constrained decoding can impact performance. Smaller models (like GPT-2 or early versions of Llama) often struggle with complex schemas, producing lower-quality content or running into dead ends where no allowed token fits. Larger models generally handle constraints better, but you may notice a slight degradation in creative reasoning or nuance compared to unconstrained prompting. The model is being forced down a narrow path, which can limit its ability to explore alternative phrasings.

Also, consider token efficiency. JSON schemas are verbose. Including a detailed schema in your prompt consumes significant context window space, which can be expensive and slow down inference.

Comparison of Structured Output Techniques

Schema-constrained generation isn’t the only way to get structured data. Here’s how it compares to other methods:

| Method | Reliability | Complexity | Semantic Quality | Best For |
|---|---|---|---|---|
| Naive Prompting | Low | Low | High | Quick prototypes, non-critical data |
| Prompt Engineering + Parsing | Medium | Medium | High | Simple structures, flexible formats |
| JSON Mode (API) | High | Low | Medium | Cloud-based APIs with native support |
| Function Calling | High | Medium | Medium | Tool use, API integrations |
| Schema-Constrained Generation | Very High | High | Variable | Critical pipelines, strict data validation |
| AST Parsing / Retries | Medium | High | High | Fallback mechanisms, complex repairs |

When to Use Schema-Constrained Prompts

You should reach for schema constraints when reliability is non-negotiable. Common use cases include:

- User Profile Generation: Extracting name, email, and age from unstructured text into a database-ready format.

- Resume Parsing: Converting diverse resume formats into a standardized JSON structure for HR systems.

- Data Extraction: Pulling specific entities (dates, prices, locations) from documents for analysis.

- API Response Formatting: Ensuring backend services receive exactly the payload shape they expect.

If your application can tolerate occasional parsing errors, simpler methods like prompt engineering with clear examples might suffice. But if a failed parse means lost revenue or corrupted data, schema constraints are worth the setup effort.

Best Practices for Implementation

To get the most out of schema-constrained generation, follow these guidelines:

- Keep Schemas Simple: Avoid overly complex nested structures if possible. Simpler schemas are easier for models to navigate and less prone to edge-case failures.

- Validate Semantics Separately: Since constraints only check structure, add a secondary validation layer to check for logical consistency (e.g., ensuring dates are in the past).

- Use Larger Models: Smaller models often lack the contextual understanding to fill schema fields accurately. Reserve constrained generation for models with sufficient parameter counts.

- Test Edge Cases: Try inputs that might confuse the model, such as missing information or ambiguous contexts, to see how the constraint handles gaps.

- Monitor Token Usage: Be aware that schema definitions consume context. Optimize your schemas to remove unnecessary comments or redundant definitions.
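For the last point, a small sketch of trimming a schema before it goes into a prompt. The schema and the list of annotation keys are assumptions, and character count is only a rough proxy for tokens. Descriptions sometimes help the model fill fields correctly, so it is worth measuring quality before stripping them.

```python
import json

# Keys that annotate a schema without constraining it (assumed list, extend as needed).
ANNOTATION_KEYS = {"description", "title", "$comment", "examples"}

# Hypothetical schema; note that "title" is also a real property name here.
schema = {
    "type": "object",
    "description": "A blog post record",
    "properties": {
        "title": {"type": "string", "description": "Headline of the post"},
        "views": {"type": "integer", "description": "Page views to date"},
    },
    "required": ["title", "views"],
}


def strip_annotations(node):
    """Recursively drop annotation keys while keeping property names intact.

    Limitation: other name-keyed maps (e.g. "$defs") are not special-cased.
    """
    if isinstance(node, list):
        return [strip_annotations(item) for item in node]
    if not isinstance(node, dict):
        return node
    out = {}
    for key, value in node.items():
        if key in ANNOTATION_KEYS:
            continue
        if key == "properties" and isinstance(value, dict):
            # Property names are data, not annotations: keep every one of them.
            out[key] = {name: strip_annotations(sub) for name, sub in value.items()}
        else:
            out[key] = strip_annotations(value)
    return out


pretty = json.dumps(schema, indent=2)
compact = json.dumps(schema, separators=(",", ":"))
stripped = strip_annotations(schema)
minimal = json.dumps(stripped, separators=(",", ":"))

sizes = {"pretty": len(pretty), "compact": len(compact), "minimal": len(minimal)}
```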

What is the difference between JSON mode and schema-constrained generation?

JSON mode is a feature provided by some LLM APIs that forces the model to output valid JSON. However, it doesn't enforce a specific schema; it just ensures the syntax is correct. Schema-constrained generation goes further by enforcing specific fields, data types, and structures defined in a JSON schema, providing much tighter control over the output format.
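The difference shows up as soon as you check shape as well as syntax. In this sketch both replies are invented, and `matches_shape` is a deliberately tiny hand-rolled check for one hypothetical target shape, not a JSON Schema validator.

```python
import json

# Hypothetical target shape: exactly these keys, with these Python types.
REQUIRED = {"name": str, "age": int}


def matches_shape(obj) -> bool:
    """True only if obj has exactly the required keys with the required types."""
    return (
        isinstance(obj, dict)
        and set(obj) == set(REQUIRED)
        and all(type(obj[key]) is expected for key, expected in REQUIRED.items())
    )


# Valid JSON, but the wrong key name and a string where an integer is expected.
syntax_only_reply = json.loads('{"full_name": "Ada Lovelace", "age": "36"}')

# Valid JSON that also has the expected shape.
schema_shaped_reply = json.loads('{"name": "Ada Lovelace", "age": 36}')

syntax_only_ok = matches_shape(syntax_only_reply)
schema_shaped_ok = matches_shape(schema_shaped_reply)
```

Both replies parse without error, so a syntax-only guarantee treats them the same; only the second one is safe to hand to code that expects `name` and an integer `age`.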

Can schema constraints prevent hallucinations?

No. Schema constraints only enforce structural validity. They ensure the output is valid JSON that matches your schema, but they cannot verify if the content is factually correct or logically sound. A model can still hallucinate values within the allowed structure.

Is schema-constrained generation slower than normal prompting?

Yes, typically. The additional step of filtering tokens against a Finite State Machine adds computational overhead. The impact varies depending on the complexity of the schema and the size of the model, but you should expect a noticeable increase in latency compared to unconstrained generation.

Which libraries support schema-constrained generation for local LLMs?

Popular options include the local-llm-function-calling library, which integrates with HuggingFace models, and the LLM command-line tool from the creator of Datasette. These tools handle the conversion of JSON schemas into grammars and manage the token filtering process for you.

What happens if the model cannot satisfy the schema?

If the model runs out of valid tokens to choose from (a dead end in the Finite State Machine), the generation process will halt or return an incomplete result. This is rare with well-designed schemas but can happen with overly restrictive constraints or insufficient context in the prompt.