To understand the scale, we have to look at generative AI, a branch of machine learning that creates new content, such as text or images, using models with billions of parameters. While these tools are incredibly useful, they are among the most energy-intensive applications ever deployed. We aren't just talking about a few extra servers; we're talking about a fundamental shift in how much power the internet requires to function.

The Electricity Hunger: From Training to Inference

There are two distinct stages where AI burns through power: training and inference. Training is the 'schooling' phase. To build a model like GPT-4, thousands of GPUs (Graphics Processing Units, specialized hardware designed to handle the massive parallel calculations required for AI training) must run at full tilt for months. For example, training GPT-3 consumed about 1,287 megawatt-hours of electricity. To put that in perspective, that's like powering roughly 120 average American homes for an entire year just to get one model up and running.
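The household comparison is simple division, and a quick sketch makes the assumption behind it visible. The 1,287 MWh figure comes from the text above; the per-home figure of about 10.7 MWh per year is an assumption added here for illustration, and the function name is hypothetical.

```python
# Figure from the text: electricity used to train GPT-3, in megawatt-hours.
TRAINING_ENERGY_MWH = 1_287

# Assumption (not from the text): an average US home uses about 10.7 MWh per year.
HOME_USE_MWH_PER_YEAR = 10.7


def homes_powered_for_a_year(energy_mwh: float,
                             per_home_mwh: float = HOME_USE_MWH_PER_YEAR) -> float:
    """Return how many homes the given energy could supply for one year."""
    if per_home_mwh <= 0:
        raise ValueError("per_home_mwh must be positive")
    return energy_mwh / per_home_mwh


if __name__ == "__main__":
    homes = homes_powered_for_a_year(TRAINING_ENERGY_MWH)
    print(f"About {homes:.0f} homes for one year")
```

Change the per-home figure and the comparison shifts with it, which is why household equivalents are best read as rough illustrations rather than measurements.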

But the real stealth cost is inference: the act of actually using the AI. Every time you ask an AI to write an email or generate a picture, it triggers a burst of computation. Research from MIT suggests that a single query to a generative AI can consume five to ten times more electricity than a traditional Google search. When you multiply that by millions of users daily, the energy demand skyrockets. In North America alone, data center power requirements nearly doubled between 2022 and 2023, jumping from 2,688 megawatts to 5,341 megawatts.

| Action | Relative Energy Scale | Primary Driver |
|---|---|---|
| Standard Web Search | 1x (Baseline) | Index retrieval |
| AI Chat Query | 5x - 10x | Token generation & neural network activation |
| Model Training | Millions of times higher | Continuous GPU clusters for months |
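To see how a per-query multiplier turns into a grid-level number, here is a minimal sketch. The 5x to 10x range and the megawatt figures come from the text; the baseline of 0.3 Wh per search and the volume of 10 million queries per day are hypothetical inputs chosen only to illustrate the arithmetic.

```python
# From the text: an AI chat query costs roughly 5x to 10x a standard web search.
AI_MULTIPLIER_RANGE = (5, 10)

# Hypothetical inputs, chosen only for illustration (not from the text).
BASELINE_SEARCH_WH = 0.3       # assumed energy per web search, in watt-hours
QUERIES_PER_DAY = 10_000_000   # assumed daily query volume

# From the text: North American data center power requirements, in megawatts.
POWER_2022_MW = 2_688
POWER_2023_MW = 5_341


def daily_energy_mwh(queries: int, wh_per_query: float) -> float:
    """Convert a per-query cost in Wh into a daily total in MWh."""
    return queries * wh_per_query / 1_000_000


def growth_factor(before: float, after: float) -> float:
    """Return how many times larger `after` is than `before`."""
    return after / before


if __name__ == "__main__":
    baseline = daily_energy_mwh(QUERIES_PER_DAY, BASELINE_SEARCH_WH)
    print(f"Web search baseline: {baseline:.1f} MWh/day")
    for multiplier in AI_MULTIPLIER_RANGE:
        total = daily_energy_mwh(QUERIES_PER_DAY, BASELINE_SEARCH_WH * multiplier)
        print(f"AI queries at {multiplier}x: {total:.1f} MWh/day")
    print(f"Data center growth: {growth_factor(POWER_2022_MW, POWER_2023_MW):.2f}x")
```

The absolute totals depend entirely on the assumed baseline and volume; the point is that the multiplier carries straight through to the daily total.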

The Invisible Thirst: Water Consumption

We often talk about electricity, but AI is also incredibly thirsty. Data centers generate an immense amount of heat. To keep those thousands of GPUs from melting, operators use advanced cooling systems. Many of these systems rely on evaporating water to pull heat away from the hardware.
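The physics behind evaporative cooling gives a feel for the volumes involved. The sketch below is an idealized estimate, not a model of any real facility: it assumes a latent heat of vaporization of about 2.45 MJ per kilogram of water (a typical value near room temperature) and lets you choose what fraction of the heat is removed by evaporation. The function and parameter names are hypothetical.

```python
JOULES_PER_KWH = 3.6e6

# Assumption: latent heat of vaporization of water near room temperature,
# roughly 2.45 MJ per kilogram.
LATENT_HEAT_J_PER_KG = 2.45e6


def liters_evaporated(heat_kwh: float, evaporative_fraction: float = 1.0) -> float:
    """Estimate liters of water evaporated to carry away `heat_kwh` of heat.

    `evaporative_fraction` is the share of heat removed by evaporation
    (1.0 means all of it, which is an idealized upper bound).
    One kilogram of water is treated as one liter.
    """
    if not 0.0 <= evaporative_fraction <= 1.0:
        raise ValueError("evaporative_fraction must be between 0 and 1")
    heat_joules = heat_kwh * JOULES_PER_KWH * evaporative_fraction
    return heat_joules / LATENT_HEAT_J_PER_KG


if __name__ == "__main__":
    print(f"{liters_evaporated(1.0):.2f} L per kWh of heat (idealized)")
    print(f"{liters_evaporated(1_000.0, 0.5):.0f} L per MWh at 50% evaporative share")
```

Under these assumptions, each kilowatt-hour of heat removed purely by evaporation costs about a liter and a half of water, which is why large facilities add up quickly.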

This creates a localized environmental crisis. When a massive AI data center opens in a region already struggling with drought, it competes with farmers and residents for the same limited water supply. While companies are trying to move toward 'closed-loop' cooling (where water is recycled), the sheer scale of current AI growth is outpacing the infrastructure needed to make these centers water-neutral.

Carbon Footprints and the Fossil Fuel Trap

If you power a data center with coal or gas, the AI's carbon footprint is staggering. Training BLOOM, a large-scale open-access multilingual language model, emitted ten times more greenhouse gases than the average yearly output of a person living in France.

There is also the 'hidden' carbon cost: the devices we use to access these models. Research indicates that user terminals (your laptop, smartphone, or tablet) account for 25% to 45% of the total carbon footprint of some AI models. This means the pollution isn't just happening at the data center; it's happening in your hand.

The biggest hurdle is the stability of the power grid. Renewable energy (energy from sources that are not depleted when used, such as wind and solar power) is often intermittent. AI models, however, need a constant, unwavering stream of power. Because wind and solar can fluctuate, many companies are forced to rely on fossil fuel-based plants to ensure their AI doesn't go offline, effectively cancelling out their 'green' promises.

Hardware Waste and Rare Earth Mining

The AI race is a hardware race. To stay competitive, companies are constantly upgrading to the latest, fastest chips. This leads to a brutal cycle of obsolescence. High-performance computing components have a short lifespan before they are replaced by a newer, more efficient version. The result is a mountain of electronic waste (e-waste) that is difficult to recycle.

Furthermore, these chips require rare earth minerals. The extraction of these materials often involves destructive mining practices that strip the land and pollute local water sources. We are essentially trading geological stability for faster chatbots.

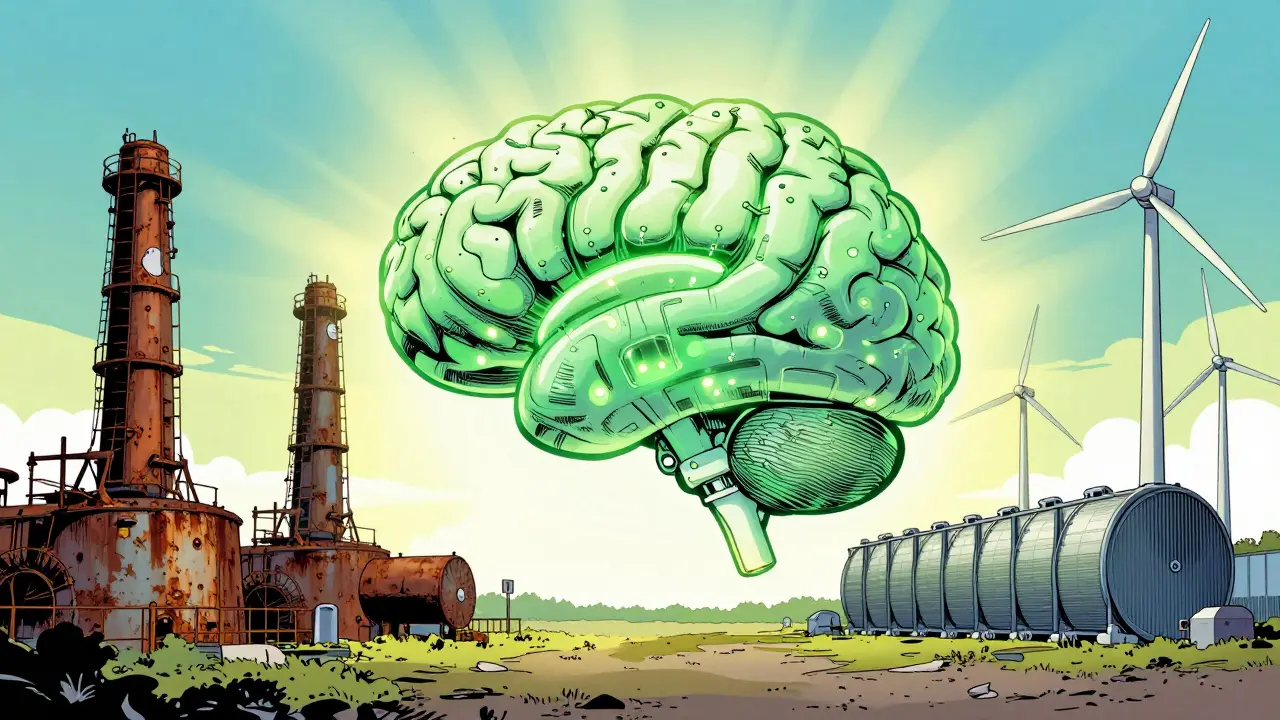

Can We Fix It? Paths to Greener AI

It isn't all doom and gloom. There are real technical pivots that could lower the impact. One promising area is the move toward neuromorphic chips, computer chips designed to mimic the neural structure of the human brain to process information more efficiently. These, and other AI-specific accelerators, could drastically reduce the energy needed per calculation.

Another strategy is model optimization: making models smaller and more efficient so they don't require as much compute. However, there is a catch called the 'rebound effect.' When we make AI cheaper and faster to run, people simply use it more, which can end up increasing the total energy consumption anyway.
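The rebound effect is easy to see with toy numbers. In the sketch below, every value is hypothetical and in arbitrary units: per-query energy is halved, but usage triples. The helper `breakeven_usage_growth` is an illustrative name for the point at which extra usage exactly cancels the efficiency gain.

```python
def total_energy(energy_per_query: float, queries: float) -> float:
    """Total energy is per-query cost times the number of queries."""
    return energy_per_query * queries


def breakeven_usage_growth(efficiency_saving: float) -> float:
    """Usage growth factor at which total energy is unchanged.

    `efficiency_saving` is the fractional cut in per-query energy,
    for example 0.5 for a model that needs half the energy per query.
    """
    if not 0.0 <= efficiency_saving < 1.0:
        raise ValueError("efficiency_saving must be in [0, 1)")
    return 1.0 / (1.0 - efficiency_saving)


if __name__ == "__main__":
    # Hypothetical scenario in arbitrary units.
    before = total_energy(energy_per_query=1.0, queries=1_000)
    after = total_energy(energy_per_query=0.5, queries=3_000)
    print(f"Before optimization: {before:.0f} units")
    print(f"After optimization:  {after:.0f} units")
    print(f"Break-even usage growth: {breakeven_usage_growth(0.5):.1f}x")
```

In this toy scenario the model is twice as efficient, yet total consumption rises by half, because usage grew past the 2x break-even point.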

To actually move the needle, we need a combination of the following:

- Strict Guardrails: Integrating sustainability metrics directly into how models are developed.

- Grid Modernization: Building massive energy storage (like giant batteries) to make renewable energy stable enough for data centers.

- Responsible Deployment: Encouraging users to use AI only when necessary rather than for trivial tasks.

Why does AI use so much more energy than a Google search?

A Google search mostly retrieves existing information from an index. Generative AI, however, must 'think' and generate a unique response token by token, activating billions of parameters across a massive neural network for every single word it produces. This requires far more computational power and electricity.

Is there a way to use AI more sustainably?

Yes. You can use smaller, specialized models instead of massive general-purpose ones. Additionally, avoiding unnecessary requests and supporting companies that use verified carbon-free energy sources helps reduce the overall footprint.

What is the 'rebound effect' in AI efficiency?

The rebound effect occurs when an increase in efficiency makes a resource cheaper or more accessible, leading to a surge in demand that wipes out the initial energy savings. In AI, as models become more efficient to run, they are integrated into more apps and services, increasing total energy use.

How does water cooling work in data centers?

Data centers use water to absorb heat from servers. In evaporative cooling, water is evaporated to cool the air around the hardware. This process consumes vast quantities of water, which is then lost to the atmosphere, creating significant strain on local water tables.

Will future AI hardware solve the energy problem?

Hardware like neuromorphic chips and optical processors could significantly lower energy use. However, hardware alone isn't a silver bullet; we also need a transition to stable renewable energy and more mindful model design to see a net decrease in environmental impact.

Next Steps for AI Users and Developers

If you're a developer, start by auditing your model's compute requirements. Could a smaller, distilled model do the job just as well? Using 'sparse' models that only activate a fraction of their parameters can save a surprising amount of energy.
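A rough first-pass audit might compare compute per generated token. The sketch below uses a common rule of thumb, roughly 2 floating-point operations per active parameter per token for a forward pass; that rule, the 70-billion-parameter size, and the 25% active fraction are all assumptions for illustration. Compute is only a proxy for energy, since memory traffic and hardware utilization also matter.

```python
def flops_per_token(total_params: float, active_fraction: float = 1.0) -> float:
    """Approximate forward-pass FLOPs per token.

    Rule-of-thumb assumption: about 2 FLOPs per active parameter per token.
    `active_fraction` is the share of parameters used for each token
    (1.0 for a dense model, lower for a sparse one).
    """
    if not 0.0 < active_fraction <= 1.0:
        raise ValueError("active_fraction must be in (0, 1]")
    return 2.0 * total_params * active_fraction


def compute_saving(dense_flops: float, sparse_flops: float) -> float:
    """Fraction of per-token compute avoided by the sparse model."""
    return 1.0 - sparse_flops / dense_flops


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only for illustration.
    dense = flops_per_token(total_params=70e9)
    sparse = flops_per_token(total_params=70e9, active_fraction=0.25)
    print(f"Dense:  {dense:.2e} FLOPs/token")
    print(f"Sparse: {sparse:.2e} FLOPs/token")
    print(f"Estimated compute saving: {compute_saving(dense, sparse):.0%}")
```

The same function works for a distilled model: pass its smaller parameter count with `active_fraction=1.0` and compare against the original.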

For the everyday user, the best approach is intentionality. Be mindful of the energy cost of a high-resolution AI image or a complex long-form generation. By demanding transparency in carbon and water reporting from AI providers, we can push the industry toward a future where intelligence doesn't come at the cost of the planet.