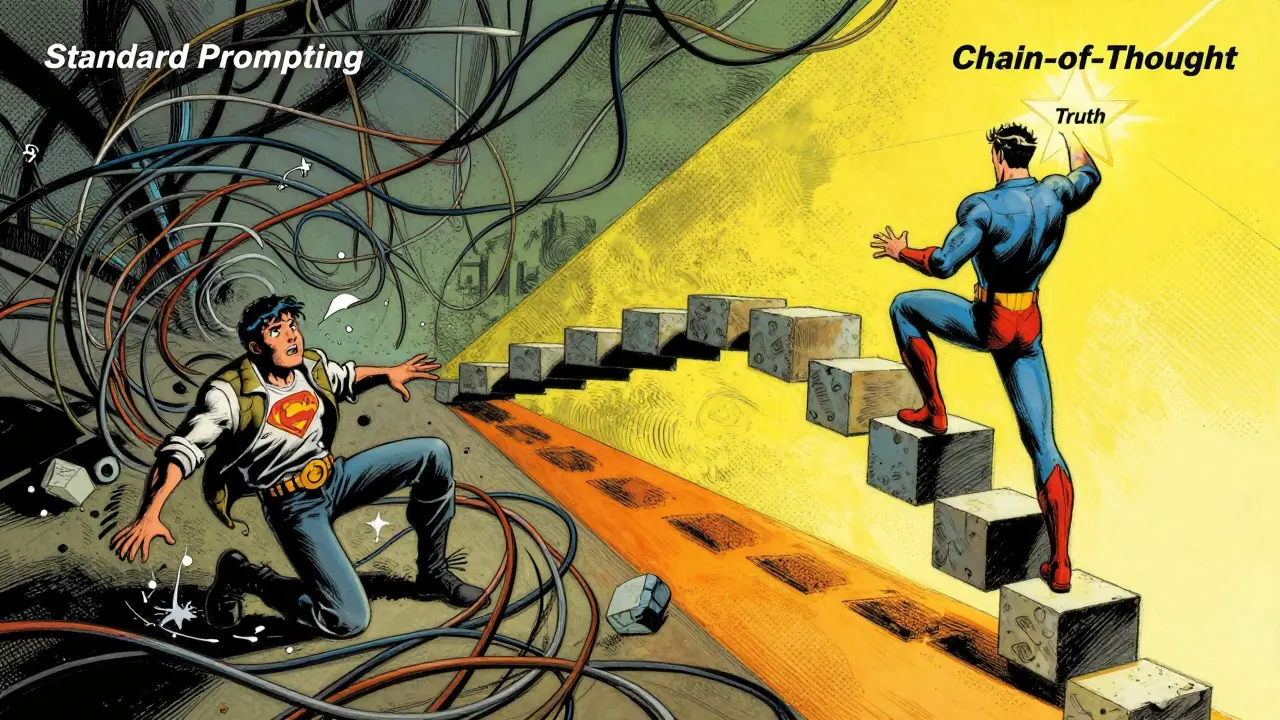

Have you ever asked an AI a tricky question and gotten a confident but completely wrong answer? It happens more often than you’d think. Large language models are great at recalling facts, but they struggle when a problem requires multi-step logic, like solving a math word problem or debugging complex code. This is where Chain-of-Thought (CoT) Prompting comes in.

Instead of demanding a direct answer, CoT prompts the model to "show its work." By forcing the AI to articulate intermediate reasoning steps before concluding, you dramatically improve accuracy on complex tasks. Introduced by Google Research in January 2022, this technique has become a cornerstone of modern prompt engineering. In this guide, we’ll break down how Chain-of-Thought prompting works, why it matters, and how you can implement it effectively in your own projects without burning through your API budget.

How Chain-of-Thought Prompting Works

To understand CoT, imagine asking a human student to solve a difficult equation. If you just ask for the final number, they might guess. But if you say, "Walk me through your thinking step by step," they are far more likely to catch their own errors along the way. Chain-of-Thought prompting mimics this cognitive process for large language models (LLMs).

Standard prompting relies on the model’s next-token prediction to jump straight to an answer. CoT restructures the input so the model writes out intermediate steps first, and each generated step then becomes context for the next one. According to NVIDIA’s technical documentation from November 2024, appending a simple instruction such as "Describe your reasoning in steps" is enough to elicit this sequential output. The model doesn’t just retrieve information; it produces a visible line of reasoning that the final answer is conditioned on.

A CoT response typically moves through four stages:

- Problem Understanding: The model interprets the initial query.

- Intermediate Reasoning: The model generates sequential logical steps (the "chain").

- Final Answer: The model synthesizes the reasoning into a conclusion.

- Feedback Loop: Optional refinement where the model checks its own logic.

This is different from prompt chaining, which splits a task across several separate prompts and feeds each output into the next call. CoT produces the entire reasoning sequence in a single response, reducing interaction overhead by roughly 34% for complex analytical tasks.
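To make the single-response flow concrete, here is a minimal Python sketch. The `Answer:` line convention and the helper names `build_cot_prompt` and `split_reasoning_and_answer` are assumptions made for illustration, not part of any provider's API, and the sample response is hand-written rather than real model output.

```python
import re
from typing import List, Optional, Tuple

# Assumed convention: the model is asked to end with a line starting "Answer:".
COT_SUFFIX = (
    "Describe your reasoning in steps, then give the result "
    "on a final line starting with 'Answer:'."
)


def build_cot_prompt(question: str) -> str:
    """Wrap a question so the model reasons first and answers last."""
    return f"{question.strip()}\n\n{COT_SUFFIX}"


def split_reasoning_and_answer(response: str) -> Tuple[List[str], Optional[str]]:
    """Separate the reasoning lines (the chain) from the final answer line."""
    steps: List[str] = []
    answer: Optional[str] = None
    for raw_line in response.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        match = re.match(r"answer:\s*(.+)$", line, flags=re.IGNORECASE)
        if match:
            answer = match.group(1).strip()
        else:
            steps.append(line)
    return steps, answer


# Hand-written stand-in for a model response (not real output).
sample_response = "Step 1: 17 * 3 = 51\nStep 2: 51 is the total.\nAnswer: 51"
steps, answer = split_reasoning_and_answer(sample_response)
print(len(steps), "reasoning steps, final answer:", answer)
```

Keeping the chain and the answer separate like this makes it easy to log the reasoning for review while passing only the answer downstream.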

Types of Chain-of-Thought Prompting

Not all CoT implementations are created equal. Depending on your task complexity and available data, you’ll choose one of three main approaches. Each has distinct trade-offs regarding effort, cost, and accuracy.

| Type | Description | Best For | Effort Level |
|---|---|---|---|
| Zero-Shot CoT | Appending generic instructions like "Let's think step by step" without examples. | Simpler tasks, quick experiments. | Low (0.5-2 hours) |
| Few-Shot CoT | Providing 2-5 example reasoning chains in the prompt context. | Complex reasoning, specific domain formats. | Medium (3-8 hours) |
| Auto-CoT | Model determines optimal steps automatically without manual examples. | Scalable applications, diverse queries. | High (initial setup) |

Zero-Shot CoT is the easiest entry point. You simply add a phrase like "Let's think step by step" or "Think carefully before answering" to your prompt. It is less precise, but it requires no manual curation. Few-Shot CoT is the gold standard for accuracy. By showing the model exactly how you want it to reason, using 2 to 5 high-quality examples, you give it a pattern to imitate. However, crafting these examples takes time. Automatic Chain-of-Thought (Auto-CoT), introduced by Zhang et al. in October 2022, bridges the gap. It builds the demonstrations automatically: it samples a diverse set of questions and generates a reasoning chain for each one with Zero-Shot CoT. This maintains about 89.3% of the effectiveness of manually crafted few-shot prompts while reducing engineering overhead.
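The difference between the first two approaches is easiest to see in the prompt text itself. The sketch below is one possible way to assemble both kinds of prompt in Python; the `WorkedExample` class, the function names, and the `Q:`/`A:` layout are illustrative assumptions, and the worked examples are made up for the demo.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WorkedExample:
    question: str
    reasoning: str  # the hand-written chain you want the model to imitate
    answer: str


def zero_shot_cot(question: str) -> str:
    """Zero-shot: no examples, just a generic reasoning trigger."""
    return f"Q: {question}\nA: Let's think step by step."


def few_shot_cot(question: str, examples: List[WorkedExample]) -> str:
    """Few-shot: prepend 2-5 worked examples, then leave the new answer open."""
    if not 2 <= len(examples) <= 5:
        raise ValueError("few-shot CoT here expects 2 to 5 worked examples")
    blocks = [
        f"Q: {ex.question}\nA: {ex.reasoning} The answer is {ex.answer}."
        for ex in examples
    ]
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


# Made-up demonstrations for illustration only.
examples = [
    WorkedExample(
        "Tom has 3 apples and buys 2 more. How many does he have now?",
        "He starts with 3. Buying 2 more gives 3 + 2 = 5.",
        "5",
    ),
    WorkedExample(
        "A book costs $4. How much do 3 books cost?",
        "Each book is $4. Three books cost 3 * 4 = 12 dollars.",
        "$12",
    ),
]
print(few_shot_cot("A pen costs $2. How much do 6 pens cost?", examples))
```

The few-shot builder ends with an open `A:` so the model continues in the same reasoning style as the demonstrations.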

Performance Gains and Limitations

Why bother with the extra tokens? The numbers speak for themselves. A comprehensive analysis published in the Journal of Artificial Intelligence Research (October 2024) found that CoT outperforms standard prompting by an average of 39.7% on multi-step mathematical problems. On commonsense reasoning tasks, it improved results by 27.3%. Dr. Percy Liang, Director of Stanford's Center for Research on Foundation Models, called CoT "one of the most significant practical advances in making LLMs transparent since instruction tuning."

However, there’s a catch. CoT isn’t magic, and it comes with costs:

- Token Usage: Expect a 35-60% increase in token consumption compared to standard prompting. Anthropic’s Q2 2024 API analytics confirmed this surge.

- Latency: Responses take longer. NVIDIA benchmarks show an average delay of 220-350ms per request.

- Reasoning Hallucination: The model can generate plausible-sounding but incorrect logic. A University of Washington study (September 2024) noted error rates of 18.7% in CoT implementations versus 12.3% in standard prompting for certain complex tasks.
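To see what the token overhead means for a budget, a quick back-of-the-envelope calculation helps. This sketch applies the 35-60% range quoted above to a baseline token count; the baseline of 1,000 tokens and the price of $0.01 per 1,000 tokens are hypothetical placeholders, so substitute your provider's real numbers.

```python
from typing import Tuple


def cot_token_range(
    baseline_tokens: int, low: float = 0.35, high: float = 0.60
) -> Tuple[int, int]:
    """Apply the 35-60% overhead range to a baseline token count."""
    return (
        round(baseline_tokens * (1 + low)),
        round(baseline_tokens * (1 + high)),
    )


def cost_usd(tokens: int, price_per_1k_tokens: float) -> float:
    """Convert a token count into a dollar cost at a flat per-1k price."""
    return tokens / 1000 * price_per_1k_tokens


BASELINE_TOKENS = 1000   # hypothetical tokens per request without CoT
PRICE_PER_1K = 0.01      # hypothetical price in USD, not a real rate card

low_tokens, high_tokens = cot_token_range(BASELINE_TOKENS)
print(f"Without CoT: ${cost_usd(BASELINE_TOKENS, PRICE_PER_1K):.4f} per request")
print(f"With CoT:    ${cost_usd(low_tokens, PRICE_PER_1K):.4f} "
      f"to ${cost_usd(high_tokens, PRICE_PER_1K):.4f} per request")
```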

Also, keep in mind that model size matters. CoT shines with large models. Research shows that models with fewer than 10 billion parameters see minimal improvement (average 6.8%), while those exceeding 50 billion parameters demonstrate dramatic gains (average 42.1%). If you’re using a small local model, CoT might not be worth the extra compute.

Implementing CoT: Best Practices

Getting Chain-of-Thought right requires more than just adding words to a prompt. Here are practical strategies to maximize reliability and minimize waste.

1. Control the Length of the Chain

Unrestricted reasoning can lead to "reasoning drift," where the model loses track of the original question. Stanford research suggests limiting chains to 3-7 steps. Use an explicit constraint in your system prompt, such as "Limit your explanation to five logical steps." This keeps responses concise and focused.
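A prompt constraint is only a request, so it can be worth checking the response as well. The following sketch pairs a step-limited system prompt with a simple counter for numbered steps; the function names and the assumption that the model numbers its steps as `1.`, `2.`, and so on are illustrative, and the sample response is hand-written.

```python
import re


def step_limited_system_prompt(max_steps: int = 5) -> str:
    """Build a system prompt that caps the length of the reasoning chain."""
    return (
        "Reason step by step. "
        f"Limit your explanation to {max_steps} logical steps, "
        "numbered 1., 2., 3., and so on, then state the final answer."
    )


def count_numbered_steps(response: str) -> int:
    """Count lines that start with a number followed by '.' or ')'."""
    return len(re.findall(r"^[ \t]*\d+[.)][ \t]", response, flags=re.MULTILINE))


def exceeds_step_budget(response: str, max_steps: int = 7) -> bool:
    """Flag responses whose chain runs past the allowed number of steps."""
    return count_numbered_steps(response) > max_steps


# Hand-written stand-in for a model response (not real output).
sample = "1. Read the question.\n2. Compute 17 * 3 = 51.\n3. Check the units.\nAnswer: 51"
print(count_numbered_steps(sample), exceeds_step_budget(sample))
```

A response that exceeds the budget can be retried with a stricter limit or flagged for review.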

2. Add Verification Steps

To combat hallucinations, instruct the model to verify its own work. OpenAI’s July 2024 best practices guide recommends adding a verification prompt at each step. For example: "After calculating step 1, check if the result makes sense before proceeding to step 2." This self-check gives the model a chance to catch an arithmetic or logic slip before it propagates through the rest of the chain.
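If your task has a known sequence of steps, the verification instructions can be generated rather than typed by hand. This is a rough sketch of that idea; `with_verification` and the exact wording of the check lines are assumptions for illustration, not a format any provider prescribes.

```python
from typing import List


def with_verification(task: str, steps: List[str]) -> str:
    """Interleave each step with an instruction to sanity-check its result."""
    lines = [task.strip(), "", "Work through these steps in order:"]
    for i, step in enumerate(steps, start=1):
        lines.append(f"{i}. {step}")
        if i < len(steps):
            next_action = f"proceeding to step {i + 1}"
        else:
            next_action = "giving the final answer"
        lines.append(
            f"   After step {i}, check if the result makes sense before {next_action}."
        )
    return "\n".join(lines)


prompt = with_verification(
    "Calculate the total cost of 12 items at $3.50 each with 8% tax.",
    ["Compute the subtotal.", "Compute the tax.", "Add them together."],
)
print(prompt)
```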

3. Use Self-Consistency for Critical Tasks

For high-stakes applications like financial analysis or legal review, don’t rely on a single chain. Microsoft Research’s September 2024 study verified that generating multiple reasoning chains and selecting the most frequent answer (Self-Consistency CoT) improves accuracy by 12-18%. Yes, it adds 35-60% to processing time, and every extra chain is a separate generation you pay for, but the reliability gain is often essential.
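The voting step itself is only a few lines. In this sketch, `sample_answer` is a hypothetical callable standing in for "run one CoT generation and extract its final answer"; here it is faked with a fixed list so the example runs without any API.

```python
from collections import Counter
from typing import Callable, Tuple


def self_consistent_answer(
    sample_answer: Callable[[], str], n_chains: int = 5
) -> Tuple[str, float]:
    """Sample several chains and return the most frequent answer and its vote share."""
    if n_chains < 1:
        raise ValueError("n_chains must be at least 1")
    # Normalise lightly so "51" and " 51 " count as the same vote.
    answers = [sample_answer().strip().lower() for _ in range(n_chains)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n_chains


# Fake sampler for the demo: five pre-written "final answers", not real model output.
fake_answers = iter(["51", "51", "54", " 51 ", "50"])
answer, agreement = self_consistent_answer(lambda: next(fake_answers), n_chains=5)
print(answer, agreement)
```

The vote share doubles as a rough confidence signal: a low share suggests the chains disagree and the question may deserve human review.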

4. Tailor Prompts to Your Model Provider

Not all models respond to CoT equally. Developers report that Anthropic’s Claude models handle CoT natively with minimal tuning. Meta’s Llama 3, however, often requires significant prompt adjustments to produce coherent step-by-step reasoning. Test thoroughly across providers before deploying to production.

The Future of Structured Reasoning

Chain-of-Thought is evolving rapidly. We’re moving beyond linear chains into more complex structures. Tree-of-Thought (ToT) prompting, introduced by researchers at Princeton University and Google DeepMind in May 2023, lets the model branch into multiple reasoning paths, evaluate them, and backtrack, showing 28% higher accuracy on planning tasks. Graph-of-Thought (GoT) structures reasoning as interconnected nodes, improving performance on knowledge-intensive tasks by 34%.

Regulatory landscapes are also shifting. The EU AI Office’s October 2024 guidance mandates audit trails for complete reasoning chains in high-risk applications. This means companies must store and document the "why" behind every AI decision, not just the outcome. With 78% of organizations now incorporating some form of CoT (up from 32% in early 2024), compliance is becoming a key driver of adoption.

As we move toward 2026, expect CoT to become a default feature rather than a specialized technique. Tools like Verifiable CoT from Anthropic will automatically cross-check reasoning against trusted sources, reducing the burden on developers. The goal remains the same: making AI reasoning transparent, reliable, and understandable to humans.

What is the difference between Zero-Shot and Few-Shot Chain-of-Thought?

Zero-Shot CoT uses generic instructions (e.g., "think step by step") without providing examples, making it easier to implement but less accurate. Few-Shot CoT includes 2-5 specific examples of desired reasoning patterns in the prompt, leading to higher accuracy but requiring more time to craft and maintain.

Does Chain-of-Thought prompting work well with small language models?

Generally, no. Research indicates that models with fewer than 10 billion parameters show minimal improvement (around 6.8%) with CoT. The technique is most effective with larger models (50+ billion parameters) that have sufficient capacity to simulate complex reasoning chains.

How much does CoT increase API costs?

Expect a 35-60% increase in token usage due to the verbose nature of step-by-step explanations. AWS case studies from late 2024 reported average cost increases of 42% per query for enterprise implementations. You can contain the increase by capping the number of reasoning steps and trimming unnecessary detail from the chain.

What is "reasoning hallucination" in CoT?

Reasoning hallucination occurs when the model generates a logical-looking chain of steps that contains factual errors or non-sequiturs. Because the steps sound plausible, users may trust the final answer even if the underlying logic is flawed. Verification prompts and self-consistency methods help mitigate this risk.

Is Chain-of-Thought prompting required by law in Europe?

Not by name. The legislation never mentions "Chain-of-Thought," but the EU AI Office’s October 2024 guidance requires audit trails for reasoning in high-risk AI systems. In practice, developers of those systems must preserve and document the logical steps the model took, which aligns closely with CoT principles.