Imagine your marketing team building a customer-facing app in an afternoon using nothing but natural language prompts. It works. It looks great. But does it leak data? Can you audit who approved its logic? This is the reality of vibe coding, an AI-driven software development approach where developers describe desired functionalities in natural language prompts, with AI systems generating corresponding code. As this method accelerates from hobbyist experiments to enterprise production lines, the old rules of software governance are breaking down.

The core problem isn't that vibe coding is dangerous; it's that traditional governance was built for human-written code with clear accountability chains. When an AI generates thousands of lines of code in seconds, asking a senior engineer to manually review every commit is not feasible. Yet ignoring the risk invites brittle, undocumented tools that cannot be maintained or trusted. The solution lies in tiered governance, a framework for managing risk in AI-assisted software development by aligning control mechanisms with the potential impact and risk profile of different components. This approach matches strict controls to high-risk areas while allowing speed in low-risk zones.

The Three Layers of Trust Architecture

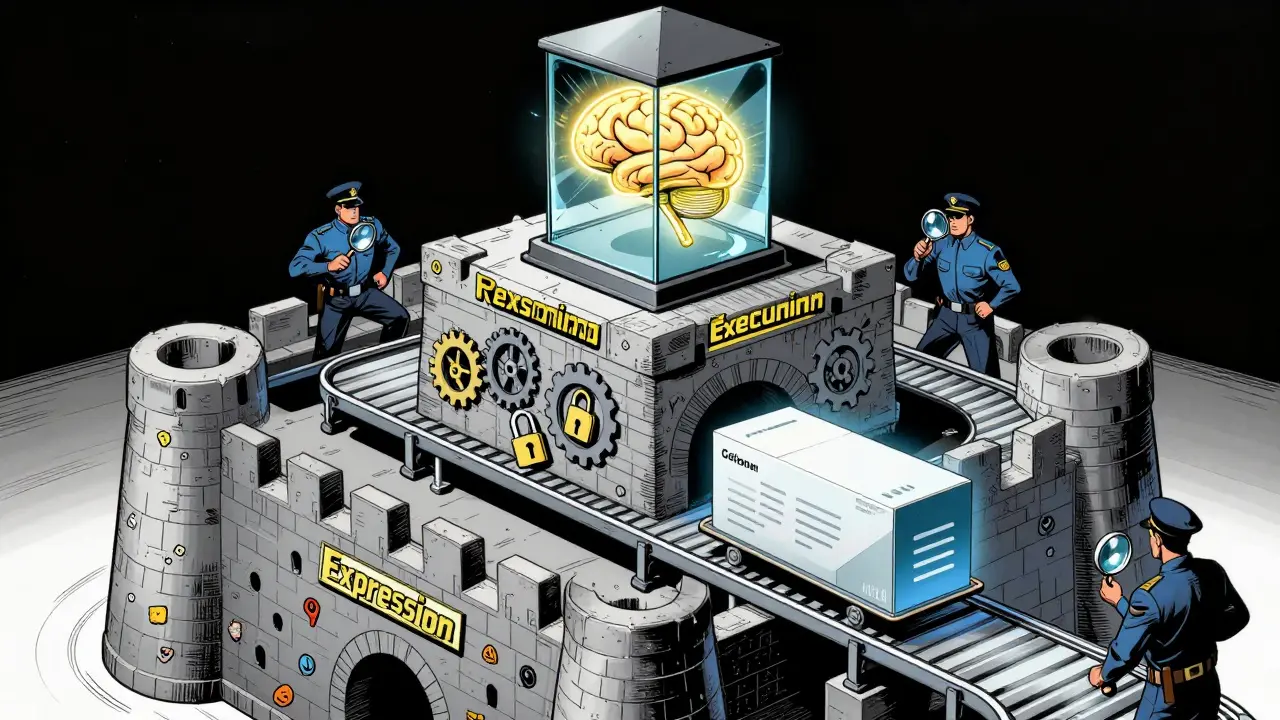

To govern vibe-coded apps effectively, you need more than just a checklist; you need a structural shift. Research into enterprise deployments highlights three integrated platforms that form the backbone of this architecture. None can stand alone because each protects a different dimension of trust.

- The Vibe Coding Platform (Expression): This is where the work begins. It allows non-developers to sketch how work should look and feel using natural language and drag-and-drop logic. Here, the AI does the heavy lifting invisibly. The goal is accessibility and rapid ideation.

- The Workflow Platform (Execution): Once the idea is formed, it needs repeatable motion that can be verified and audited. This layer ensures consistent behavior and accountability. It applies role-based permissions, policy checks, and human approval gates before any business action occurs.

- The AI Workspace (Reasoning): This is the black box made transparent. It logs the prompts, models, and evidence that guide intelligent behavior. Every time a vibe-coded app calls an AI function, it happens here, creating a reviewable trail of why the AI decided what it did.

The connective tissue between these layers is critical. When a vibe-coded application proposes a change, it doesn't just happen. It flows through the AI Workspace for logging, then through the Workflow Platform for permission checks. The AI can propose or decide, but it can never act outside its governed perimeter. This explicit boundary between AI reasoning and operational action is where safety lives.
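The flow described above can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the class names `AIWorkspace` and `WorkflowPlatform` mirror the layers in the text, while `Proposal`, `submit`, and the permission table are hypothetical names introduced for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Proposal:
    actor: str   # the vibe-coded app (or user) asking for the action
    action: str  # the business action the AI proposes
    prompt: str  # the prompt that led to the proposal
    model: str   # model identifier (hypothetical value in this sketch)


@dataclass
class AIWorkspace:
    """Reasoning layer: keeps a reviewable trail of every proposal."""
    trail: list = field(default_factory=list)

    def log(self, proposal: Proposal) -> int:
        self.trail.append(proposal)
        return len(self.trail) - 1  # list index doubles as an audit reference


@dataclass
class WorkflowPlatform:
    """Execution layer: role-based permission check before any action."""
    permissions: dict  # actor -> set of actions that actor may trigger

    def is_allowed(self, proposal: Proposal) -> bool:
        return proposal.action in self.permissions.get(proposal.actor, set())


def submit(proposal: Proposal, workspace: AIWorkspace,
           workflow: WorkflowPlatform,
           execute: Callable[[Proposal], None]) -> str:
    """Log first, check permissions second, act only if both succeed."""
    audit_id = workspace.log(proposal)  # logged even when later blocked
    if not workflow.is_allowed(proposal):
        return f"blocked (audit #{audit_id})"
    execute(proposal)
    return f"executed (audit #{audit_id})"
```

The ordering is the point of the sketch: a blocked proposal still leaves a trail entry, so reviewers can see what the AI tried to do as well as what it actually did.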

Risk Tiering: Not All Code Is Created Equal

Applying the same level of scrutiny to an internal dashboard widget as you do to a payment processing module is inefficient and stifling. Tiered governance embeds risk assessment directly into the system rather than treating it as a side project. Every validation flow maps to a decision ladder based on impact level.

| Risk Tier | Example Use Case | Required Controls | Human Review Level |
|---|---|---|---|
| Tier 1: Low Risk | Internal note-taking app, personal productivity tool | Automated syntax checks, basic security scan | Lightweight or none if confidence is high |
| Tier 2: Medium Risk | Customer support chatbot, internal reporting dashboard | Behavioral testing, API rate limiting, data privacy check | Spot-check by technical lead |
| Tier 3: High Risk | Patient records access, financial transaction processing | Full code audit, penetration testing, compliance verification | Mandatory expert review + legal sign-off |

This rhythm balances automation with judgment. In Tier 1, you want velocity. In Tier 3, you want certainty. The governance framework encodes its risk-assessment decisions as technical controls, such as automated gates that block deployment when specific compliance flags aren't met, rather than relying on manual memory.
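One way to picture such an automated gate is a lookup from tier to required checks. The sketch below is illustrative only: the check names are hypothetical identifiers derived from the table above, and treating each tier's list as standalone rather than cumulative is an assumption.

```python
from enum import IntEnum


class Tier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical check identifiers, one set per tier, taken from the table.
REQUIRED_CHECKS = {
    Tier.LOW: {"syntax_check", "basic_security_scan"},
    Tier.MEDIUM: {"behavioral_tests", "api_rate_limiting",
                  "data_privacy_check", "tech_lead_spot_check"},
    Tier.HIGH: {"full_code_audit", "penetration_test",
                "compliance_verification", "expert_review",
                "legal_sign_off"},
}


def missing_checks(tier: Tier, passed: set) -> set:
    """Return the required checks that have not passed yet."""
    return REQUIRED_CHECKS[tier] - set(passed)


def can_deploy(tier: Tier, passed: set) -> bool:
    """The gate opens only when nothing required is missing."""
    return not missing_checks(tier, passed)
```

For example, `can_deploy(Tier.HIGH, {"full_code_audit", "penetration_test"})` stays closed until compliance verification, expert review, and legal sign-off are also recorded.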

Policy-as-Code: Making Rules Executable

One of the biggest failures in early AI adoption was documenting policies in PDFs that no one read. For vibe-coded apps, policy-as-code (translating governance requirements into executable code rather than documentation) is not just a best practice; it's a necessity.

Instead of writing a rule that says "all user data must be encrypted," you write a script that scans the generated codebase and fails the build if encryption libraries aren't present. This shifts governance from a post-deployment audit to a pre-deployment guardrail. It also solves the scale problem. You can have hundreds of teams vibe-coding simultaneously, and the policy-as-code engine enforces consistency across all of them without needing a hundred security engineers.
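As a rough sketch of the encryption rule just described, the script below parses generated Python sources with the standard `ast` module and returns a failing exit code when no approved encryption library is imported. The approved list (`cryptography`, `nacl`) and the function names are assumptions for illustration. An import only shows that a library is present, not that data is actually encrypted, so a real policy would need deeper checks.

```python
import ast

# Assumption: the organization's approved encryption packages.
APPROVED_CRYPTO = {"cryptography", "nacl"}


def imported_modules(source: str) -> set[str]:
    """Collect the top-level package names imported by a source file."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module:
            found.add(node.module.split(".")[0])
    return found


def uses_approved_crypto(files: dict[str, str]) -> bool:
    """True if any file in the codebase imports an approved library."""
    return any(imported_modules(src) & APPROVED_CRYPTO
               for src in files.values())


def enforce(files: dict[str, str]) -> int:
    """Return a process exit code: 0 passes the build, 1 fails it."""
    if uses_approved_crypto(files):
        return 0
    print("policy violation: no approved encryption library imported")
    return 1
```

In a pipeline, a wrapper would read the generated files into the `files` mapping (filename to source text) and pass the result of `enforce` to `sys.exit`, so a violation stops the build before deployment.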

This approach also helps with secrets management. A common risk in vibe coding is that developers might accidentally paste API keys or credentials into their prompts. Policy-as-code tools can detect patterns resembling secrets in both the prompt history and the generated output, flagging them immediately before they reach production.
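A secrets check of this kind can be approximated with a few regular expressions. The patterns below are illustrative and far from exhaustive: the `AKIA` prefix matches the familiar shape of AWS access key IDs, while the other two patterns and the function name `find_secrets` are assumptions made for this sketch.

```python
import re

# Illustrative patterns only; dedicated scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"(?i)\b(api[_-]?key|secret|token|password)\b\s*[:=]\s*"
        r"['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern name) for every suspicious line."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits
```

Because the function takes plain text, the same call can be run over the prompt history and over the generated output, for instance `find_secrets(prompt) + find_secrets(generated_code)`, with any non-empty result blocking the pipeline.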

Behavioral Monitoring Over Traditional QA

Traditional software quality assurance focuses on code quality: Are there bugs? Does it crash? For vibe-coded applications, this is insufficient. Generated code can be syntactically perfect but logically flawed or biased. Therefore, monitoring must shift toward behavioral metrics.

You need to track:

- Task Completion Rates: Does the AI-generated feature actually help users finish their jobs?

- Error Recovery Patterns: How does the system behave when it encounters unexpected input?

- User Sentiment: Are users frustrated by the interface or confused by the outputs?

- Time-to-Value: How quickly does the new feature deliver benefit compared to traditional development?

Staged rollouts are key here. Release vibe-coded features to small user cohorts first. Track detailed interaction patterns and compare how different user groups engage with the AI-generated features. If a revenue team prototypes a renewal-risk cockpit, monitor whether the signals it surfaces are verifiable and accurate before rolling it out to the entire sales organization. Comparing cohorts, and comparing AI-generated features against traditionally coded ones, reveals whether the code performs consistently across contexts.
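A staged rollout with a behavioral check might look like the sketch below. It assigns users to a cohort deterministically by hashing, then compares task completion rates between the cohort and a baseline group. The function names, the event shape, and the 5-point tolerance are all assumptions chosen for illustration.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: int) -> bool:
    """Deterministically place a user in the first `percent` of 100 buckets."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode()).hexdigest()
    return int(digest, 16) % 100 < percent


def completion_rate(events) -> float:
    """events: iterable of (user_id, completed) pairs."""
    events = list(events)
    if not events:
        return 0.0
    return sum(1 for _, completed in events if completed) / len(events)


def should_expand(cohort_events, baseline_events,
                  max_drop: float = 0.05) -> bool:
    """Expand only if the cohort is not meaningfully worse than baseline."""
    return (completion_rate(cohort_events)
            >= completion_rate(baseline_events) - max_drop)
```

Hashing the feature name together with the user ID keeps each user's assignment stable across sessions while letting different features draw different cohorts.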

The Human-in-the-Loop Verification Cycle

Trust is built through iterative verification, not blind acceptance. Even with tiered governance, humans must remain in the loop, especially for higher-risk tiers. However, the nature of this review changes. Instead of reading every line of code, experts review implementation plans and architectural decisions.

In many modern frameworks, the AI agent generates an implementation_plan.md artifact: a technical blueprint detailing exactly which files will be created or modified and what logic will be used. Users can review these plans, leave comments, or request different approaches (e.g., "use React Query instead of Redux"). The agent adjusts its strategy before proceeding. This planning mode allows for complex architecture discussions, while a "fast mode" handles quick edits. Security operations leads often mock up triage panels that show the model's recommendation alongside the sources it relied on, providing explicit approve or escalate paths.
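The review cycle around a plan can be modeled as a small state holder. This is a hypothetical sketch, not the format any particular agent uses: `ImplementationPlan`, its fields, and `may_proceed` are invented here to show the rule that the agent proceeds only after comments are resolved and a human approves.

```python
from dataclasses import dataclass, field


@dataclass
class ImplementationPlan:
    files: list[str]  # files the agent intends to create or modify
    approach: str     # short description of the chosen approach
    open_comments: list[str] = field(default_factory=list)
    approved: bool = False

    def comment(self, text: str) -> None:
        """A reviewer comment reopens the review."""
        self.open_comments.append(text)
        self.approved = False

    def revise(self, new_approach: str) -> None:
        """The agent adjusts its strategy and clears addressed comments."""
        self.approach = new_approach
        self.open_comments.clear()

    def approve(self) -> None:
        if self.open_comments:
            raise ValueError("resolve open comments before approving")
        self.approved = True


def may_proceed(plan: ImplementationPlan) -> bool:
    """The agent may start editing files only on an approved, clean plan."""
    return plan.approved and not plan.open_comments
```

With this shape, a reviewer's "use React Query instead of Redux" comment blocks execution until the agent calls `revise` and the reviewer calls `approve`.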

Security-Specific Risks in Vibe Coding

Vibe coding introduces unique security vectors that traditional scanning misses. Because the code is generated, it may include obscure libraries or unconventional logic structures that static analysis tools don't recognize. Security-aware code review must be specifically trained on AI-generated patterns.

Furthermore, access controls must be tightened around the vibe coding tools themselves. If a malicious actor gains access to a corporate vibe coding platform, they could generate malicious code at scale, bypassing some traditional detection methods because the code looks "clean" and machine-generated. Environment management must isolate these development environments from production databases to prevent accidental data exfiltration during the generation phase.

What is vibe coding?

Vibe coding is an AI-driven software development approach where developers describe desired functionalities in natural language prompts. The AI system then generates the corresponding code, UI, backend logic, and file structure. It transforms development from writing syntax to directing intent.

Why do we need tiered governance for AI-generated apps?

Traditional governance models were designed for human-written code with clear accountability. Vibe coding creates transparency gaps and accelerates deployment speed beyond manual review capabilities. Tiered governance aligns control intensity with risk levels, ensuring high-security areas are protected while low-risk projects maintain agility.

How does policy-as-code improve AI governance?

Policy-as-code translates governance rules into executable scripts that automatically check generated code against security and compliance standards. This ensures consistent enforcement at scale, prevents human error, and blocks risky deployments before they reach production.

What are the three platforms in tiered governance architecture?

The three platforms are the Vibe Coding Platform (for expression and ideation), the Workflow Platform (for execution and auditing), and the AI Workspace (for reasoning and logging). Together, they create a closed loop of trust, accountability, and transparency.

Is vibe coding safe for enterprise use?

Yes, but only with proper governance. Without controls, it produces brittle and untrusted tools. With tiered governance, policy-as-code, and human-in-the-loop verification, enterprises can safely leverage the speed and democratization of vibe coding while mitigating security and compliance risks.