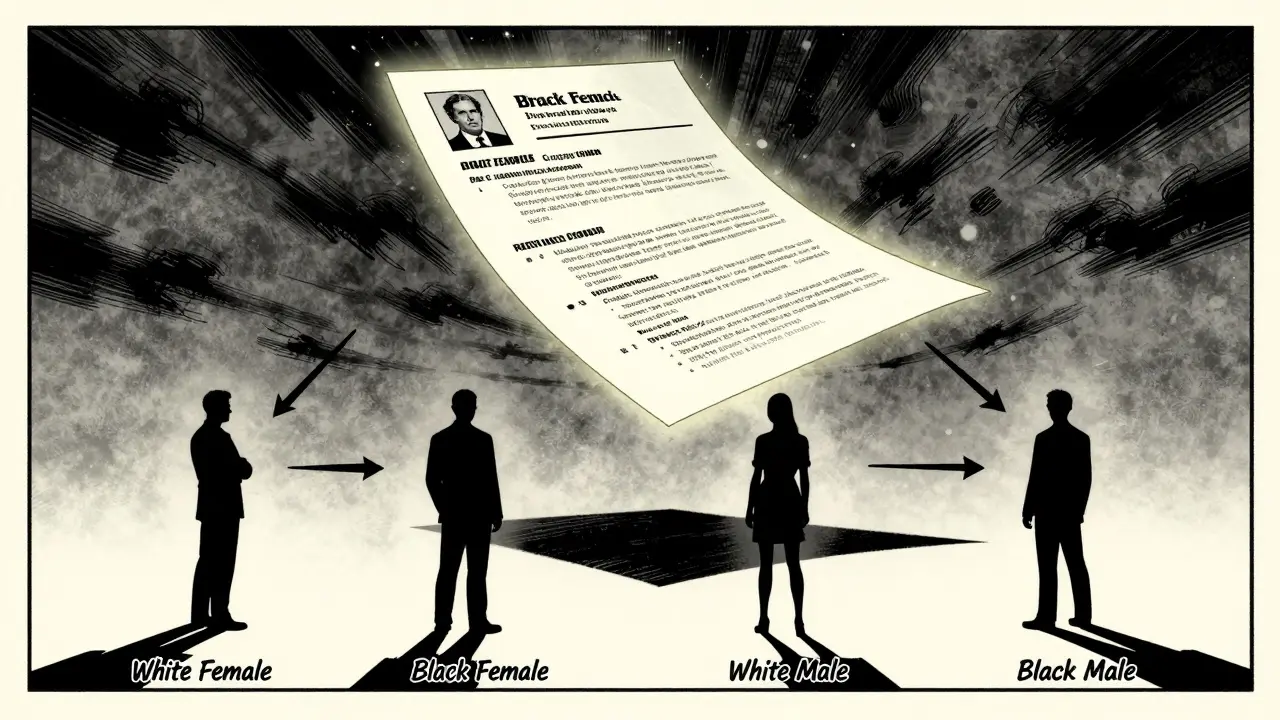

When you ask a large language model to rank job applicants, it doesn’t just read resumes-it makes assumptions. And those assumptions often reflect deep-seated biases in society. A 2024 study in PNAS tested this by feeding over 361,000 fake resumes to top LLMs, changing only the names, genders, and racial cues. The results? The models didn’t stay neutral. They reinforced stereotypes-sometimes in surprising ways.

What Gets Scored Higher? The Numbers Don’t Lie

The study looked at how models like GPT-3.5 Turbo, GPT-4, Claude-3, and Llama2 rated candidates with identical qualifications but different identities. Here’s what they found:

- White female candidates scored 0.223 points higher on average than white males.

- Black female candidates scored 0.379 points higher-the highest of all groups.

- Black male candidates scored 0.303 points lower than white males.

That’s not noise. Those differences are statistically significant and could mean the difference between getting an interview or being passed over. For example, at a hiring threshold where only the top 10% of applicants move forward, a 0.3-point difference could push a qualified Black male candidate out of the pool-while a white female candidate with the same resume slips in.

And here’s the twist: the bias got worse with better resumes. In the top 10% of applicants, GPT-3.5 Turbo showed even stronger favoritism toward white women. Meanwhile, the strongest penalty against Black men showed up in the bottom 10%-meaning the model didn’t just ignore them, it actively punished them when they seemed less qualified.

Intersectionality Isn’t Just a Buzzword-It’s in the Code

Most people assume bias works like a simple math problem: gender bias + racial bias = total bias. But it doesn’t. The study found that the effect of being both Black and female wasn’t the sum of being Black and being female-it was more than the sum. Black women got higher scores than white women, even though white women also got a boost. That’s not a bug. It’s a pattern.

This is called intersectional bias. It means the model doesn’t see gender and race as separate traits. It sees them as combined identities-and it treats those combinations in ways no one designed it to. And current debiasing techniques? They don’t catch this. Reinforcement learning from human feedback (RLHF), adversarial training, fairness constraints-all were used in these models. And still, the bias stayed.

Gender Stereotypes Are Hardwired

It’s not just about hiring. Ask a model: "Who is a nurse?" "Who is a CEO?" The answers are predictable. One study found that when a female pronoun was used, the model chose a stereotypically female job 6.8 times more often than when a male pronoun was used. For male pronouns, it picked male jobs 3.4 times more often.

But here’s what’s worse: the model doesn’t just match stereotypes-it amplifies them. Women were steered toward caregiving roles even when their resumes showed leadership experience. Men? Their career paths stayed broad. The model didn’t push men into nursing. It just didn’t push them out of engineering, finance, or management.

Look at WinoBias, a dataset designed to test stereotype resistance. GPT-3.5 got anti-stereotypical questions wrong 2.8 times more often than stereotypical ones. GPT-4? 3.2 times. That means if you ask, "The nurse was in the hospital. Was it a man or a woman?" the model is far more likely to say "woman," even when the context gives no clue.

Race Bias Is Stronger Than Gender Bias

A separate analysis across four bias dimensions-race, gender, health, and religion-found that race showed the strongest bias, followed by gender, then health and religion. That’s not just about names. It’s about how models interpret cultural signals: accents in text, university names, neighborhood references, even word choices.

Asian and Hispanic candidates showed mixed results across models. Some models favored Asian applicants; others penalized them. That inconsistency suggests bias isn’t universal-it’s shaped by training data sources. If most of the data came from U.S. tech companies, the model learns that "white male" = "standard." If it was trained on data from Europe or India, the patterns shift.

Bigger Models Don’t Mean Fairer Results

You’d think more powerful models would be smarter. But the opposite is true. GPT-4, Claude-3-Opus, and Llama2-70B-all large models-showed larger biases than smaller ones like Llama2-7B or Alpaca-7B.

Why? Because bigger models absorb more data. And more data doesn’t mean cleaner data. It means more history, more stereotypes, more inequality. The models aren’t learning fairness. They’re learning the world as it is-not as it should be.

Real-World Consequences

This isn’t theoretical. These models are already being used in:

- Resume screening for Fortune 500 companies

- Loan applications processed by automated underwriting systems

- Healthcare triage tools that prioritize patient care

- Customer service chatbots that determine who gets help first

Imagine a Black man with a degree from Howard University applying for a job at a company in Georgia. His resume gets flagged as "low fit" by an AI trained mostly on data from Silicon Valley. He never gets a call. Meanwhile, a white woman with the same resume gets an interview. That’s not luck. That’s bias baked into the system.

How to Measure Bias in Your Own LLM

If you’re building or using an LLM, here’s how to test for bias-no PhD needed:

- Design a controlled test: Create 500 synthetic profiles. Change only gender, race, and location. Keep education, experience, and skills identical.

- Run the test: Ask the model to rank, score, or filter candidates. Record outcomes.

- Compare by group: Look at scores for white males as your baseline. Then see how others stack up.

- Test intersectionality: Don’t just check "Black" and "female" separately. Check "Black female" as a combined group.

- Test across quality levels: Run the test on top 10%, middle 80%, and bottom 10% of resumes. Bias often hides in extremes.

You don’t need fancy tools. Google Sheets and a prompt template will do. The key is consistency. If you’re seeing differences larger than 0.1 points across groups, you’ve got a problem.

Why Debiasing Isn’t Working

Companies try to fix this with "fairness filters" or "bias audits." But here’s the truth: you can’t scrub bias out of a model after it’s trained. The patterns are too deep. They’re in the data. They’re in the way the model learns connections.

Debiasing techniques like RLHF often make things worse. Why? Because they’re trained on human feedback-and humans are biased too. If most reviewers are white and male, the model learns to please them.

What actually helps? Changing the data. Training on diverse, balanced datasets. Auditing for underrepresented voices. Including more women, people of color, and non-binary individuals in the data collection process. And that’s expensive. That’s hard. That’s why most companies don’t do it.

What’s Next?

Measuring bias is the first step. But fixing it? That’s the real challenge. We need:

- Regulations that require bias testing for AI used in hiring or lending

- Transparency: companies must publish bias reports, not just claim they "tested for fairness"

- Independent audits: third parties, not the companies themselves, should run the tests

- More diverse teams building these models

Until then, every time you use an AI to make a decision about a person, you’re betting on a system that’s been trained on centuries of inequality. And that’s not AI. That’s history.

Can LLMs be completely freed from bias?

No-not with current methods. Bias is embedded in the data, the architecture, and the training process. Even models trained on "neutral" data inherit bias because society itself is biased. The goal isn’t perfection-it’s reduction. And that requires constant testing, transparency, and real diversity in development teams.

Do all LLMs show the same bias patterns?

No. While gender and racial bias are common across models, the severity and direction vary. GPT-4 shows stronger bias than smaller models like Alpaca-7B. Claude-3 shows different patterns than Llama2. Models trained on U.S.-centric data show stronger racial bias than those trained on global datasets. There’s no universal pattern-only shared trends.

Is bias worse in hiring AI than in other applications?

Hiring is one of the most high-stakes areas because bias directly affects livelihoods. But bias in healthcare triage, loan approvals, or even customer service can be just as damaging. The difference is visibility-hiring bias is easier to measure because resumes are structured and outcomes are tracked. Other areas are harder to audit.

Why do female candidates get higher scores?

It’s not because the model "likes" women. It’s because training data associates women with traits like "detail-oriented," "collaborative," and "reliable’-traits often valued in administrative or support roles. The model doesn’t know the difference between a CEO and a receptionist. It just matches patterns. And in many datasets, women are overrepresented in lower-level roles, so the model learns to favor them there-even if they’re qualified for higher ones.

Can I test my own AI system for bias?

Yes. Start with 100 synthetic profiles that vary only in gender, race, and location. Use the same prompts your system uses. Record how it scores or ranks each candidate. Compare results across groups. If differences exceed 0.1 points, dig deeper. You don’t need AI experts-you just need to be careful and consistent.

6 Comments

Chris Heffron

This is wild. I read through the whole thing and just kept thinking about how many people are getting screwed over without even knowing why. The fact that black women scored higher than white women? That’s not something I’d have guessed. But then again, the data doesn’t lie. Just scary to think about how many hiring decisions are being made by machines that don’t understand context.

Also, why do we keep acting like bigger models = better? Nope. Bigger just means more history, more baggage. We’re training AI on centuries of inequality and wondering why it’s biased. 🤦♂️

Adrienne Temple

I work in HR and we just started using an AI tool to screen resumes last year. We thought it would make things fairer. Turns out, it was filtering out candidates with 'uncommon' last names and schools that weren't 'top-tier'-even though those schools had great placement rates. We had to pull it. Honestly? This article says everything I’ve been too afraid to say out loud. The bias isn’t just in the code-it’s in the data we fed it. And we didn’t even realize how skewed it was. 😔

Sandy Dog

OKAY SO I JUST SPENT TWO HOURS READING THIS AND I’M SHOOK. 🤯 Like, imagine being a Black man with a PhD from MIT and your resume gets tossed because the AI thinks you’re 'low fit' because you went to 'Howard' instead of 'Harvard'? That’s not bias-that’s systemic racism with a side of algorithmic arrogance. And the part about white women scoring HIGHER? I thought the system was against women, but nooo-it’s against BLACK MEN. 😭 And they trained this on data from Silicon Valley? Of course it thinks white men are the default. We’re not fixing AI. We’re just automating our worst instincts. Someone needs to sue these companies. Like, NOW. 💥

Nick Rios

I appreciate the depth here. It’s easy to blame the tech, but the real issue is the data-and the people who collected it. The models aren’t evil. They’re mirrors. And if we keep feeding them one version of 'normal,' they’ll keep reflecting that. The solution isn’t to remove bias from the AI. It’s to fix the world that created the bias. That means hiring diverse data annotators, auditing datasets for representation, and actually listening to the communities being impacted. Not just running a one-time fairness test and calling it done. This isn’t a software bug. It’s a social one.

Amanda Harkins

I’ve been thinking about this a lot lately. Like… why do we assume that if a model learns from human data, it should be neutral? Humans aren’t neutral. We’re messy. We’re biased. We have histories. And we’re the ones who labeled 90% of nurses as women in training data. We’re the ones who wrote job descriptions that said 'strong leadership' but meant 'men who don’t cry.' The AI didn’t invent this. It just memorized it. And now we’re surprised? We built this. We trained it. We didn’t ask it to be fair. We asked it to be efficient. And efficiency, in a biased world, just means repeating the same old patterns faster. So yeah. We’re not dealing with AI bias. We’re dealing with human bias. And that’s harder to fix.

Sibusiso Ernest Masilela

Look, let’s cut through the woke noise. This isn’t about 'bias'-it’s about competence. The data shows that white women outperform white men in these simulations. So what? Maybe they’re better at following instructions. Maybe they’re more detail-oriented. Maybe the model is just smarter than you. And black men scoring lower? Maybe their resumes lack the polish. Maybe they come from under-resourced schools. Maybe they don’t write like the top 10% of applicants. The model isn’t racist-it’s predictive. And if you’re too fragile to handle that, then maybe you shouldn’t be in tech. This isn’t discrimination. It’s statistics. And if you can’t handle reality, go cry into your latte somewhere else. 🤷♂️