Tag: LLM bias

Tamara Weed, Mar 12, 2026

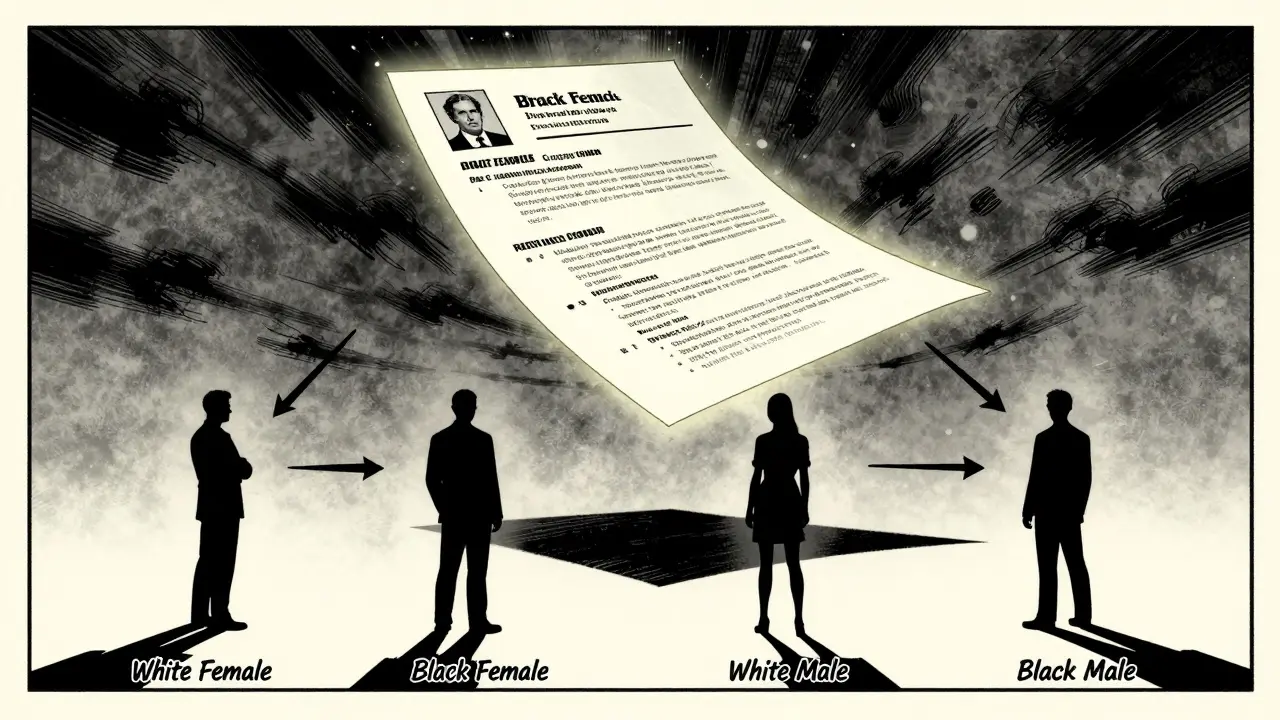

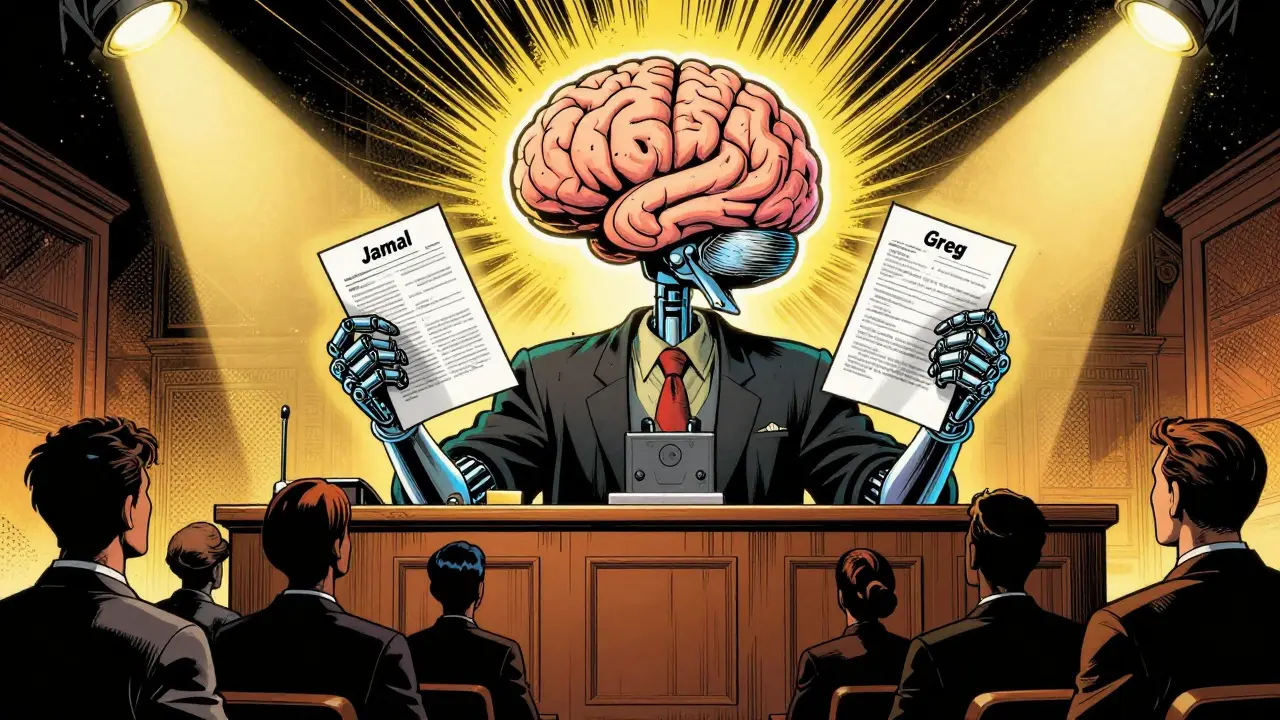

Large language models show measurable gender and racial bias in hiring and decision-making, favoring white women while penalizing Black men. Real-world testing reveals persistent, intersectional bias that current debiasing methods fail to fix.

Tamara Weed, Jan 15, 2026

Standardized protocols for measuring bias in large language models use audit tests, embedding analysis, and text evaluation to detect unfair patterns. Learn how these tools work, which ones are most effective, and how to start using them today.