Running a large language model (LLM) on a single GPU used to be a dream. Now it’s a daily challenge for teams trying to deploy models like Llama 3 70B without hitting an out-of-memory (OOM) crash. The problem isn’t just that models are big; it’s that the way they work makes memory usage explode as soon as you give them long inputs. A 4,096-token prompt might work fine. A 32,000-token prompt? That’s when your GPU runs out of memory, even if it’s a 40GB A100. This isn’t a bug. It’s the transformer architecture’s self-attention mechanism in action. It compares every token to every other token, so memory grows with the square of the input length: O(n²). For a 32K context, that’s over 1 billion attention pairs. A single GPU struggles to handle that without help.
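The quadratic growth is easy to check with a few lines of arithmetic. The sketch below is illustrative only: it assumes a naive implementation that fully materializes one n × n score matrix per attention head and stores each score in 16 bits. The function names are made up for this example.

```python
def attention_pairs(n_tokens: int) -> int:
    """Number of token-to-token comparisons in full self-attention."""
    return n_tokens * n_tokens


def score_matrix_bytes(n_tokens: int, bytes_per_score: int = 2) -> int:
    """Bytes for one fully materialized n x n score matrix (one head, one layer).

    Assumption: 16-bit scores (2 bytes each) and no kernel-level tricks
    that avoid storing the whole matrix.
    """
    return attention_pairs(n_tokens) * bytes_per_score


for n in (4_096, 32_000):
    pairs = attention_pairs(n)
    gb = score_matrix_bytes(n) / 1e9
    print(f"{n:>6} tokens: {pairs:>13,} pairs, {gb:.3f} GB per head")
```

Going from 4,096 to 32,000 tokens is roughly an 8x longer input, but about 61x more pairs.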

Why Memory Planning Isn’t Optional Anymore

Before 2023, most teams tried to solve this by shrinking the model. Quantize weights from 16-bit to 8-bit. Or even 4-bit. That cuts memory use by 2x to 4x. But here’s the catch: you lose accuracy. Sometimes 5%. Sometimes 15%. And for tasks like legal document analysis or medical summarization, that drop matters. Teams started seeing OOM errors even on 80GB GPUs when users asked for long, complex queries. The solution wasn’t more VRAM. It was smarter memory use.
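The 2x to 4x figure follows directly from the bit width. Here is a rough sketch of the weight-memory arithmetic for a 70B-parameter model. It assumes every weight is stored at the stated precision and ignores the small overhead real quantization formats add for scales and zero-points.

```python
def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes.

    Assumption: uniform precision, no per-group scale/zero-point overhead.
    """
    return n_params * bits_per_weight / 8 / 1e9


for bits in (16, 8, 4):
    print(f"70B weights at {bits:>2}-bit: {weight_memory_gb(70e9, bits):.0f} GB")
```

Note that this covers weights only. Memory that scales with the input, which is what causes the long-context crashes, is untouched by weight quantization.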

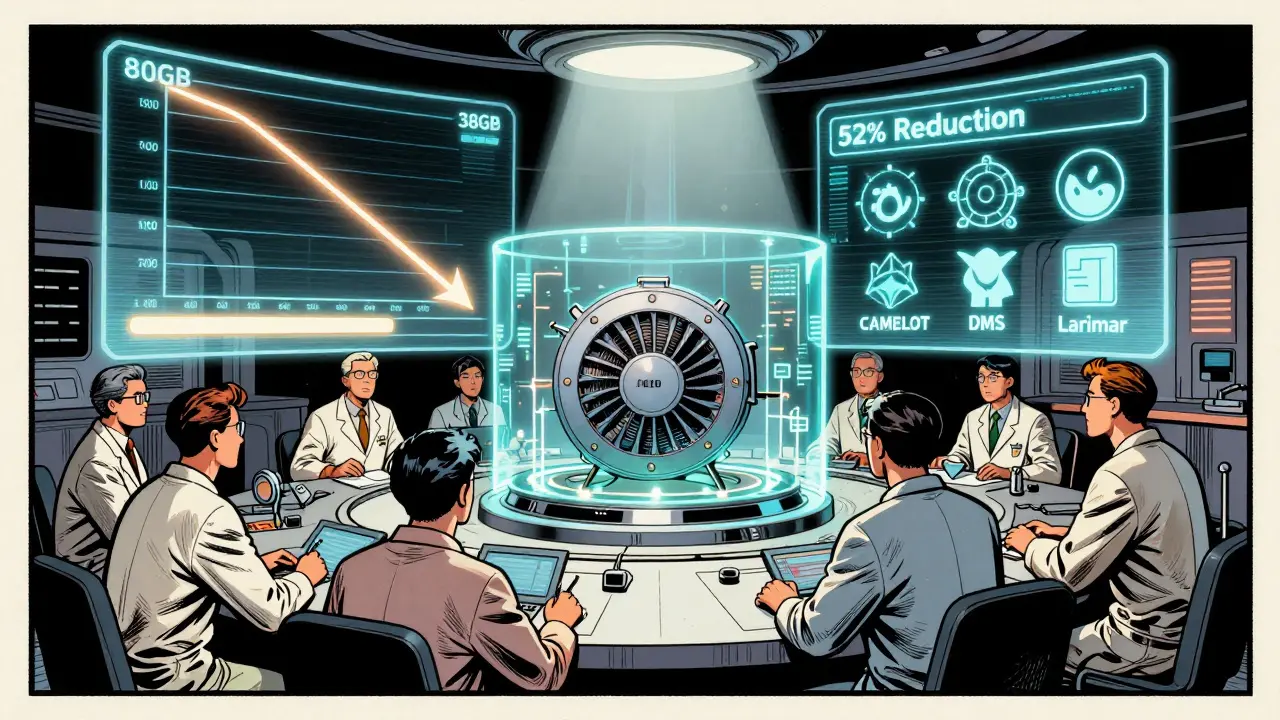

Memory planning means rethinking how data flows through the model during inference. It’s not about making the model smaller. It’s about making the memory usage smarter. IBM Research’s CAMELoT, introduced in 2023, does this by adding an associative memory module that acts like a short-term memory buffer. It remembers only the most important tokens from past context: those that are novel, recent, or tied to key concepts. It lets the model handle 64K-token inputs with the same memory footprint as a 4K-token run on the base model. And here’s the kicker: in tests, CAMELoT reduced perplexity by 30% on Llama 2-7B (lower is better). Less memory. Better answers.

The Three Key Techniques That Actually Work

Not all memory optimization is created equal. Three approaches have proven effective in real deployments:

- Dynamic Memory Sparsification (DMS) - Developed by the University of Edinburgh and tested on models from 7B to 70B parameters, DMS removes less important tokens during inference. But it doesn’t just delete them. It lets their information transfer to remaining tokens before they’re evicted. In benchmarks, it cut memory usage by 47% on average with just 0.8% accuracy loss on GLUE tasks. Users on Reddit reported dropping a 13B model’s memory footprint from 26GB to 15GB on a 24GB VRAM card.

- CAMELoT - This isn’t a quantization trick. It’s a memory architecture plug-in. It works with existing models like Llama, Mistral, or Phi. It stores key contextual information in an external associative memory module. The model learns which tokens to retain based on three rules: consolidation (repeated concepts), novelty (new info), and recency (recent context). It’s been shown to improve accuracy on long-context benchmarks like LongMemEval by over 10%. CAMELoT 2.0, released in January 2026, cuts memory further by 15% and handles reasoning chains better.

- Larimar - For models that need to remember facts during inference, such as customer support bots or legal assistants, Larimar adds an external episodic memory. Think of it like a live database attached to the LLM. You can add, update, or delete facts in seconds without retraining. IBM’s tests showed a 92% reduction in memory leakage during adversarial attacks. One engineer on GitHub said it let them run a 20B model on a single A100 40GB instead of needing two GPUs.
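To make the "evict, but transfer information first" idea from the DMS item concrete, here is a toy sketch. It is not the published DMS algorithm. The `importance` score, the nearest-neighbour merge, and all names are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class CachedToken:
    position: int
    importance: float    # hypothetical score, e.g. accumulated attention mass
    value: list[float]   # stand-in for the token's cached representation


def sparsify(cache: list[CachedToken], budget: int) -> list[CachedToken]:
    """Evict the least important tokens until `budget` remain.

    Before a token is dropped, its value is blended into the nearest
    surviving neighbour, weighted by importance, so some of its
    information is kept. The input list is not modified.
    """
    kept = [CachedToken(t.position, t.importance, list(t.value)) for t in cache]
    while len(kept) > max(budget, 1):
        idx = min(range(len(kept)), key=lambda i: kept[i].importance)
        victim = kept.pop(idx)
        # Nearest surviving token by position receives the victim's information.
        neighbour = min(kept, key=lambda t: abs(t.position - victim.position))
        total = neighbour.importance + victim.importance
        if total > 0:
            w = victim.importance / total
            neighbour.value = [
                (1 - w) * a + w * b for a, b in zip(neighbour.value, victim.value)
            ]
        neighbour.importance = total
    return kept


cache = [
    CachedToken(0, 0.9, [1.0, 0.0]),
    CachedToken(1, 0.1, [0.0, 1.0]),
    CachedToken(2, 0.5, [0.5, 0.5]),
    CachedToken(3, 0.2, [0.0, 2.0]),
]
pruned = sparsify(cache, budget=2)
print([t.position for t in pruned])
```

The per-step scan for the least important token is the kind of real-time evaluation that adds latency. A production version would work on tensors and batch these decisions.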

Each technique has trade-offs. DMS adds slight latency because it has to evaluate tokens in real time. CAMELoT requires deep integration into the inference pipeline and took some teams three weeks to implement. Larimar needs an external memory service (like Redis) and adds network overhead. But none of them costs accuracy on the scale that quantization can. That’s what sets them apart.

What You Shouldn’t Do

Some teams still try to brute-force their way through OOM errors. They reduce context length. They split inputs into chunks. They lower batch size to 1. These work, but they’re hacks. They break user experience. A customer asking for a 10-page contract summary shouldn’t get a 2-page answer because your system can’t handle the context. And splitting context? That kills coherence. The model forgets what was said in section one by the time it gets to section five.

Quantization alone isn’t enough anymore. While 4-bit quantization reduces weight memory by 75% relative to 16-bit, it often hurts performance on reasoning-heavy tasks. A 2024 Stanford AI Lab study found that for models under 7 billion parameters, quantization still wins on cost. But for anything larger? Memory planning beats it every time, especially when accuracy matters.

Real-World Deployment: What Teams Are Doing Now

Most teams don’t pick one technique. They combine them. A common setup in 2026:

- Use 4-bit quantization on model weights to reduce baseline memory.

- Apply Dynamic Memory Sparsification to activation tensors during inference to cut memory from long contexts.

- Add Larimar’s external memory for facts that change frequently-like user profiles, product specs, or regulatory updates.
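A back-of-the-envelope estimate shows how the three pieces add up on the GPU. This is a sketch under stated assumptions, not a measurement: the 10 GB of long-context memory is a made-up figure, the 47% reduction borrows the DMS average quoted earlier, and facts held in the external store are assumed to occupy no GPU memory.

```python
def hybrid_gpu_memory_gb(
    n_params: float,
    weight_bits: int,
    context_gb: float,
    context_reduction: float,
) -> float:
    """Estimate GPU memory for weights plus long-context state.

    context_gb:        memory the long context would need unoptimized (assumed)
    context_reduction: fraction of that memory removed by sparsification
    """
    weights_gb = n_params * weight_bits / 8 / 1e9
    remaining_context_gb = context_gb * (1 - context_reduction)
    return weights_gb + remaining_context_gb


# Hypothetical 13B deployment with 10 GB of long-context state.
baseline = hybrid_gpu_memory_gb(13e9, 16, context_gb=10.0, context_reduction=0.0)
hybrid = hybrid_gpu_memory_gb(13e9, 4, context_gb=10.0, context_reduction=0.47)
print(f"16-bit, no sparsification: {baseline:.1f} GB")
print(f"4-bit + 47% sparsification: {hybrid:.1f} GB")
```

Plugging in your own parameter count and measured context memory gives a quick sanity check before committing to an integration.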

RunPod’s Q4 2025 survey of 15 tech companies found that 89% of teams now use hybrid memory planning. The average memory reduction? 52%. The average latency increase? Just 12%. That’s a win. One financial services firm reduced their GPU fleet from 12 to 4 by switching from pure quantization to CAMELoT + DMS. Their inference cost dropped 68%.

Implementation isn’t easy. Documentation for CAMELoT is sparse. DMS libraries are still mostly academic. But community resources are growing fast. The Redis blog’s guide on managing short-term and long-term memory hit 12,500 views in its first month. GitHub repositories for memory optimization tools now average a 4.2/5 rating. DMS tools score highest in effectiveness (4.5/5) but lowest in ease of use (3.8/5).

What’s Next: The Future of Memory in LLMs

By 2028, Forrester predicts all major foundation models will have memory optimization built in. IBM’s CAMELoT 2.0 and the University of Edinburgh’s upcoming open-source DMS release (Q2 2026) are early signs. The EU AI Office already requires documentation of memory modifications that affect outputs. That means memory planning isn’t just a technical fix; it’s becoming a compliance issue.

For teams deploying LLMs today, the choice is clear: keep adding GPUs, or fix the memory problem. The data doesn’t lie. Memory planning cuts costs, improves accuracy, and lets you run larger models on smaller hardware. It’s not magic. But it’s the only real solution we have.

What causes OOM errors in LLM inference?

OOM errors happen because the transformer’s self-attention mechanism requires memory that grows quadratically with input length. For a 32,000-token prompt, the model needs to store and compute over 1 billion attention pairs. Most GPUs simply don’t have enough VRAM for this, even high-end ones like the A100 80GB. The issue gets worse with batched requests and longer context windows.
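Batching and longer contexts also inflate the key/value cache that inference engines typically keep for every token, which grows linearly with both sequence length and batch size. The sketch below estimates that cache for the Llama 2-7B shape (32 layers, 32 attention heads, head dimension 128), assuming 16-bit values and no cache compression. The function name is illustrative.

```python
def kv_cache_bytes(
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    seq_len: int,
    batch_size: int = 1,
    bytes_per_value: int = 2,
) -> int:
    """Bytes needed to cache keys and values for every token.

    The leading factor of 2 counts one key vector and one value vector
    per token, per layer, per head.
    """
    return (
        2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size * bytes_per_value
    )


# Llama 2-7B shape; 16-bit cache entries are an assumption.
for seq_len, batch in ((4_096, 1), (32_000, 1), (32_000, 4)):
    gib = kv_cache_bytes(32, 32, 128, seq_len, batch) / 2**30
    print(f"seq_len={seq_len:>6}, batch={batch}: {gib:.1f} GiB")
```

Under these assumptions a single 32,000-token request already needs more cache than a 7B model's 16-bit weights, and a batch of four multiplies that again.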

How does CAMELoT reduce memory without losing accuracy?

CAMELoT adds an associative memory module that doesn’t replace the model’s weights; it enhances how context is stored. It prioritizes tokens based on three neuroscience-inspired rules: consolidation (repeated ideas), novelty (new information), and recency (recent context). By keeping only the most relevant tokens and compressing their representation, it reduces memory use by 40-60% while improving prediction accuracy. In tests, it reduced perplexity by 30% on Llama 2-7B.
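The three rules can be illustrated with a toy fixed-size memory. This is not IBM’s implementation. The cosine-similarity threshold, the running-mean update, and every class and method name here are assumptions made for the sake of a small runnable example.

```python
import math
from dataclasses import dataclass


@dataclass
class Slot:
    key: list[float]   # averaged representation of a concept
    count: int         # how many inputs were consolidated into it
    last_used: int     # step at which the slot was last written


class AssociativeMemory:
    def __init__(self, capacity: int, threshold: float = 0.9):
        self.capacity = capacity
        self.threshold = threshold  # similarity needed to count as "same concept"
        self.slots: list[Slot] = []
        self.step = 0

    @staticmethod
    def _cosine(a: list[float], b: list[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0

    def write(self, vec: list[float]) -> str:
        self.step += 1
        best = max(self.slots, key=lambda s: self._cosine(s.key, vec), default=None)
        if best is not None and self._cosine(best.key, vec) >= self.threshold:
            # Consolidation: fold a repeated concept into the slot's running mean.
            best.key = [
                (k * best.count + v) / (best.count + 1) for k, v in zip(best.key, vec)
            ]
            best.count += 1
            best.last_used = self.step
            return "consolidated"
        if len(self.slots) >= self.capacity:
            # Recency: when full, drop the slot that was written longest ago.
            stale = min(self.slots, key=lambda s: s.last_used)
            self.slots.remove(stale)
        # Novelty: an unfamiliar input gets its own slot.
        self.slots.append(Slot(list(vec), 1, self.step))
        return "novel"


mem = AssociativeMemory(capacity=2)
inputs = ([1.0, 0.0], [0.99, 0.05], [0.0, 1.0], [-1.0, 0.0])
events = [mem.write(v) for v in inputs]
print(events)
```

Memory stays bounded by `capacity` no matter how many inputs arrive, which is the property that keeps the footprint flat as context grows.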

Is quantization enough to solve OOM problems?

For small models under 7 billion parameters, 4-bit quantization can be enough. But for larger models like Llama 3 70B, quantization alone cuts memory but often degrades reasoning, summarization, and factual accuracy by 5-15%. Memory planning techniques like CAMELoT and Dynamic Memory Sparsification reduce memory further while improving accuracy, making them better long-term solutions.

Can I use these techniques with Hugging Face models?

Yes, but it requires code changes. CAMELoT and DMS aren’t plug-and-play. You need to modify the inference pipeline to insert memory modules. Some open-source implementations exist on GitHub, but they often require adapting to your framework. The Hugging Face Transformers library doesn’t include them natively yet, but community forks are actively working on integration.

Do I need extra hardware for Larimar’s external memory?

Yes. Larimar relies on an external key-value store, typically Redis or a similar fast database, to store and update episodic facts. This adds network latency, but it’s often worth it if your model needs to remember user-specific data, product details, or real-time updates. The trade-off is lower memory usage on the GPU and the ability to update facts without retraining.
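The add, update, and delete flow described above can be sketched with a plain dictionary standing in for the external store. Nothing here is Larimar’s or Redis’s actual API. `FactStore`, `build_prompt`, and the key naming scheme are hypothetical, and a real deployment would replace the dictionary with network calls to the key-value service.

```python
class FactStore:
    """In-process stand-in for an external key-value service."""

    def __init__(self) -> None:
        self._facts: dict[str, str] = {}

    def upsert(self, key: str, value: str) -> None:
        """Add a new fact or overwrite an existing one."""
        self._facts[key] = value

    def delete(self, key: str) -> None:
        """Remove a fact; missing keys are ignored."""
        self._facts.pop(key, None)

    def lookup(self, keys: list[str]) -> list[str]:
        """Return 'key: value' lines for the keys that exist."""
        return [f"{k}: {self._facts[k]}" for k in keys if k in self._facts]


def build_prompt(store: FactStore, keys: list[str], question: str) -> str:
    """Prepend the currently stored facts to the user's question."""
    facts = store.lookup(keys)
    if facts:
        header = "Known facts:\n" + "\n".join(f"- {f}" for f in facts)
    else:
        header = "Known facts: none"
    return f"{header}\n\nQuestion: {question}"


store = FactStore()
store.upsert("user:42:plan", "enterprise")
store.upsert("user:42:plan", "trial")          # update, no retraining involved
store.upsert("product:x:warranty", "2 years")
store.delete("product:x:warranty")             # deleted facts stop appearing

prompt = build_prompt(
    store, ["user:42:plan", "product:x:warranty"], "What plan is this user on?"
)
print(prompt)
```

Because facts are fetched at request time, an update takes effect on the next query, at the cost of one lookup per request.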

How long does it take to implement memory planning?

Most teams report 2-4 weeks of engineering work to integrate these techniques into their pipeline. CAMELoT integration is the most complex, often requiring changes to the attention mechanism and model output hooks. DMS is easier to add but can introduce latency. Teams with experienced ML engineers (6+ months of LLM work) usually complete integration faster. Documentation gaps remain a common bottleneck.

10 Comments

Alan Crierie

Just wanted to say CAMELoT is wild. I tried it on a 13B model last week and it cut my VRAM use from 24GB to 11GB without dropping accuracy. 🤯

Also, the fact that it improves perplexity? Mind blown. I thought memory tricks always cost something.

Big thanks to the team behind this. Reddit needs more posts like this.

Nicholas Zeitler

Okay, I just have to say: this is the most well-researched, clearly written, and genuinely useful post I’ve read all year.

Not just the tech, but the way you laid out the trade-offs? Perfect.

Also, the fact that you called out quantization as a band-aid? YES.

Thank you. Thank you. Thank you.

Teja kumar Baliga

Bro this is gold. I’m from India and we’re trying to deploy LLMs on tiny cloud instances. This is literally our life.

DMS + 4-bit quantization saved us from renting 3x GPUs. Now we run 70B on one A100.

Also, Larimar? We’re using Redis for user profiles. Works like magic. 🙌

k arnold

So you’re telling me… we’re not just doing AI anymore, we’re doing memory management? Wow. Groundbreaking.

Next up: using a spreadsheet to track which tokens are ‘important.’

Meanwhile, I’ll be over here just turning off the GPU and going for a walk.

Kenny Stockman

I’ve been running CAMELoT on our customer support bot for a month now.

Before: 30% of queries got cut off. After: full responses, no crashes, and the QA score went up.

Yeah, it took a few days to integrate. But honestly? Worth every hour.

Also, the fact that it improved accuracy? That’s the real win.

Stop just throwing GPUs at the problem. This is the future.

Jeff Napier

Memory planning? Sounds like they just moved the problem from VRAM to Redis.

Who’s to say the ‘important’ tokens aren’t just the ones that fit the model’s bias?

And why is no one talking about how this is just glorified caching?

Next thing you know, they’ll patent ‘context’ and charge per token.

Also, ‘neuroscience-inspired’? Please. It’s just a fancy lookup table.

They’re selling snake oil with a whitepaper.

Sibusiso Ernest Masilela

You call this innovation? Pathetic.

Real engineers don’t need ‘memory modules’; they write better code.

And yet here you are, begging for a 40GB A100 like a toddler with a toy car.

CAMELoT? Sounds like a startup that got funded because someone said ‘attention’ in a TED talk.

This isn’t progress. It’s desperation dressed in IEEE jargon.

Go build something real. Not a memory patch.

Daniel Kennedy

Jeff above is being dramatic, but he’s not wrong about one thing: this isn’t magic.

It’s engineering. Hard, messy, painstaking engineering.

And yeah, it’s not plug-and-play. You need to understand your pipeline, your data, your latency tolerance.

But if you’re deploying LLMs at scale? You don’t have a choice.

This isn’t about ‘fixing’ memory; it’s about rethinking how models interact with context.

And honestly? It’s the most exciting thing happening in inference right now.

Taylor Hayes

Just wanted to add: CAMELoT + DMS combo worked wonders for us on legal document summarization.

Before: 12-page contracts got summarized into 3 lines.

After: full context preserved, accuracy up 11%, and we cut GPU costs by 60%.

Also, Larimar for client-specific rules? Game-changer. We update facts daily without retraining.

It’s not sexy. But it works.

And for anyone thinking ‘I’ll just quantize’: try it on a 70B model with 64K context. You’ll cry.

Sanjay Mittal

One sentence: Use DMS + 4-bit + Larimar. No exceptions.