When you're deciding which large language model (LLM) to use, it's easy to get fooled by raw performance numbers. That shiny new model with 89% accuracy on the MMLU benchmark sounds perfect, until you check the price tag. Suddenly, you're paying 5x more than another model that does almost the same job. This isn't about being cheap. It's about performance per dollar, and finding the elbow in the curve where spending more gives you almost nothing back.

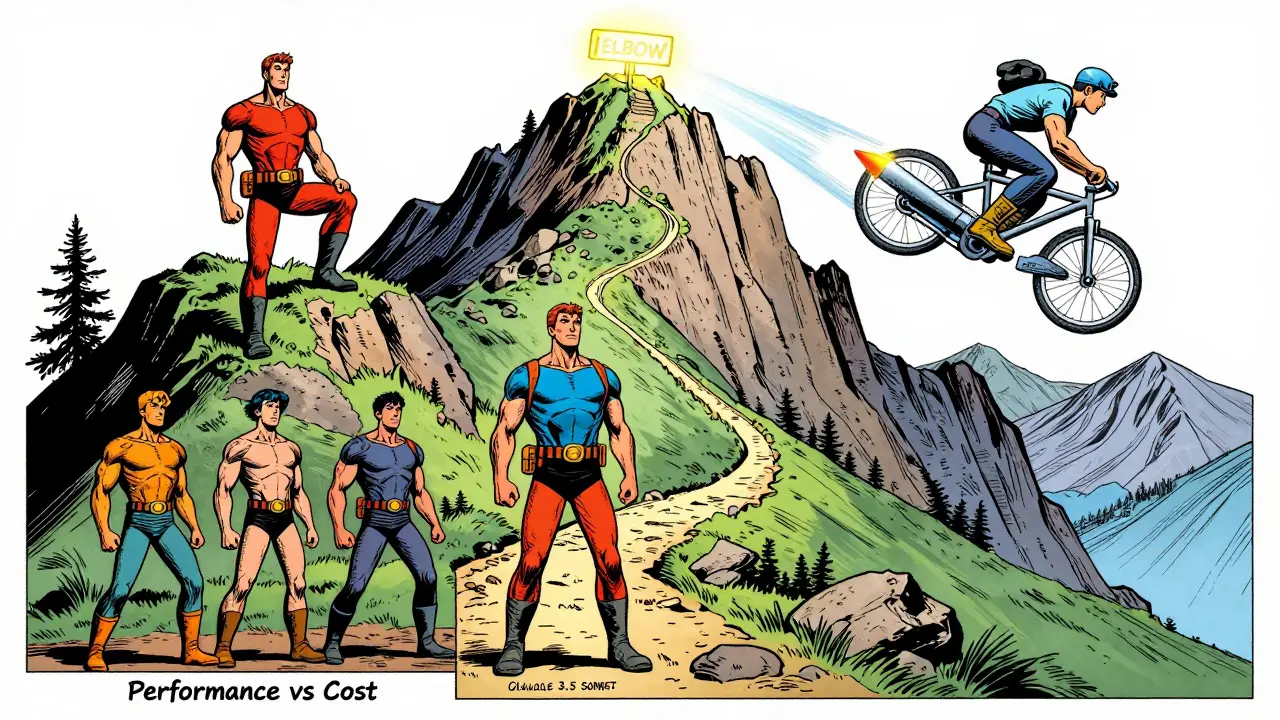

What the Elbow Really Means

Think of the performance vs cost curve like a hiking trail. You start at the bottom, where models are cheap but weak. As you climb, performance improves, but so does the price. At some point, the trail flattens out. That’s the elbow. Beyond it, you’re not gaining much more ability, but you’re paying a lot more.

In early 2026, the clearest elbow was between Claude 3.5 Sonnet and Claude 3 Opus. Both scored around 86-88% on MMLU, a standard benchmark for general knowledge. But Sonnet cost $6 per million tokens. Opus? $30. That’s a 5x price jump for a score within 2 points, and in the table below Opus is actually the lower of the two. If you don’t need cutting-edge reasoning, you’re throwing money away. That’s not a premium. It’s a trap.

The Numbers Don’t Lie (But They Can Mislead)

Let’s break down the real players in early 2026:

| Model | MMLU Score | Cost per 1M Tokens (USD) | Performance-per-Dollar Index | Value Tier |
|---|---|---|---|---|
| Claude 3.5 Sonnet | 88.7% | $6.00 | 14,783 | Exceptional |
| GPT-4o | 88.4% | $7.50 | 11,787 | Excellent |
| GPT-4 Turbo | 86.4% | $15.00 | 5,760 | Good |
| Claude 3 Opus | 86.8% | $30.00 | 2,893 | Premium |
| DeepSeek R1 | 87.1% | $0.65 | 134,000 | Exceptional |
| Llama 3.2 3B | 42% | $0.06 | 700,000 | Commodity |

Notice something? Claude 3.5 Sonnet and GPT-4o are nearly identical in performance, but Sonnet is 20% cheaper and scores 0.3 points higher. That looks less like a coincidence than a strategic pricing move. OpenAI kept GPT-4o at a premium, betting on brand loyalty. Anthropic undercut them, knowing most users won’t pay extra for a score that is no better.
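One way to look for an elbow numerically is to drop every model that a cheaper model already beats, then measure how many benchmark points each step up buys per extra dollar. The sketch below does that with the table's figures; the function names and the "points per extra dollar" measure are illustrative assumptions, not a standard metric.

```python
# Figures copied from the table above: (name, MMLU %, USD per 1M tokens).
MODELS = [
    ("Claude 3.5 Sonnet", 88.7, 6.00),
    ("GPT-4o", 88.4, 7.50),
    ("GPT-4 Turbo", 86.4, 15.00),
    ("Claude 3 Opus", 86.8, 30.00),
    ("DeepSeek R1", 87.1, 0.65),
    ("Llama 3.2 3B", 42.0, 0.06),
]


def pareto_frontier(models):
    """Keep only models that outscore every cheaper model."""
    frontier = []
    best_score = float("-inf")
    # Cheapest first; on a price tie, the higher score goes first.
    for name, score, cost in sorted(models, key=lambda m: (m[2], -m[1])):
        if score > best_score:
            frontier.append((name, score, cost))
            best_score = score
    return frontier


def marginal_gains(frontier):
    """Benchmark points gained per extra dollar for each step up the frontier."""
    steps = []
    for (n0, s0, c0), (n1, s1, c1) in zip(frontier, frontier[1:]):
        steps.append((n0, n1, (s1 - s0) / (c1 - c0)))
    return steps


frontier = pareto_frontier(MODELS)
steps = marginal_gains(frontier)
for cheaper, pricier, gain in steps:
    print(f"{cheaper} -> {pricier}: {gain:.2f} points per extra dollar")
```

On these numbers only three models survive the filter, and the return per extra dollar falls from roughly 76 points to about 0.3 between the two steps. A sharp drop like that is what the elbow looks like in data; where it lands on your own task scores may differ.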

And then there’s DeepSeek R1. At $0.65 per million tokens, it’s 40x cheaper than OpenAI’s o1 model. Same quality. Same speed. That’s not just a bargain. It’s a market shock. It forced everyone else to rethink their pricing. If you’re running customer support bots or internal documentation tools, DeepSeek isn’t just an option. It’s the default.

Costs Are Plummeting Faster Than You Think

Back in 2021, running a GPT-3-level model cost $60 per million tokens. By 2025, that same performance dropped to $0.06. That’s a 1,000x drop in just four years. But here’s the kicker: the biggest drops didn’t happen evenly. After January 2024, price declines jumped from an average of 50x per year to 200x. Some categories, like basic reasoning and text generation, saw 900x annual drops. That’s not linear. It’s exponential. And it’s not slowing down.

Why? Because compute power is becoming a commodity. Cloud providers are flooding the market with AI-optimized chips. Open-source models are getting better every month. And companies like DeepSeek, Meta, and Mistral aren’t playing by the old rules. They’re undercutting to capture market share.
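The figures above mix a total drop with per-year rates, so it helps to put them on the same footing. Here is a minimal sketch of that arithmetic, assuming a constant decline factor per year (a simplification; real prices fall in steps) and using illustrative function names.

```python
def annualized_factor(start_price, end_price, years):
    """Constant yearly decline factor that links two prices."""
    return (start_price / end_price) ** (1 / years)


def project_price(price, annual_factor, years):
    """Price after `years` if it keeps falling by `annual_factor` each year."""
    return price / annual_factor ** years


# The $60 -> $0.06 pair quoted above, over four years.
total_drop = 60 / 0.06
per_year = annualized_factor(60, 0.06, 4)
print(f"total drop: {total_drop:.0f}x, about {per_year:.1f}x per year")

# What a sustained 200x yearly decline would do to a $6 price in one year.
print(f"projected: ${project_price(6.00, 200, 1):.2f} per million tokens")
```

The endpoint pair works out to an average of roughly 5.6x per year, well below the 50x and 200x rates. Those higher rates presumably describe the price of reaching specific capability thresholds rather than this one pair of prices; that reading is an assumption, so treat any projection built on them as a best case.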

Don’t Just Look at Price Per Token

If you’re only comparing per-token costs, you’re missing half the picture. A model might be cheap, but if it hallucinates on medical facts or fails your internal QA tests, you’re losing money. Performance-per-dollar only works if performance actually matters to your use case.

Think about this: MMLU measures general knowledge. But what if you’re in finance? You need to parse SEC filings. What if you’re in legal? You need to cite case law. A model that scores 88% on MMLU might score 52% on your real-world task. That’s why pilot programs matter. Test models on your data. Measure accuracy, latency, and output consistency, not just benchmarks.

Also, factor in hidden costs. Streaming responses? Batch processing? Token caching? Prompt compression? These can cut your token usage by 30-60%. A $6 model that eats 10x more tokens because of poor architecture might cost more than a $15 model that’s optimized for your workflow.

Where Should You Draw the Line?

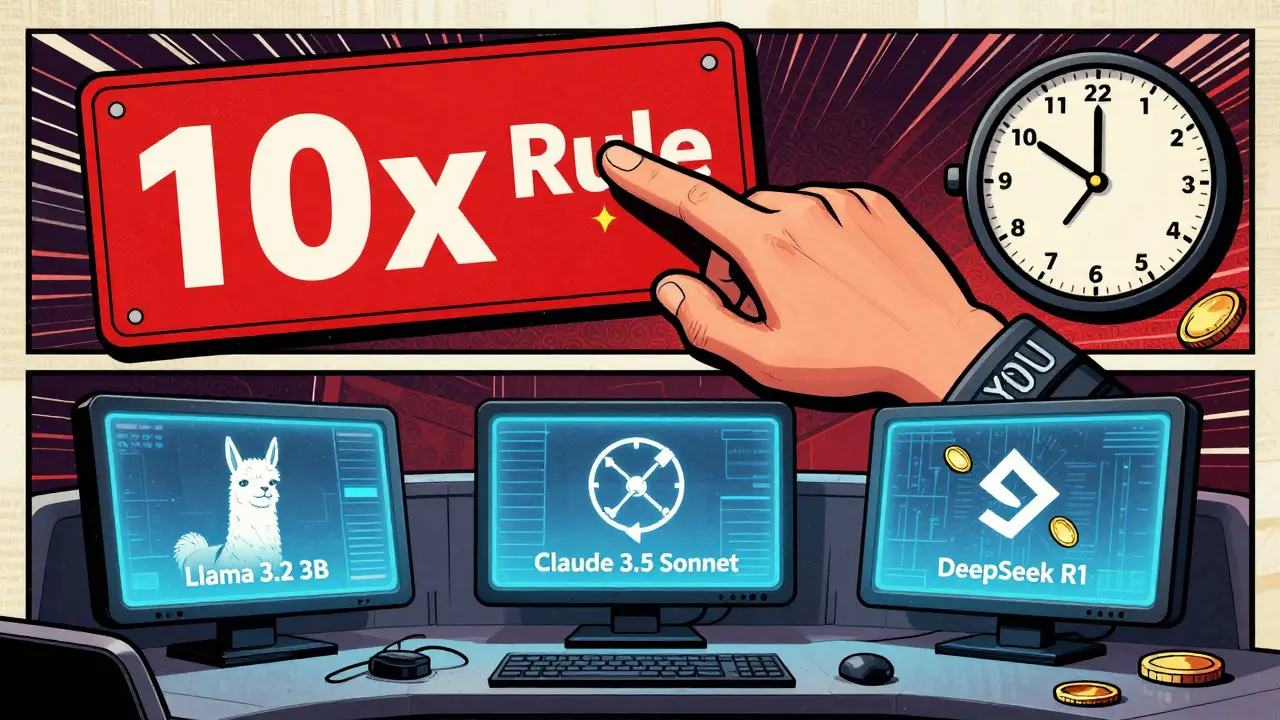

Here’s how to find your elbow:

- Define your minimum performance threshold. Do you need 85% accuracy? 80%? 70%? Most companies don’t need 88%.

- Find the cheapest model that meets it. For 42%+ performance? Llama 3.2 3B at $0.06. For 85%+? On the table’s numbers, DeepSeek R1 at $0.65, or Claude 3.5 Sonnet at $6 if you are choosing between Anthropic and OpenAI. For 88%+? Claude 3.5 Sonnet; GPT-4o doesn’t justify the 25% cost bump.

- Compare the next tier up. Is the jump worth it? Claude 3 Opus? No. GPT-4 Turbo? Only if you’re locked into OpenAI’s ecosystem.

- Build a hybrid stack. Use Llama 3.2 for low-stakes tasks. Use Claude 3.5 Sonnet for high-accuracy work. Keep DeepSeek as a backup if pricing shifts.
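The first two steps can be sketched as a simple filter-then-minimize over the table's figures. The function name `cheapest_meeting` and the use of MMLU as the stand-in for "performance" are assumptions for illustration; in practice you would substitute scores measured on your own tasks.

```python
from typing import Optional, Tuple

# Figures copied from the table above: (name, MMLU %, USD per 1M tokens).
MODELS = [
    ("Claude 3.5 Sonnet", 88.7, 6.00),
    ("GPT-4o", 88.4, 7.50),
    ("GPT-4 Turbo", 86.4, 15.00),
    ("Claude 3 Opus", 86.8, 30.00),
    ("DeepSeek R1", 87.1, 0.65),
    ("Llama 3.2 3B", 42.0, 0.06),
]


def cheapest_meeting(threshold: float, models=MODELS) -> Optional[Tuple[str, float, float]]:
    """Return the lowest-priced model whose score is at least `threshold`."""
    candidates = [m for m in models if m[1] >= threshold]
    if not candidates:
        return None  # nothing in the list is good enough
    return min(candidates, key=lambda m: m[2])


for threshold in (42, 85, 88):
    pick = cheapest_meeting(threshold)
    print(f"{threshold}%+ -> {pick[0]} at ${pick[2]:.2f} per 1M tokens")
```

Returning `None` when no model clears the bar is deliberate: it forces the caller to decide whether to lower the threshold or look beyond the list, instead of silently picking an underperforming model.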

The most successful teams in 2026 aren’t chasing the “best” model. They’re using the right model for the job. One company reduced its LLM costs by 87% by switching from GPT-4 Turbo to DeepSeek R1 for internal chatbots, without a single complaint from users.

What’s Coming Next?

By late 2026, frontier models like Claude 3.5 Sonnet and GPT-4o could drop below $1 per million tokens. That’s not speculation. It’s math: if prices keep falling at 200x per year, today’s $6 model becomes $0.03 in 12 months.

But here’s the twist: while general models commoditize, specialized ones will rise. Legal, medical, and financial models trained on proprietary data will charge $1-$50 per million tokens. Why? Because they’re not competing on price. They’re competing on trust, accuracy, and compliance.

So your next investment decision shouldn’t be: “Which model is best?” It should be: “What performance level do I actually need, and what’s the cheapest way to get it without breaking my application?”

Final Rule: The 10x Rule

If a model is more than 10x more expensive than the next best performer with similar results, walk away. That’s not premium pricing. That’s overpaying for hype. The elbow isn’t just a curve. It’s a signal. And if you’re not listening to it, you’re leaving money on the table.

What is the Performance-per-Dollar Index?

The Performance-per-Dollar Index is a metric that divides a model’s benchmark score in percentage points (like MMLU) by its cost in dollars per million tokens, then scales the result by 1,000. For example, a model scoring 88.7% on MMLU at $6 per million tokens has an index of 88.7 / 6 × 1,000 ≈ 14,783. Higher numbers mean better value. It helps you compare models fairly, not just by price or performance alone.
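As a quick check, the index can be computed in a few lines. This sketch assumes the 1,000x scale factor, which is inferred from the worked example (88.7 at $6 giving 14,783) rather than stated as a formal definition; the function name is illustrative.

```python
def performance_per_dollar(score_pct, cost_per_million, scale=1000):
    """Benchmark points per dollar (per 1M tokens), scaled by `scale`."""
    if cost_per_million <= 0:
        raise ValueError("cost_per_million must be positive")
    return score_pct / cost_per_million * scale


# Figures from the comparison table: (name, MMLU %, USD per 1M tokens).
rows = [
    ("Claude 3.5 Sonnet", 88.7, 6.00),
    ("GPT-4o", 88.4, 7.50),
    ("GPT-4 Turbo", 86.4, 15.00),
    ("Claude 3 Opus", 86.8, 30.00),
    ("DeepSeek R1", 87.1, 0.65),
    ("Llama 3.2 3B", 42.0, 0.06),
]

index = {name: round(performance_per_dollar(score, cost)) for name, score, cost in rows}
for name, value in index.items():
    print(f"{name}: {value:,}")
```

Applying the same scaling to every row gives 700,000 for Llama 3.2 3B, the highest in the list. That is a reminder that the index rewards cheapness as much as quality, so it only makes sense among models that already clear your accuracy threshold.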

Is Claude 3.5 Sonnet really the best value?

As of early 2026, yes, for most general-purpose use cases. It matches or exceeds GPT-4o’s performance at 20% lower cost. Its main advantages are consistency and low latency. If you’re not doing highly specialized reasoning, it’s the optimal balance of price and capability.

Should I use open-source models like Llama 3.2 3B?

Absolutely, if your needs are basic. Llama 3.2 3B delivers GPT-3-level performance at 1/100th the cost of top-tier models. It’s ideal for chatbots, content summarization, tagging, and internal tools where accuracy above 40% is sufficient. Just don’t use it for medical or legal advice without heavy validation.

Why is DeepSeek R1 so much cheaper than OpenAI’s models?

DeepSeek is a Chinese AI research group that prioritizes market share over margins. They’ve optimized their training and inference pipelines for efficiency and are willing to lose money on per-token pricing to gain adoption. Their infrastructure is also built on cheaper hardware, and they don’t carry the same brand premium as OpenAI.

How often should I re-evaluate my LLM choice?

Every 3-6 months. Prices and performance change faster than ever. A model that was exceptional in January might be obsolete by June. Set up automated tracking of cost-per-task and accuracy on your key workflows. Don’t wait for a budget cycle. Let data drive your decisions.
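A minimal sketch of what that tracking could compute is shown below. The `TaskRun` record, the `summarize` helper, and all the sample numbers are hypothetical; the point is only that cost per task depends on tokens consumed as well as the per-token price.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskRun:
    """One logged task: tokens billed and whether the output passed your check."""
    tokens_used: int
    correct: bool


def summarize(runs: List[TaskRun], price_per_million: float) -> dict:
    """Accuracy and average dollar cost per task for one model's logged runs."""
    if not runs:
        raise ValueError("no runs to summarize")
    total_tokens = sum(r.tokens_used for r in runs)
    total_cost = total_tokens / 1_000_000 * price_per_million
    return {
        "accuracy": sum(r.correct for r in runs) / len(runs),
        "cost_per_task": total_cost / len(runs),
    }


# Hypothetical logs: a $6 model that burns 10x the tokens of a $15 model.
verbose_cheap = [TaskRun(tokens_used=5000, correct=True) for _ in range(10)]
lean_pricey = [TaskRun(tokens_used=500, correct=True) for _ in range(10)]

summary_cheap = summarize(verbose_cheap, price_per_million=6.00)
summary_pricey = summarize(lean_pricey, price_per_million=15.00)
print(summary_cheap)
print(summary_pricey)
```

In this made-up log the nominally cheaper model costs four times as much per task, which is the kind of gap a per-token price comparison hides. Re-running the summary on fresh logs each review cycle gives you the numbers to compare against current list prices.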