Tag: AI fairness

Tamara Weed, Mar 12, 2026

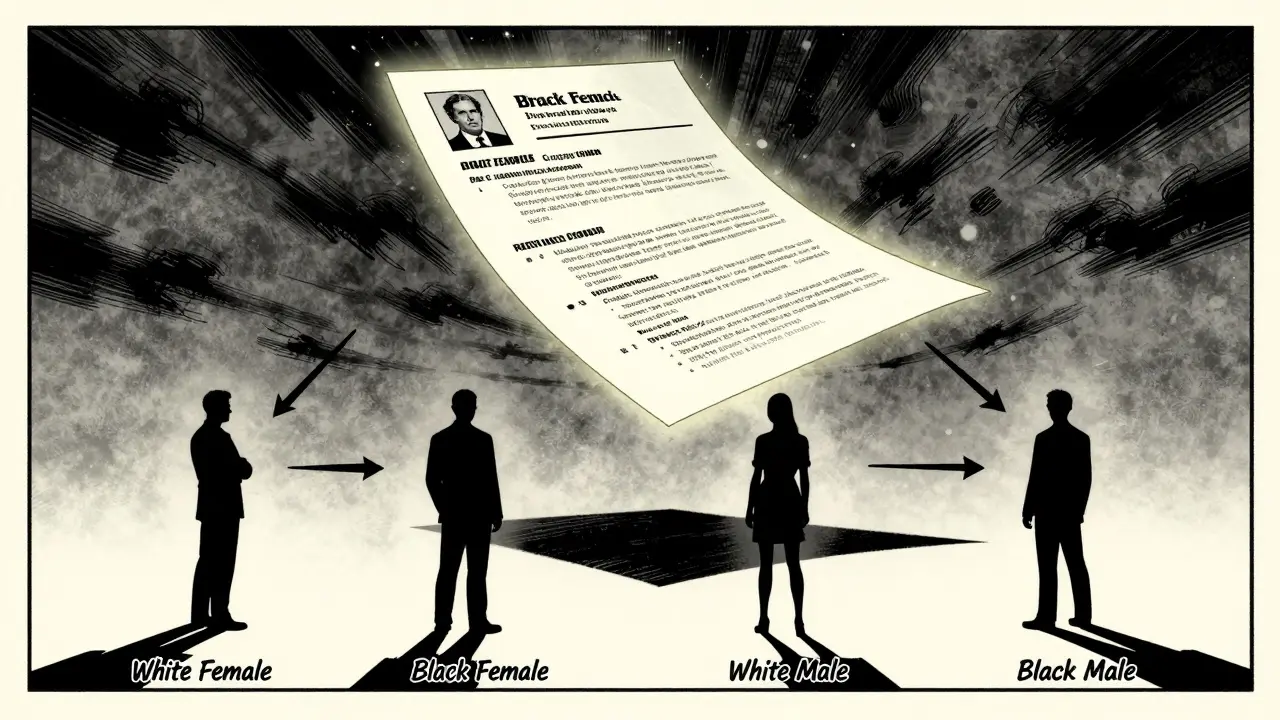

Large language models show measurable gender and racial bias in hiring and decision-making, favoring white women while penalizing Black men. Real-world testing reveals persistent, intersectional bias that current debiasing methods fail to fix.

Tamara Weed, Feb 9, 2026

Bias drift in production LLMs can lead to discrimination, legal risk, and brand damage. Learn how to monitor key fairness metrics, choose the right tools, and avoid common pitfalls in 2026.
