
Model Access Controls: Who Can Use Which LLMs and Why

Tamara Weed, Jan 29, 2026

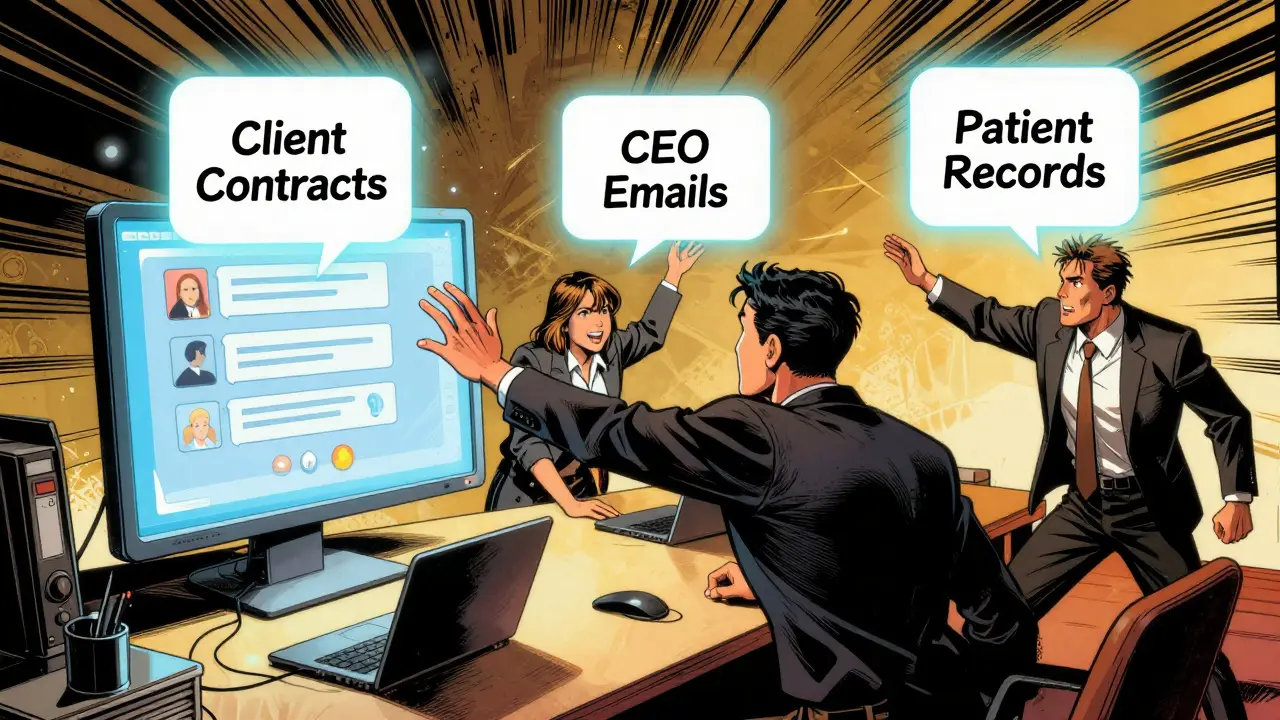

Model access controls determine who can use which LLMs and what they can ask for. Without them, companies risk data leaks, compliance violations, and security breaches. Learn how role-based access control (RBAC), CBAC, and AI guardrails protect sensitive information.
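At its simplest, RBAC for model access is a deny-by-default lookup from a user's roles to the models those roles may call. The sketch below is illustrative only: the role names (`analyst`, `engineer`, `admin`), the model identifiers, and the `can_use_model` helper are all hypothetical and do not come from any particular platform.

```python
# Hypothetical role-to-model allowlist; real deployments would load this
# from a policy store rather than hard-coding it.
ROLE_MODEL_ALLOWLIST: dict[str, frozenset[str]] = {
    "analyst": frozenset({"general-chat-small"}),
    "engineer": frozenset({"general-chat-small", "code-assistant"}),
    "admin": frozenset({"general-chat-small", "code-assistant", "general-chat-large"}),
}


def can_use_model(roles: set[str], model: str) -> bool:
    """Return True if any of the user's roles grants access to the model.

    Unknown roles map to an empty set, so access is denied by default.
    """
    return any(model in ROLE_MODEL_ALLOWLIST.get(role, frozenset()) for role in roles)


print(can_use_model({"analyst"}, "code-assistant"))              # False
print(can_use_model({"analyst", "engineer"}, "code-assistant"))  # True
print(can_use_model({"contractor"}, "general-chat-small"))       # False: unknown role
```

Denying by default means a new or misspelled role gets no model access until someone grants it explicitly. Controlling *what* users can ask for, as context-aware checks and guardrails do, needs additional checks on the prompt itself and is not covered by this allowlist.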
