There is a growing gap between how smart we want our AI to be and how much we can afford to spend running it. For years, the rule was simple: if you wanted a smarter model, you built a bigger one. But as models hit hundreds of billions of parameters, that approach stopped making financial sense. This is where Mixture-of-Experts (MoE) architectures come in. They offer a way to build massive models without paying massive compute costs on every single query.

By May 2026, MoE has moved from academic curiosity to industry standard. Models like DeepSeek-v3 and Grok are proving that you don't need to activate every parameter to get high-quality results. You just need the right ones. But this efficiency comes with tradeoffs. Understanding these tradeoffs is crucial for anyone building or deploying large language models today.

How Mixture-of-Experts Works

In a traditional dense model, every token you input passes through every neuron in the network. It’s like asking an entire team of specialists to review every single sentence you write. It ensures nothing gets missed, but it’s incredibly slow and expensive. MoE changes this dynamic by introducing sparsity.

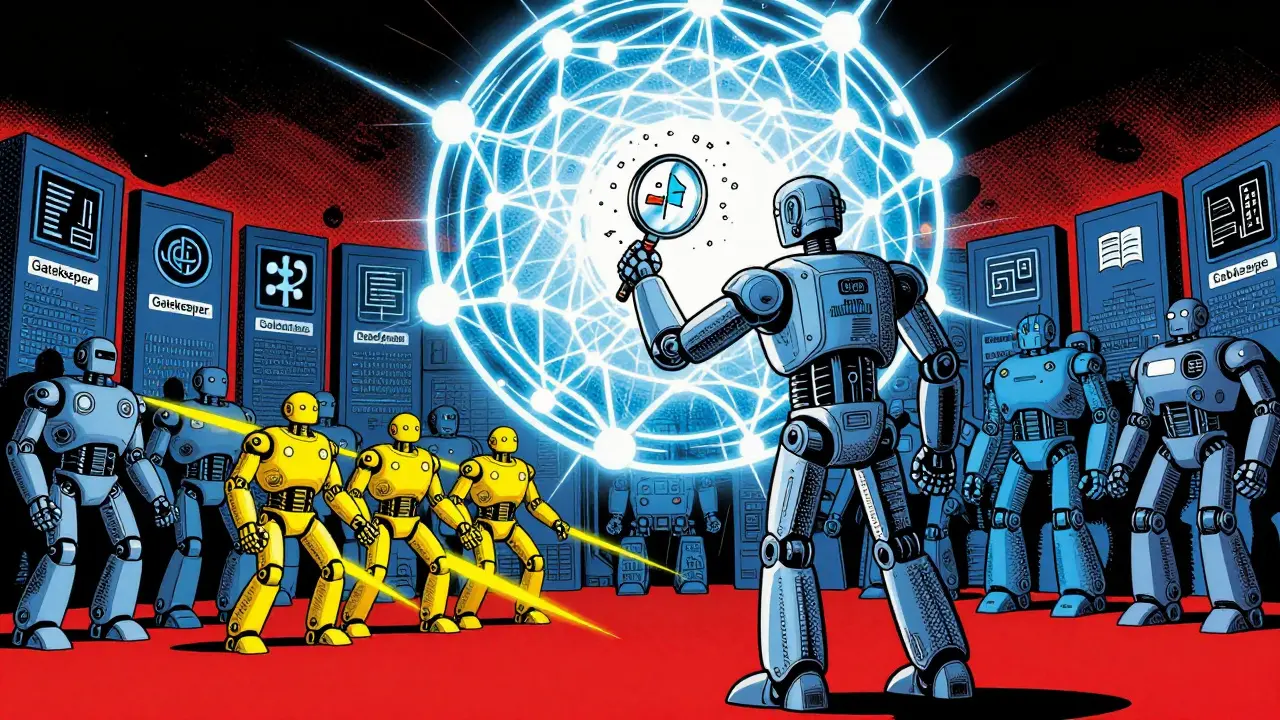

Imagine instead that you have a large pool of specialists (the experts) and a gatekeeper. When you send a token to the model, the gatekeeper decides which specialist is best suited for that specific piece of information. Maybe one expert is great at coding, another at creative writing, and a third at mathematical reasoning. Only those selected experts process the token. The rest stay idle.

This mechanism relies on four core components:

- Expert Subnetworks: Smaller neural networks specialized in different tasks or domains.

- Gating Network: A learned mechanism that scores each expert and selects the top k experts for a given token.

- Sparse Activation: Only the selected experts perform computation, drastically reducing FLOPs (floating-point operations).

- Aggregation: The outputs from the active experts are combined to form the final output for the token.
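The four components above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production layer: the class name `TinyMoELayer`, the two-matrix ReLU experts, the random weights, and the choice to apply softmax over only the selected experts' scores are all assumptions made for the example.

```python
import math
import random

def matvec(w, x):
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def make_matrix(rng, rows, cols, scale=0.1):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

class TinyMoELayer:
    """Hypothetical toy layer: a gate plus a pool of small feed-forward experts."""

    def __init__(self, d_model, d_hidden, n_experts, top_k, seed=0):
        rng = random.Random(seed)
        self.top_k = top_k
        # Gating network: one score per expert for each token.
        self.gate = make_matrix(rng, n_experts, d_model)
        # Expert subnetworks: each is (input projection, output projection).
        self.experts = [
            (make_matrix(rng, d_hidden, d_model), make_matrix(rng, d_model, d_hidden))
            for _ in range(n_experts)
        ]

    def route(self, token):
        """Score every expert, keep the top k, and normalize their weights."""
        scores = matvec(self.gate, token)
        ranked = sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)
        chosen = ranked[: self.top_k]
        weights = softmax([scores[e] for e in chosen])
        return chosen, weights

    def forward(self, token):
        chosen, weights = self.route(token)
        output = [0.0] * len(token)
        # Sparse activation: only the chosen experts do any work.
        for e, w in zip(chosen, weights):
            w_in, w_out = self.experts[e]
            hidden = [max(0.0, h) for h in matvec(w_in, token)]
            expert_out = matvec(w_out, hidden)
            # Aggregation: weighted sum of the active experts' outputs.
            output = [o + w * y for o, y in zip(output, expert_out)]
        return output, chosen

layer = TinyMoELayer(d_model=8, d_hidden=16, n_experts=4, top_k=2)
token = [0.1 * i for i in range(8)]
out, chosen = layer.forward(token)
print("experts used:", chosen)
```

Real implementations batch tokens per expert rather than looping token by token, but the control flow is the same: score, select, compute, combine.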

The result is a model that looks huge on paper but acts small in practice. Take Mixtral 8x7B, for example. It contains about 47 billion total parameters, but only about 13 billion are active for any given token. That means you get the capacity of a large model with per-token compute closer to that of a smaller one.
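To see where the 47 billion and 13 billion figures come from, here is a rough parameter count. The hyperparameters below are the ones published for Mixtral 8x7B as best I recall them, so treat them as assumptions and check them against the model card; small normalization weights are ignored.

```python
# Assumed Mixtral 8x7B hyperparameters (verify against the official config).
D_MODEL = 4096
D_FFN = 14336
N_LAYERS = 32
N_HEADS = 32
N_KV_HEADS = 8
HEAD_DIM = 128
VOCAB = 32000
N_EXPERTS = 8
TOP_K = 2

def mixtral_like_param_counts():
    """Return (total, active) parameter counts, ignoring small norm weights."""
    # Each expert is a gated feed-forward block with three weight matrices.
    expert = 3 * D_MODEL * D_FFN
    # Grouped-query attention: Q and O are full width, K and V are narrower.
    attention = (2 * D_MODEL * (N_HEADS * HEAD_DIM)
                 + 2 * D_MODEL * (N_KV_HEADS * HEAD_DIM))
    router = D_MODEL * N_EXPERTS
    embeddings = 2 * VOCAB * D_MODEL  # input embedding plus output projection

    # Attention, routers, and embeddings are shared by every token.
    shared = N_LAYERS * (attention + router) + embeddings
    total = shared + N_LAYERS * N_EXPERTS * expert
    active = shared + N_LAYERS * TOP_K * expert
    return total, active

total, active = mixtral_like_param_counts()
print(f"total:  {total / 1e9:.1f}B")
print(f"active: {active / 1e9:.1f}B")
```

Note that the total is below 8 x 7 = 56 billion because only the feed-forward blocks are replicated per expert; attention and embeddings are shared.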

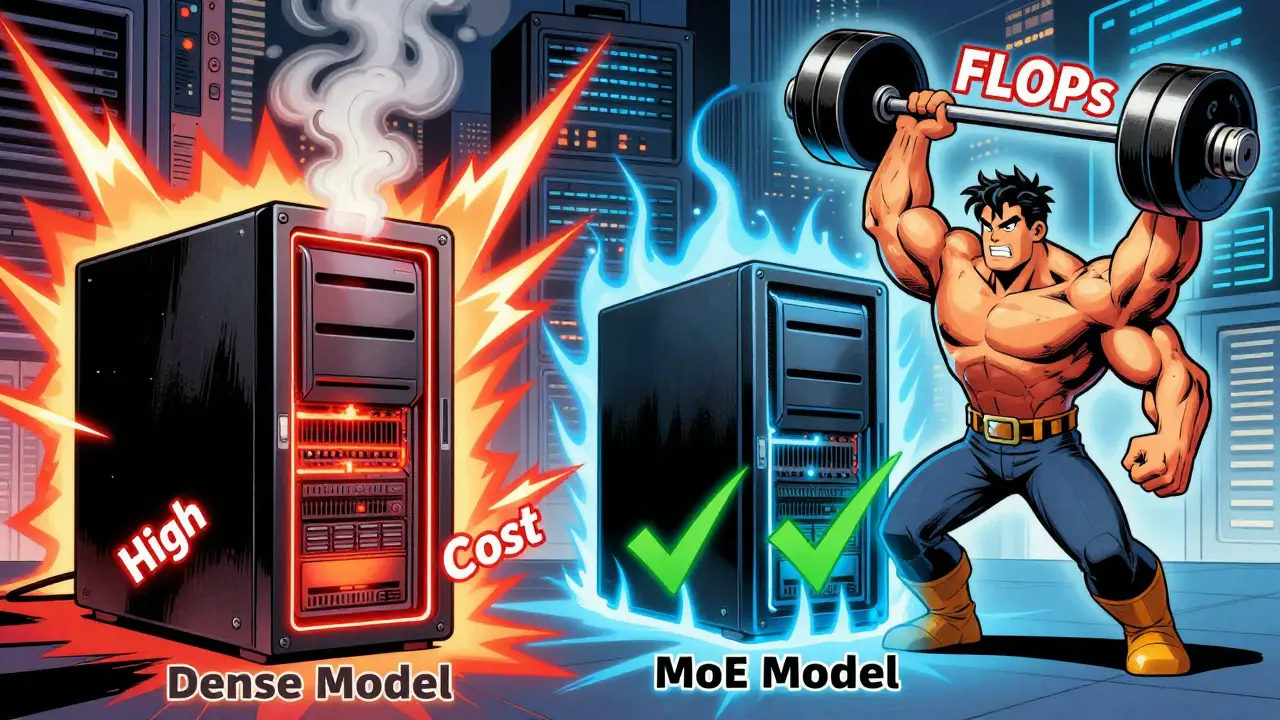

The Cost Advantage: Why Compute Savings Matter

The primary driver for adopting MoE is cost efficiency. Empirical studies from 2025 show that MoE models deliver 4 to 16 times compute savings at matched perplexity compared to dense models. This isn’t just a marginal improvement; it’s a fundamental shift in economics.

During training, the Switch Transformer demonstrated up to a 7x pretraining speedup using an MoE architecture. More recently, DeepSeek-v3 used an FP8 mixed-precision training framework, and its developers reported a training cost of approximately $5.6 million, a fraction of what comparable dense models would cost. Training successfully in 8-bit precision showed that high precision isn’t always necessary for high performance.

At inference time, the benefits are even more direct. Because fewer parameters are activated, latency drops, and throughput increases. If you are running a service where thousands of users query your model simultaneously, MoE allows you to handle higher batch sizes without crushing your hardware. You aren’t paying for unused brain power.

| Feature | Dense Model | MoE Model |
|---|---|---|
| Parameter Usage | All parameters active per token | Subset of experts active per token |
| Compute Cost | High (scales linearly with size) | Low (sparse activation) |
| Memory Storage | Total parameters stored | Total parameters stored (often larger than dense) |
| Training Complexity | Standard optimization | Requires load balancing and routing stability |
| Scalability | Limited by compute budget | Can scale to trillions of parameters |

The Hidden Costs: Memory and Routing Overhead

If MoE is so efficient, why isn’t everyone using it? The answer lies in the hidden costs. While computation is sparse, memory is not. In a MoE model, you still need to store all the parameters for all the experts in VRAM, even if you only use a few at a time.

Consider a model with eight experts, each having 7 billion parameters. That’s 56 billion parameters total. Even if only two experts (14 billion parameters) are active for a given token, your GPU needs to hold the full 56 billion in memory. This creates a bottleneck: you might save on compute cycles, but you’re forced to buy more expensive, memory-heavy hardware to fit the model.
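As a back-of-the-envelope check on this example, the sketch below converts parameter counts into weight memory. It assumes 16-bit weights (2 bytes per parameter) and top-2 routing, neither of which the example above specifies, and it ignores activations and the KV cache.

```python
def weight_memory_gb(n_params, bytes_per_param=2):
    """Memory needed just to hold the weights, in gigabytes (10**9 bytes)."""
    return n_params * bytes_per_param / 1e9

n_experts = 8
params_per_expert = 7e9
active_experts = 2  # assumed top-2 routing

stored = weight_memory_gb(n_experts * params_per_expert)
touched = weight_memory_gb(active_experts * params_per_expert)
print(f"must fit in memory: {stored:.0f} GB")
print(f"used per token:     {touched:.0f} GB")
```

Under these assumptions the hardware has to hold four times more weight data than any single token actually uses.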

Then there’s the routing overhead. The gating network must make a decision for every single token. This adds computational complexity. For very small models or simple tasks, the cost of deciding which expert to use might actually outweigh the savings from using a smaller expert. It’s like spending ten minutes choosing a restaurant just to eat a five-minute snack.

Additionally, distributed training introduces communication costs. When tokens are routed to different experts located on different machines, data must move across the network. This can create bottlenecks that don’t exist in dense models, where all data stays local to the processing unit.
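A rough estimate gives a feel for the size of this traffic. The function below is a simplified model, not a measurement: it assumes routing is uniform across devices, that activations are 16-bit, and that each token copy crosses the network once on the way to its expert and once on the way back. The function name and the batch dimensions are hypothetical.

```python
def all_to_all_bytes(n_tokens, d_model, top_k, n_devices, bytes_per_value=2):
    """Estimate bytes crossing the network for one MoE layer's forward pass.

    Assumes uniform routing, so a fraction (1 - 1/n_devices) of token copies
    land on a remote device, and counts both the dispatch and the combine step.
    """
    copies = n_tokens * top_k
    remote_fraction = 1 - 1 / n_devices
    one_way = copies * d_model * bytes_per_value * remote_fraction
    return 2 * one_way

# Hypothetical batch: 8192 tokens, hidden size 4096, top-2 routing, 8 devices.
traffic = all_to_all_bytes(n_tokens=8192, d_model=4096, top_k=2, n_devices=8)
print(f"{traffic / 1e6:.0f} MB per MoE layer")
```

Multiply that by the number of MoE layers and again for the backward pass, and it becomes clear why interconnect bandwidth matters as much as raw FLOPs in expert-parallel training.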

Quality Tradeoffs: Specialization vs. Generalization

One of the promises of MoE is that experts will specialize, leading to better performance in specific domains. And often, they do. Different experts can focus on different languages, coding styles, or factual domains. However, this specialization can lead to fragmentation.

If the gating mechanism isn’t calibrated correctly, some experts might become “dead” (never selected), while others become overloaded. This imbalance hurts the model’s overall quality. Recent research emphasizes the need for accurate calibration and reliable inference aggregation. Systems like HyperMoE attempt to solve this by aggregating intermediate signals from unselected experts, refining predictions without adding runtime cost.
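One widely used remedy for this imbalance, which the discussion above does not name, is the auxiliary load-balancing loss from the Switch Transformer paper: `alpha * N * sum(f_i * P_i)`, where `f_i` is the fraction of tokens sent to expert `i` and `P_i` is the mean router probability for that expert. The sketch below computes it for top-1 routing and also reports dead experts; the function name and the toy probability tables are made up for illustration.

```python
from collections import Counter

def load_balance_stats(router_probs, alpha=0.01):
    """router_probs: one list of per-expert probabilities for each token.

    Returns (aux_loss, dead_experts) using top-1 assignment.
    """
    n_tokens = len(router_probs)
    n_experts = len(router_probs[0])
    # Which expert each token would be sent to under top-1 routing.
    picks = Counter(max(range(n_experts), key=lambda e: p[e]) for p in router_probs)
    # f[i]: fraction of tokens dispatched to expert i.
    f = [picks[e] / n_tokens for e in range(n_experts)]
    # P[i]: mean router probability assigned to expert i.
    P = [sum(p[e] for p in router_probs) / n_tokens for e in range(n_experts)]
    aux_loss = alpha * n_experts * sum(fi * pi for fi, pi in zip(f, P))
    dead = [e for e in range(n_experts) if picks[e] == 0]
    return aux_loss, dead

balanced = [[0.7, 0.1, 0.1, 0.1], [0.1, 0.7, 0.1, 0.1],
            [0.1, 0.1, 0.7, 0.1], [0.1, 0.1, 0.1, 0.7]]
collapsed = [[0.7, 0.1, 0.1, 0.1]] * 4  # every token prefers expert 0

print(load_balance_stats(balanced))
print(load_balance_stats(collapsed))
```

The loss is lowest when tokens are spread evenly and grows as routing collapses onto a few experts, so adding it to the training objective nudges the gate away from leaving experts unused.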

Furthermore, fine-tuning MoE models is trickier than dense ones. Domain adaptation can expose optimization mismatches. You might find that a MoE model performs worse than a dense model when trying to adapt it to a niche task because the experts haven’t learned to collaborate effectively on that new data. Sample efficiency can be weaker, meaning you need more data to achieve the same level of specialization.

Recent Advances: Compression and Quantization

To address the memory and routing challenges, researchers have developed sophisticated compression techniques. One notable advancement is Expert-Selection Aware Compression (EAC-MoE), published in August 2025 by Chen et al. This method couples quantization with router calibration to prevent “expert-shift,” a phenomenon where the distribution of selected experts changes unpredictably after compression.

EAC-MoE allows for the pruning of low-frequency experts based on observed routing patterns. The result? A reduction in memory usage by 4 to 5 times and a throughput improvement of 1.5 to 1.7 times, all while keeping accuracy losses below 1.25 percent. This makes MoE viable on hardware that previously couldn’t support such large parameter counts.
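The general idea of pruning by routing frequency can be illustrated with a toy example. To be clear, this is not the EAC-MoE algorithm, only a simplified sketch of frequency-based pruning: the function names, the 5 percent threshold, and the calibration log are all hypothetical.

```python
from collections import Counter

def prune_rare_experts(routing_log, n_experts, min_share=0.05):
    """routing_log: expert indices chosen for calibration tokens (flattened).

    Returns the list of experts to keep. An expert is dropped when it
    handled less than `min_share` of all routed token slots.
    """
    counts = Counter(routing_log)
    total = len(routing_log)
    return [e for e in range(n_experts) if counts[e] / total >= min_share]

def route_with_pruning(scores, kept, top_k=2):
    """Pick the top_k highest-scoring experts among those that survived pruning."""
    return sorted(kept, key=lambda e: scores[e], reverse=True)[:top_k]

# Hypothetical calibration log for a 4-expert layer: expert 3 is almost never chosen.
log = [0] * 40 + [1] * 35 + [2] * 24 + [3] * 1
kept = prune_rare_experts(log, n_experts=4)
print("kept experts:", kept)

# Even when the pruned expert scores highest, routing falls back to the survivors.
print("routed to:", route_with_pruning([0.1, 0.5, 0.2, 0.9], kept))
```

Dropping an expert frees its weights from memory, but it also changes which experts some tokens reach, which is why a method in this space needs to recalibrate the router rather than prune blindly.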

Another key development is the integration of MoE with improved attention mechanisms. DeepSeek-v3 combines MoE sparsity with Multi-head Latent Attention (MLA), which was introduced in its predecessor, DeepSeek-V2. MLA uses low-rank joint projection to represent key and value vectors with smaller latent vectors. DeepSeek-V2 reported a KV cache reduction of over 93 percent compared to the earlier 67 billion parameter dense DeepSeek model. Less cache means faster inference and lower memory pressure.
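The effect of caching a latent vector instead of full keys and values can be illustrated with simple counting. The dimensions below are hypothetical and chosen only to show the arithmetic; they do not reproduce any published figure, and the sketch omits the small extra positional component that a real MLA implementation also caches.

```python
def kv_cache_values_per_token(n_layers, n_kv_heads, head_dim):
    """Standard attention caches one key and one value vector per KV head per layer."""
    return n_layers * 2 * n_kv_heads * head_dim

def latent_cache_values_per_token(n_layers, d_latent):
    """Latent-style caching stores one compressed vector per layer instead."""
    return n_layers * d_latent

# Hypothetical dimensions chosen for illustration only.
standard = kv_cache_values_per_token(n_layers=32, n_kv_heads=16, head_dim=128)
latent = latent_cache_values_per_token(n_layers=32, d_latent=512)
reduction = 1 - latent / standard
print(f"{standard} vs {latent} cached values per token ({reduction:.1%} smaller)")
```

Because the cache grows with every token in the context, a per-token saving like this compounds quickly at long sequence lengths and large batch sizes.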

Who Should Use MoE?

MoE is not a silver bullet. It requires careful tuning and robust infrastructure. Here is a quick guide to help you decide:

- Use MoE if: You are scaling to trillion-parameter models, need high throughput for large batch sizes, or have significant memory resources available. Organizations with strong distributed training expertise will benefit most.

- Avoid MoE if: You are working with limited memory, need rapid prototyping, or are focusing on small-scale tasks where routing overhead dominates. Dense models remain simpler and more predictable for these scenarios.

For most enterprises, the future lies in hybrid approaches: using MoE for the heavy lifting in general-purpose models while maintaining dense heads for specialized, low-latency tasks. As tools like EAC-MoE mature, the barrier to entry will lower, making this architecture accessible to a broader range of developers.

What is the main difference between MoE and dense models?

In dense models, every parameter is used for every token. In MoE models, only a subset of the parameters (the selected experts) is activated for each token, reducing compute cost but requiring more memory to store the full model.

Does MoE always improve performance?

Not necessarily. MoE excels in scaling capacity and reducing compute costs for large models. However, it can suffer from routing overhead, memory constraints, and training instability, which may hurt performance in small-scale or specialized tasks.

Why is memory a bottleneck for MoE?

Even though only a few experts are active, the entire set of expert parameters must be loaded into VRAM. This means MoE models often require more memory than dense models with comparable per-token compute.

What is Expert-Selection Aware Compression (EAC-MoE)?

EAC-MoE is a technique that compresses MoE models by pruning low-frequency experts and calibrating routers to prevent performance degradation. It reduces memory usage by up to 5x with minimal accuracy loss.

Is MoE suitable for fine-tuning?

Fine-tuning MoE models is more complex due to potential optimization mismatches and load balancing issues. It often requires more data and careful hyperparameter tuning compared to dense models.