You might think that giving an AI model more information is always better. If you can feed it a whole book instead of a chapter, shouldn't it give you a smarter answer? It sounds logical. But in the world of large language models (LLMs), that logic often breaks down. In fact, dumping massive amounts of text into a model’s context window can actually make its answers worse.

This isn’t just a minor glitch; it’s a fundamental limit in how these systems work. As we move into 2026, with vendors advertising models that handle millions of tokens, there is a growing gap between what models *can* process and what they can *use* effectively. The key to getting good results isn’t throwing more data at the wall; it’s understanding where the sweet spot lies.

The Myth of Infinite Context

We’ve seen headlines about models like Llama 4 Scout boasting context lengths of up to 10 million tokens. That’s roughly 15,000 pages of text or an entire software codebase. On paper, this seems like a superpower. You could theoretically ask the model to find a specific bug in a massive repository without breaking the code into chunks.
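The page estimate can be reproduced with back-of-the-envelope arithmetic. The sketch below assumes roughly 0.75 English words per token and 500 words per page; both ratios are illustrative assumptions and vary with the tokenizer and the page layout.

```python
# Rough conversion from tokens to pages.
# Both ratios are assumptions for illustration, not fixed constants.
WORDS_PER_TOKEN = 0.75  # assumed average for English text
WORDS_PER_PAGE = 500    # assumed dense, single-spaced page


def tokens_to_pages(tokens: int) -> float:
    """Estimate how many pages a given token count corresponds to."""
    return tokens * WORDS_PER_TOKEN / WORDS_PER_PAGE


print(tokens_to_pages(10_000_000))  # 15000.0
```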

However, capacity does not equal capability. Just because a model *accepts* 10 million tokens doesn’t mean it processes them all with equal precision. Research shows that simply increasing context length does not proportionally improve output quality. In many cases, performance degrades as the context grows larger. This happens even when the extra information is relevant and helpful.

The problem stems from a tension between capacity and performance. Larger context windows allow for more complex inputs, but they also introduce noise. The model has to sift through a haystack to find the needle, and the bigger the haystack, the harder it becomes to keep the needle in focus. This leads us to a critical concept: the difference between maximum context length and effective context length.

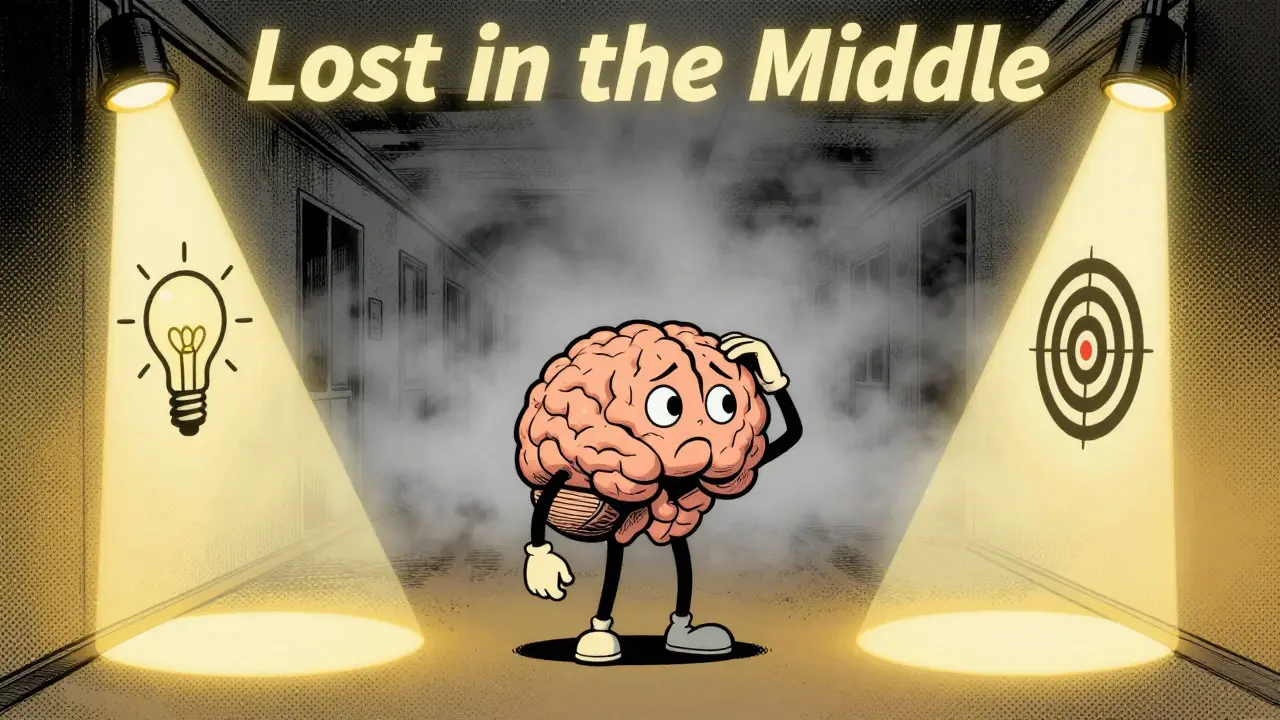

Effective Context vs. Maximum Capacity

Effective context length is the input length beyond which a model’s performance begins to drop. This number is often significantly shorter than the marketing claims suggest. For example, while some models advertise support for 128k or even 1 million tokens, their effective context for complex reasoning tasks might be much lower.

Studies examining long-context performance across retrieval, variable tracking, and question-answering tasks reveal that models saturate quickly. When tested on datasets like Natural Questions, different architectures hit their limits at different points:

- GPT-4-Turbo and Claude-3-Sonnet tend to experience saturation around 16k tokens.

- Mixtral-Instruct hits its ceiling closer to 4k tokens.

- DBRX-Instruct performs well up to 8k tokens before declining.

This means that if you are using GPT-4-Turbo for a task like this, feeding it 100k tokens of background info is unlikely to help and may degrade the answer. The optimal range for many high-quality outputs sits between 2k and 32k tokens, depending on the specific architecture and task.

The "Lost in the Middle" Phenomenon

One of the most frustrating issues users face is the "Lost in the Middle" effect. Language models struggle to retrieve information positioned in the middle of long input contexts. They remember the beginning and the end quite well, but the middle tends to blur together.

Controlled experiments with models like MPT-30B-Instruct, LongChat-13B, and earlier versions of GPT and Claude demonstrated this clearly. When prompted with longer contexts, accuracy drops for facts buried in the center of the document. This creates a trade-off: providing more information helps cover more ground, but it increases the cognitive load on the model, potentially decreasing accuracy for specific queries.

If you are building a system that relies on extracting specific details from long documents, you need to account for this bias. Placing critical instructions or key data at the very start or very end of your prompt often yields better results than scattering them throughout the text.
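One way to act on this is to reorder retrieved passages so the strongest ones sit at the edges of the prompt and the weakest end up in the middle. The following is a minimal sketch, assuming the input list is already sorted from most to least relevant; the function name `reorder_for_edges` is hypothetical and not part of any library.

```python
def reorder_for_edges(docs_by_relevance):
    """Place the most relevant items at the start and end, weakest in the middle.

    `docs_by_relevance` is assumed to be sorted from most to least relevant.
    """
    front, back = [], []
    for rank, doc in enumerate(docs_by_relevance):
        # Alternate: even ranks fill the front, odd ranks fill the back.
        (front if rank % 2 == 0 else back).append(doc)
    # Reverse the back half so the second-best item lands at the very end.
    return front + back[::-1]


ranked = ["A", "B", "C", "D", "E"]  # "A" is the most relevant
print(reorder_for_edges(ranked))  # ['A', 'C', 'E', 'D', 'B']
```

Whether this helps depends on the model and task, so it is worth measuring against a plain relevance-ordered prompt.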

Attention Dilution and Noise

The technical reason behind these failures is often called attention dilution. In transformer-based models, attention mechanisms weigh the importance of different words in the input sequence. As the context length increases, the "attention budget" is spread thinner across more tokens.
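A toy calculation gives some intuition for the "attention budget" idea. The sketch below assumes a single softmax over one relevant token, whose score exceeds every other token's by a fixed margin, plus filler tokens that all score zero. Real attention is multi-headed and learned, so this illustrates the arithmetic only and is not a model of any specific architecture.

```python
import math


def weight_on_relevant_token(n_tokens: int, margin: float) -> float:
    """Softmax weight of one token whose score exceeds all others by `margin`.

    Toy setup (an assumption): one relevant token with score `margin`
    and n_tokens - 1 filler tokens with score 0.
    """
    relevant = math.exp(margin)
    filler = n_tokens - 1  # each filler token contributes exp(0) = 1
    return relevant / (relevant + filler)


# The same margin buys less and less attention as the context grows.
for n in (1_000, 10_000, 100_000, 1_000_000):
    print(n, round(weight_on_relevant_token(n, margin=8.0), 4))
```

With the margin held fixed, the share of attention on the relevant token shrinks as filler tokens are added, which is the dilution effect in miniature.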

Even if the retrieval mechanism is perfect, meaning the right information is present, the sheer volume of context can hurt reasoning, coding, and question-answering. Findings published at EMNLP 2025 reported that context length alone acts as a negative factor, independent of whether the extra information is distracting. Performance degrades with the scale of the input itself.

This shifts the industry focus from celebrating raw input capacity to scrutinizing "reasoning-over-context" capabilities. It is relatively easy to design a model that accepts 10 million tokens. It is much harder to ensure that model can reliably retrieve a specific fact from that mass without hallucinating or suffering from latency spikes.

Impact on RAG and Production Systems

For enterprises using Retrieval-Augmented Generation (RAG), these limitations have real-world consequences. A common mistake in production systems is adding more documents to the context window under the assumption that more sources equal better answers. Often, the opposite happens.

RAG applications frequently observe performance saturation or degradation as more documents are added. The model starts to contradict itself or miss key points because the signal-to-noise ratio drops. Similarly, long chain-of-thought sequences don’t always improve output. Sometimes, forcing a model to generate excessive reasoning steps hurts performance rather than helping it.

To optimize this, organizations must carefully engineer both context selection and presentation. Instead of dumping raw data, use summarization techniques to condense information before feeding it to the LLM. Keep the context tight, relevant, and structured.
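Context selection can be sketched as a budgeted filter over retrieved documents. The example below is a minimal illustration, assuming each document already has a relevance score from the retriever. The `select_context` helper, the `min_score` threshold, and the whitespace-based token counter are all hypothetical stand-ins; a real system would use the model's own tokenizer.

```python
def select_context(scored_docs, token_budget, min_score=0.0,
                   count_tokens=lambda text: len(text.split())):
    """Pick the highest-scoring documents that fit within `token_budget`.

    `scored_docs` is a list of (score, text) pairs. The default token counter
    is a whitespace split, a crude stand-in for a real tokenizer.
    """
    selected, used = [], 0
    ranked = sorted(scored_docs, key=lambda pair: pair[0], reverse=True)
    for score, text in ranked:
        if score < min_score:
            break  # everything after this is even less relevant
        cost = count_tokens(text)
        if used + cost > token_budget:
            continue  # skip documents that would exceed the budget
        selected.append(text)
        used += cost
    return selected, used


docs = [
    (0.9, "alpha beta gamma"),
    (0.2, "delta epsilon"),
    (0.7, "zeta eta theta iota"),
]
print(select_context(docs, token_budget=5, min_score=0.5))
# (['alpha beta gamma'], 3)
```

The relevance floor matters as much as the budget: without it, low-scoring documents would be used to fill leftover space, which is the opposite of keeping the context tight.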

| Model | Claimed Max Context | Effective Saturation Point | Best Use Case |
|---|---|---|---|
| GPT-4-Turbo | 128k+ | ~16k tokens | Complex reasoning, coding |
| Claude-3-Sonnet | 200k+ | ~16k tokens | Document analysis, writing |
| Mixtral-Instruct | 32k | ~4k tokens | Short-form generation |
| DBRX-Instruct | 32k | ~8k tokens | Medium-length tasks |

Context Engineering Strategies

Since we cannot rely on infinite context, we must turn to context engineering. This involves structuring your prompts to maximize the model’s ability to retain and use information. Here are practical steps to improve output quality:

- Prioritize Critical Information: Place the most important instructions or data at the beginning or end of the prompt to avoid the "Lost in the Middle" effect.

- Summarize Before Injecting: If you have a 50-page report, summarize it into key bullet points first. Feed the summary, not the raw text, unless the model needs to quote directly.

- Chunk Strategically: Break large tasks into smaller sub-tasks. Process each chunk separately and then synthesize the results. This keeps the context window clean and focused.

- Remove Irrelevant Data: Aggressively filter out noise. Every token that doesn’t contribute to the answer is a potential distraction.

- Test Effective Length: Don’t assume the max context is safe. Test your specific task with varying context sizes (e.g., 2k, 8k, 16k) to find the point where performance dips.
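The last step in the list above can be sketched as a small analysis over your own evaluation results. The example assumes you have already measured task accuracy at several context sizes. The numbers shown are hypothetical placeholders, not benchmark results, and `find_effective_length` is an illustrative helper rather than an existing tool.

```python
def find_effective_length(accuracy_by_length, tolerance=0.02):
    """Return the last context length before accuracy clearly dips.

    Walks lengths in ascending order and stops at the first one whose
    accuracy falls more than `tolerance` below the best score seen so far.
    """
    best = float("-inf")
    effective = None
    for length in sorted(accuracy_by_length):
        accuracy = accuracy_by_length[length]
        if accuracy < best - tolerance:
            break  # first clear dip: the previous length was the last safe one
        best = max(best, accuracy)
        effective = length
    return effective


# Hypothetical measurements: context size in tokens -> task accuracy.
results = {2_000: 0.71, 8_000: 0.78, 16_000: 0.80, 32_000: 0.74, 64_000: 0.69}
print(find_effective_length(results))  # 16000
```

The tolerance guards against treating run-to-run noise as a real drop. Set it from the variance you observe when repeating the same evaluation.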

Remember, the goal is not to fill the context window. The goal is to provide the *right* amount of context for the specific task. For simple questions, a few hundred tokens may be enough. For complex coding tasks, you might need several thousand. But rarely do you need millions.

Future Directions in Long-Context Models

The industry is moving toward more nuanced approaches. Future research focuses on improving attention mechanisms to reduce dilution and developing architectures that can handle sparse attention more efficiently. We may see models that dynamically adjust their context usage based on the complexity of the query.

Until then, developers and users must treat context length as an optimization problem, not a feature flag. Understanding the limits of effective context will save time, reduce costs, and produce higher-quality outputs. The era of "more is better" is ending; the era of "precision matters" is here.

What is the ideal context length for most LLM tasks?

For most reasoning and coding tasks, the ideal context length is roughly between 2k and 32k tokens, depending on the model and task. Beyond this range, many models begin to suffer from attention dilution and performance degradation, even if they technically support longer inputs.

Why does performance drop with longer context?

Performance drops due to attention dilution, where the model's focus is spread too thin across many tokens. Additionally, the "Lost in the Middle" phenomenon causes models to ignore information placed in the center of long inputs.

How does this affect RAG systems?

In RAG systems, adding too many documents to the context can degrade answer quality. It is better to retrieve fewer, highly relevant documents and summarize them rather than injecting large volumes of raw text.

What is effective context length?

Effective context length is the maximum amount of input a model can process before its performance begins to decline. This is often much shorter than the model's advertised maximum context window.

Can I fix poor performance by just using a newer model?

Not necessarily. While newer models may have slightly higher effective context limits, the fundamental issue of attention dilution persists. Proper context engineering is required regardless of the model version.