To get a handle on how this works, we need to look at the three heavy hitters: masked modeling, next-token prediction, and denoising. While they all aim to create a "foundational" understanding, they do it using very different mathematical strategies and architectural setups. Choosing the right one for your project can be the difference between a chatbot that feels human and a search tool that completely misses the point of your query.

The Logic of Masked Modeling: Filling in the Blanks

Masked Modeling is a pretraining strategy where random parts of the input are hidden and the model tries to predict the missing pieces using the surrounding context. It was brought to the mainstream in 2018 by Google researchers with the release of BERT. Unlike models that read text in one direction, a masked model is bidirectional: it looks at the words on both the left and the right of the mask to figure out what fits.

In a typical setup, about 15% of the tokens in a sentence are selected for prediction. But it's not as simple as swapping each one for a [MASK] placeholder. To stop the model from getting lazy, researchers use a mix: 80% of the time the selected token is replaced with [MASK], 10% of the time it's replaced with a random token, and 10% of the time it's left unchanged. Because [MASK] never shows up in real text after pretraining, this mix forces the model to actually understand the language rather than just spotting the [MASK] token.
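
The 80/10/10 mix is easier to see in code. The following is a minimal sketch, not BERT's actual implementation: the function name `mask_tokens`, the tiny vocabulary, and the choice to flip an independent 15% coin per position (rather than selecting exactly 15% of positions) are all assumptions made for illustration.

```python
import random

MASK_TOKEN = "[MASK]"

def mask_tokens(tokens, vocab, mask_prob=0.15, seed=None):
    """Return (corrupted_tokens, labels) using the 80/10/10 rule.

    labels[i] holds the original token at positions the model must predict,
    and None everywhere else.
    """
    rng = random.Random(seed)
    corrupted = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected, so there is no prediction target here
        labels[i] = token
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = MASK_TOKEN         # 80%: replace with the mask token
        elif roll < 0.9:
            corrupted[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: leave the original token in place
    return corrupted, labels

if __name__ == "__main__":
    sentence = "the cat sat on the mat".split()
    toy_vocab = ["dog", "ran", "under", "a", "rug"]  # hypothetical vocabulary
    print(mask_tokens(sentence, toy_vocab, seed=0))
```

The model is trained only on the positions where `labels` is not `None`, which is why the unchanged 10% still matters: the model can't tell in advance which visible tokens it will be graded on.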

Because it sees the whole picture, masked modeling is a beast at understanding tasks. For instance, BERT-base reaches an F1 score of 88.5 on the SQuAD 1.1 question-answering benchmark. However, there's a catch: it's terrible at generating long-form text. If you ask a BERT-style model to write an essay, it will likely start hallucinating or produce incoherent gibberish after a few dozen tokens. It's a reader, not a writer.

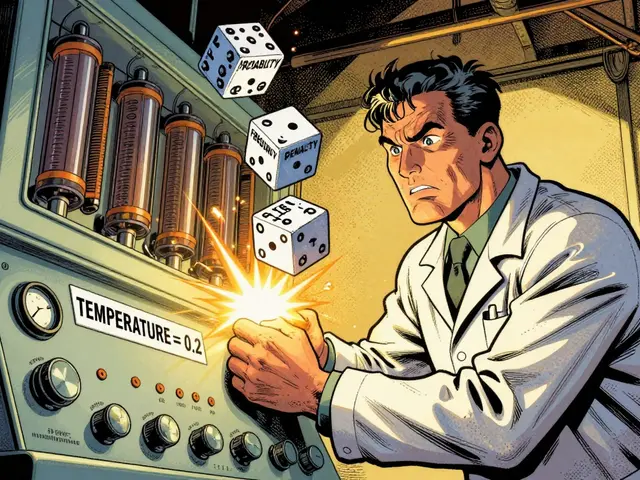

Next-Token Prediction: The Art of the Guess

Next-Token Prediction is an autoregressive approach where a model predicts the very next piece of data based solely on everything that came before it. This is the engine inside the GPT series by OpenAI. While masked modeling looks both ways, this approach is strictly causal: it only looks backward.
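
One way to picture "looks both ways" versus "only looks backward" is as a grid of which positions may attend to which. This is a small illustrative sketch; the helper names `attention_mask` and `show` are made up for this example and are not taken from any library.

```python
def attention_mask(seq_len, causal):
    """mask[i][j] is True when position i is allowed to look at position j."""
    return [[(j <= i) if causal else True for j in range(seq_len)]
            for i in range(seq_len)]

def show(mask):
    """Render the grid with 'x' for visible positions and '.' for hidden ones."""
    return "\n".join(" ".join("x" if allowed else "." for allowed in row)
                     for row in mask)

if __name__ == "__main__":
    print(show(attention_mask(4, causal=True)))
    # x . . .
    # x x . .
    # x x x .
    # x x x x
```

With `causal=False` every cell is an `x`, which is the bidirectional view a masked model gets.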

This limitation is actually its greatest strength. Because the model is trained to always predict the next token, it becomes a natural generator. When you use a chatbot, you're seeing next-token prediction in real time. The model predicts one token, adds it to the sequence, and then uses that new sequence to predict the next one. This simplicity is what allowed GPT-3 to scale to 175 billion parameters and reach a few-shot score of 71.8 on the SuperGLUE benchmark.
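
The predict, append, repeat loop can be sketched with a toy stand-in for the model. Everything here is an assumption for illustration: a real language model conditions on the whole preceding sequence with a neural network, while this `train_bigram` helper only counts which token follows which and looks at the last token alone.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count which token follows which; a toy stand-in for a trained model."""
    following = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        following[prev][nxt] += 1
    return following

def generate(model, prompt, max_new_tokens):
    """Greedy autoregressive decoding: predict one token, append it, repeat."""
    sequence = list(prompt)
    for _ in range(max_new_tokens):
        candidates = model.get(sequence[-1])
        if not candidates:
            break  # the toy model has never seen anything follow this token
        next_token = candidates.most_common(1)[0][0]
        sequence.append(next_token)  # the prediction becomes part of the context
    return sequence

if __name__ == "__main__":
    model = train_bigram("the cat sat on the cat".split())
    print(generate(model, ["the"], 4))
    # ['the', 'cat', 'sat', 'on', 'the']
```

The line that appends `next_token` is the whole trick: each output is fed back in as input for the next step.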

The downside? It can suffer from "error accumulation." If the model makes a slight mistake at token 50, that mistake becomes part of the context for token 51. By the time it hits 500 tokens, the accuracy can drop by as much as 37%, leading to those strange "drift" moments where the AI loses the plot of the conversation.
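
A back-of-the-envelope calculation shows why small per-token errors matter over long outputs. This sketch assumes each token is independently correct with the same probability, which real models do not satisfy, so it illustrates the compounding effect only and does not reproduce the figures quoted above. The function name is hypothetical.

```python
def error_free_probability(per_token_accuracy, num_tokens):
    """Chance that every one of num_tokens predictions is correct,
    assuming independent errors (a simplifying assumption)."""
    return per_token_accuracy ** num_tokens

if __name__ == "__main__":
    for length in (50, 500):
        chance = error_free_probability(0.999, length)
        print(f"{length} tokens: {chance:.3f}")
```

Even at 99.9% per-token accuracy, the chance of a flawless 500-token run under this assumption is only about 0.61.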

Denoising: Finding Order in Chaos

Denoising is a process where a model learns to remove Gaussian noise from data to recover a clean original signal. While the first two are mostly for text, denoising is the gold standard for images, powering tools like Stable Diffusion and DALL-E 2.

The process is essentially a countdown. A clean image is progressively corrupted with noise over 1,000 timesteps until it's just a blur of static. The model's job is to learn how to reverse that process, step by step, to pull a clear image out of the noise. This usually happens within a U-Net architecture, which is designed to handle spatial data efficiently.
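
The corruption half of that process is simple enough to sketch directly. The code below assumes a DDPM-style linear noise schedule (per-step variances running from 1e-4 to 0.02); those values, the function names, and the use of a flat list of floats in place of an image are assumptions for illustration. The learned reverse step, which is the hard part, is not shown.

```python
import math
import random

def linear_beta_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, increasing linearly (assumed values)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_signal(betas):
    """alpha_bar[t]: product of (1 - beta) up to step t, i.e. how much signal is left."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(clean, t, alpha_bars, rng):
    """Jump to timestep t: x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * noise."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in clean]
    noisy = [signal_scale * x + noise_scale * e for x, e in zip(clean, noise)]
    return noisy, noise  # in noise-prediction setups, `noise` is the training target

if __name__ == "__main__":
    alpha_bars = cumulative_signal(linear_beta_schedule())
    pixels = [0.2, 0.8, -0.5, 1.0]  # stand-in for image data
    for t in (0, 499, 999):
        noisy, _ = add_noise(pixels, t, alpha_bars, random.Random(0))
        print(t, round(alpha_bars[t], 5), [round(v, 2) for v in noisy])
```

By the last timestep almost none of the original signal remains, which is the "blur of static" the model learns to walk back from.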

Denoising models are vastly preferred over older methods like GANs (Generative Adversarial Networks) because they are more stable to train and produce higher-quality images. Human preference ratings for denoising-based images are around 72.1%, compared to 63.4% for GANs. The tradeoff is the compute cost. A GAN produces an image in a single forward pass, while a denoising model has to run its network once per step, often taking 10 to 100 times more steps to generate a single image.

| Feature | Masked Modeling | Next-Token Prediction | Denoising |
|---|---|---|---|
| Primary Use | Understanding/Analysis | Content Generation | Image/Audio Synthesis |
| Context | Bidirectional | Causal (Left-to-Right) | Iterative Refinement |
| Architecture | Transformer Encoder | Transformer Decoder | U-Net / Diffusion |
| Key Metric | GLUE Accuracy (82.2%) | Perplexity / SuperGLUE | FID Score (1.79 on CIFAR-10) |
| Main Weakness | Poor generation | Error accumulation | High compute cost |

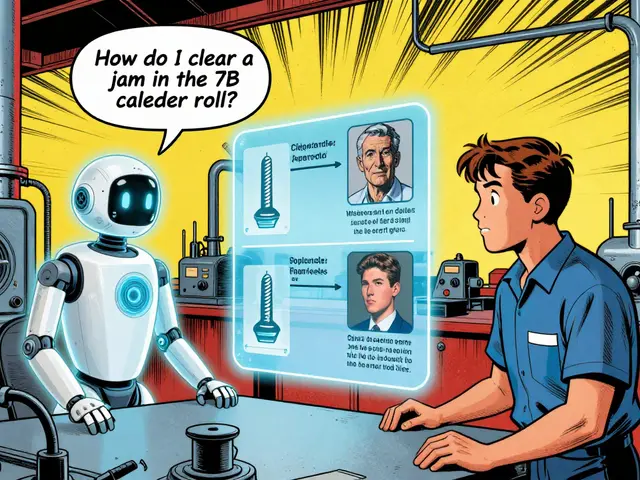

Choosing the Right Objective for Your Project

If you're building a tool for a specific purpose, you can't just pick the "best" objective; you have to pick the one that fits the job. If your goal is named entity recognition or sentiment analysis, masked modeling is your best bet. It provides the bidirectional awareness needed to understand that "Apple" in a sentence refers to the company and not the fruit. In fact, BERT-large reports a 92.8 F1 score on the CoNLL-2003 named entity recognition benchmark.

For customer service bots or creative writing tools, next-token prediction is the only real choice. Enterprise users have found that these models actually require 40% less fine-tuning data (averaging 5,000 examples compared to the 8,300 needed for masked models) to get a professional tone. It's just more natural for conversational flow.

Then there's the creative side. If you need high-fidelity visuals, denoising is the way to go. While it's computationally heavy (requiring 24GB of VRAM for a 1024x1024 image), the result is a level of detail that other methods can't touch. Just be aware that if you're trying to generate text inside an image, denoising models still struggle quite a bit.

The Future: Hybrid Approaches and Convergence

We are moving away from the era of "one objective fits all." The newest models are starting to blend these techniques. For example, Google's Gemini 2.0 uses a hybrid of masked and next-token objectives, which pushed its MMLU benchmark score to 90.1%, nearly 6 points higher than models using only next-token prediction. By combining the "understanding" of masked modeling with the "generative power" of next-token prediction, the AI gets the best of both worlds.

We're also seeing efficiency gains. Meta's Llama 3 recently introduced dynamic masking rates that change as the model trains, boosting efficiency by 22%. Meanwhile, Stability AI is working on "flow matching" to reduce the 1,000-step denoising process down to just 4 steps without losing image quality. The goal is clear: make the models smarter, faster, and cheaper to run.

Why can't I use BERT for writing a blog post?

BERT uses masked modeling, which is designed to understand the relationship between words in a sentence by looking at both sides of a mask. It doesn't learn how to predict the next word in a sequence. Because it lacks this causal training, it can't generate coherent, long-form text and will either loop or produce nonsense if forced to write.

Is denoising only for images?

While most famous for images (like Stable Diffusion), denoising is a general principle. It can be used for audio synthesis, removing static from recordings, or even generating new protein structures in biology. Any data that can be represented as a signal that can be corrupted with noise can technically be trained with a denoising objective.

Which objective is the most computationally expensive?

Denoising is generally the most expensive during the inference (generation) phase because it requires multiple iterative steps to refine the image. However, next-token prediction can be incredibly expensive during pretraining due to the sheer size of the models (like GPT-3), which required millions of V100 GPU hours.

What is a "token" in these contexts?

A token is the basic unit of text a model processes. It's not always a whole word; it could be a character, a sub-word (like "ing" at the end of a word), or a punctuation mark. This allows models to handle rare words or different languages more flexibly.
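
To make the sub-word idea concrete, here is a toy tokenizer that greedily takes the longest known piece and falls back to single characters. It is only a sketch: the function name and the two-entry vocabulary are hypothetical, and production tokenizers learn their vocabularies from data with more involved algorithms.

```python
def tokenize(word, vocab):
    """Greedy longest-match split of one word into known pieces,
    falling back to single characters when nothing longer matches."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate piece until it is in the vocabulary
        # or only one character is left.
        while end > start + 1 and word[start:end] not in vocab:
            end -= 1
        pieces.append(word[start:end])
        start = end
    return pieces

if __name__ == "__main__":
    toy_vocab = {"walk", "ing"}  # hypothetical vocabulary
    print(tokenize("walking", toy_vocab))  # ['walk', 'ing']
    print(tokenize("zzz", toy_vocab))      # ['z', 'z', 'z']
```

The character fallback is what lets a model cope with words it has never seen: nothing is ever "out of vocabulary", it just costs more tokens.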

Does the EU AI Act affect these pretraining methods?

Yes. The EU AI Act, published in the EU's Official Journal in July 2024, requires providers of general-purpose AI models to publish a summary of the content used for training. Since these objectives rely on massive, unlabeled datasets, this creates a significant compliance hurdle for developers, especially those using next-token prediction for large LLMs.

Next Steps for Implementation

If you're an engineer looking to implement these, start with the Hugging Face Transformers library for masked and next-token models, and its sibling Diffusers library for denoising models. Together they are the industry standard, and the Hugging Face Hub hosts well over 10,000 pre-trained models. If you're struggling with fine-tuning instability in a masked model, try lowering your learning rate or using a smaller masking rate.

For those venturing into denoising, be prepared for a steeper learning curve. You'll need a strong grasp of probability and a significant amount of VRAM. If your inference is too slow, look into flow-matching techniques to reduce the number of steps needed to generate a high-quality image.