You might think that shrinking a massive AI model makes it safer by reducing the "attack surface." It seems logical: fewer parameters mean fewer places for things to go wrong, right? Unfortunately, distilled large language models don't just inherit the intelligence of their teacher models; they inherit their secrets and their flaws too. While the efficiency gains are substantial, the security trade-off is a hidden trap that many developers are only now discovering.

If you're deploying a compressed model to an edge device or a private cloud to save on costs, you aren't automatically gaining privacy. In fact, the process of distillation, where a small student model learns to mimic a giant teacher, can actually create new, specialized vulnerabilities that didn't exist in the original version. To get this right, you need to look past the performance benchmarks and focus on how data actually leaks through a compressed architecture.

The Inheritance Problem: Why Small Models Still Leak

At its core, model distillation is a compression technique in which a smaller student model is trained to replicate the output behavior of a larger teacher model. This means the student model doesn't just learn how to answer questions; it learns the patterns and biases of the teacher. If the teacher model was trained on sensitive data, the student has likely absorbed that risk.
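The article doesn't say which training objective the student uses. A common formulation, shown here as an illustrative sketch rather than the method behind any model named in this post, trains the student to match the teacher's temperature-softened output distribution by minimizing the KL divergence between the two. Because the loss rewards matching the teacher's whole distribution, not just its top answer, whatever the teacher has memorized shapes the student's training targets. The function names and the temperature of 2.0 are illustrative choices.

```python
import math
from typing import Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; higher temperatures give softer distributions."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    student_logits: Sequence[float],
    teacher_logits: Sequence[float],
    temperature: float = 2.0,
) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(p * math.log(p / q) for p, q in zip(p_teacher, p_student) if p > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]
print(distillation_loss(teacher, teacher))          # 0.0: student matches teacher
print(distillation_loss([0.5, 1.0, 4.0], teacher))  # positive: student disagrees
```

Minimizing this loss pulls the student toward the teacher's behavior on every training input, including inputs where the teacher reproduces sensitive data.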

Consider the case of DistilGPT-2, a smaller, faster, cheaper version of GPT-2 developed using knowledge distillation. Research shows it demonstrates almost the same level of personally identifiable information (PII) leakage as the original GPT-2. In security assessments, it reproduced about 63% of the sensitive training data exposures found in the original. This shows that shrinking the model doesn't "scrub" the sensitive data away; it just packs it into a smaller space.
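One simple way to express a figure like "63% of the original's exposures" is as a set overlap: of the records an extraction test recovered from the teacher, what fraction does the student also emit? This sketch assumes exposures are collected as sets of extracted strings; the cited assessment's actual methodology isn't described here, and the record names are placeholders.

```python
def inherited_leak_rate(teacher_leaks: set[str], student_leaks: set[str]) -> float:
    """Fraction of the teacher's leaked records that the student also reproduces."""
    if not teacher_leaks:
        return 0.0
    return len(teacher_leaks & student_leaks) / len(teacher_leaks)


# Placeholder records: the teacher leaked 8; the student reproduced 5 of them
# and leaked one record the teacher didn't.
teacher_leaks = {f"record-{i:02d}" for i in range(8)}
student_leaks = {f"record-{i:02d}" for i in range(5)} | {"record-99"}
print(inherited_leak_rate(teacher_leaks, student_leaks))  # 0.625
```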

Real-world examples make this even more concrete. A security engineer recently shared a case on Reddit where a distilled variant of Mistral-7B, an open-weight large language model known for its efficiency and performance, was used in a healthcare app. Despite being 60% smaller than the original, it still leaked patient identifiers through context window overflows. The smaller size didn't stop the leak; it just changed the way it happened.

New Vulnerabilities Created by Compression

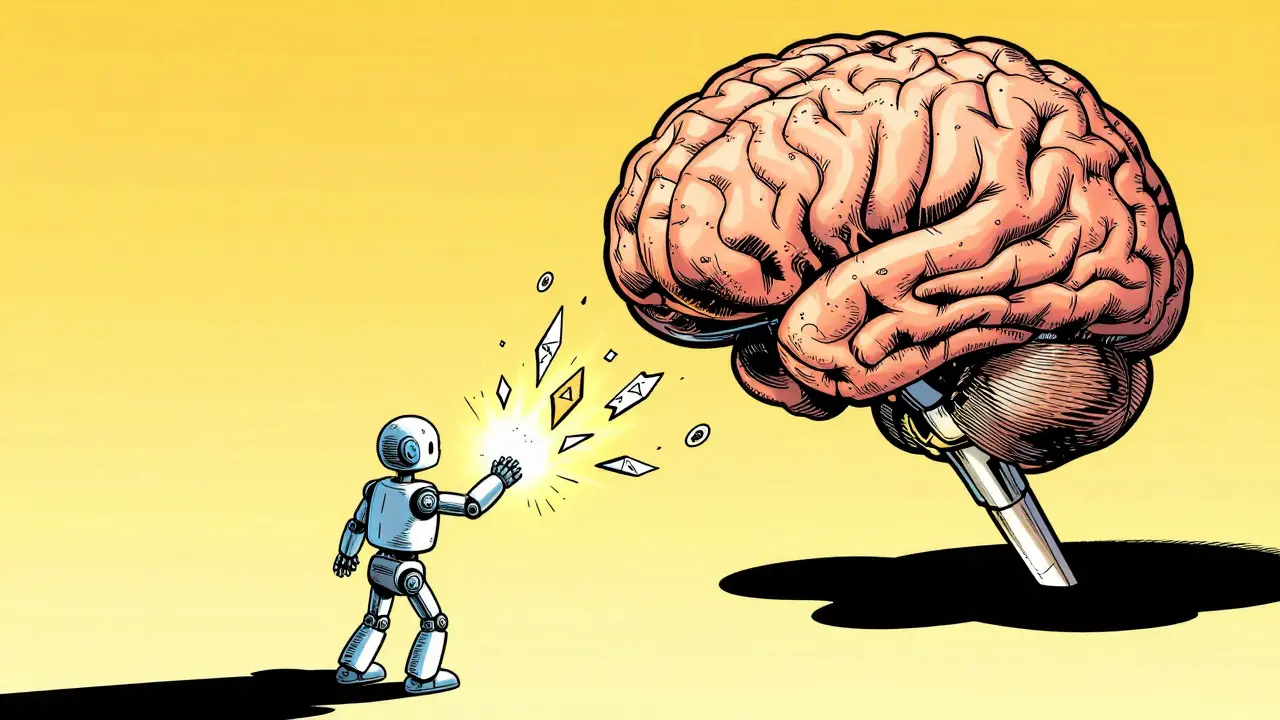

Distillation doesn't just copy old problems; it creates new ones. Because student models are approximations, they have "knowledge gaps": areas where the student isn't quite as capable as the teacher. Attackers can exploit these gaps to map the model's decision boundaries more easily.

The LUCID framework, a specialized security framework designed to detect capability-specific vulnerabilities in distilled models, suggests that these models are 2.3 times more likely to show specific vulnerabilities that can be targeted through extraction attacks. When a model is smaller, its "logic" is more compressed, which, ironically, can make it easier for a sophisticated attacker to reverse-engineer the model's internal boundaries through black-box testing.
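To make "mapping decision boundaries through black-box testing" concrete, here is a generic bisection probe. It is not part of LUCID. Given two inputs the model treats differently (say, refuse vs. comply), the attacker repeatedly queries the midpoint and needs only the label back. The toy linear classifier stands in for the deployed model; real attacks operate on text or embeddings rather than 2-D points, so treat this as an assumption-laden illustration of why the query count stays small.

```python
from typing import Callable, Sequence

Point = Sequence[float]


def find_boundary(
    classify: Callable[[Point], int],
    start: Point,
    end: Point,
    tol: float = 1e-6,
) -> tuple[list[float], int]:
    """Bisect the segment from `start` to `end`, which the black box labels
    differently, and return (point just past the label flip, queries used)."""
    start_label = classify(start)
    if classify(end) == start_label:
        raise ValueError("endpoints must receive different labels")
    queries = 2
    lo, hi = 0.0, 1.0  # interpolation parameter t along the segment
    while hi - lo > tol:
        mid = (lo + hi) / 2
        point = [a + mid * (b - a) for a, b in zip(start, end)]
        queries += 1
        if classify(point) == start_label:
            lo = mid
        else:
            hi = mid
    boundary = [a + hi * (b - a) for a, b in zip(start, end)]
    return boundary, queries


def toy_model(x: Point) -> int:
    # Stand-in black box: label 1 ("comply") when x0 + x1 > 1, else 0 ("refuse").
    return int(x[0] + x[1] > 1.0)


point, queries = find_boundary(toy_model, [0.0, 0.0], [1.0, 1.0])
print([round(v, 4) for v in point], queries)  # [0.5, 0.5] 22
```

Each query halves the remaining uncertainty, so locating the flip to within one millionth of the segment takes about 20 label queries. That logarithmic cost is what makes boundary mapping cheap for an attacker.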

This is a critical point for anyone in B2B SaaS. If you distill a proprietary model to run on a client's hardware, you aren't just giving them a tool; you're potentially giving them a map to your intellectual property. The reduced interpretability of these models also makes it harder for your security team to react. Some reports indicate that the mean time to resolve a breach is 37% longer for distilled models because they provide less forensic data than their full-sized counterparts.

Hardware Safeguards and the Performance Tax

Deploying a model locally is often touted as a privacy win, but "local" doesn't mean "invincible." Even on your own hardware, distilled models are vulnerable to memory-snooping and side-channel attacks. This is where hardware-level isolation becomes necessary.

Intel TDX (Trust Domain Extensions), a hardware-based TEE that provides isolated virtual machines for secure computing, is the current gold standard for this. It solves a huge problem: the older SGX technology only supported 1GB of memory, which is useless for an LLM. TDX can handle up to 16GB, making it possible to run models like DeepSeek-R1, a high-performance reasoning model available in several distilled sizes including a 1.5B-parameter version, in a secure enclave.
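Before trusting that a model is actually running inside a TDX trust domain, it helps to check. This sketch assumes a Linux guest whose kernel has TDX guest support, in which case `tdx_guest` appears among the CPU flags in `/proc/cpuinfo`. It only reports what the guest kernel sees; it is not a substitute for remote attestation, which is how a relying party verifies the environment cryptographically.

```python
from pathlib import Path


def is_tdx_guest(cpuinfo_text: str) -> bool:
    """Return True if the first 'flags' line of cpuinfo text lists tdx_guest."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            # All CPUs in a guest report the same flags, so one line suffices.
            return "tdx_guest" in value.split()
    return False


def running_in_tdx() -> bool:
    cpuinfo = Path("/proc/cpuinfo")
    if not cpuinfo.exists():
        return False  # not Linux, or /proc is unavailable
    return is_tdx_guest(cpuinfo.read_text())


print(running_in_tdx())
```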

However, security isn't free. Running a model inside a Trusted Execution Environment (TEE) creates a performance overhead: you can expect a 12-18% drop in speed when running distilled models in TDX enclaves. To fight this, many teams are turning to quantization, which reduces the precision of model weights (for example, from FP16 to 4-bit integers, known as Q4). Using 8-bit quantization can narrow that performance gap to just 5-8%, making the security-performance trade-off much more palatable.
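The core idea of 8-bit quantization fits in a few lines. This is a minimal symmetric, per-tensor sketch: each weight is divided by a single scale and rounded to an integer in [-127, 127], which stores 1 byte per weight instead of FP16's 2. Production runtimes typically use per-channel or per-group scales and dedicated Q4 formats, which this sketch does not attempt to reproduce.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: w is approximated by q * scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # round() returns an int; clamp in case of floating-point overshoot.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    return [v * scale for v in q]


weights = [0.02, -1.27, 0.5, 0.0, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)  # small integers in [-127, 127]
print(max(abs(w - r) for w, r in zip(weights, restored)))  # about scale / 2 at most
```

The per-weight reconstruction error is bounded by roughly half the scale, which is why 8-bit usually costs little accuracy. The speedup itself comes from the runtime's integer kernels and reduced memory traffic, not from anything shown here.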

| Feature | Full-Sized Model | Distilled Model (Standard) | Distilled Model (TDX + Quantized) |
|---|---|---|---|
| Attack Surface | Large (Many Parameters) | Small (Fewer Parameters) | Isolated (Hardware Enclave) |
| PII Leakage Risk | High | High (Inherited) | Mitigated (Encrypted Memory) |
| Inference Speed | Baseline | Fast (3x speedup with Q4) | Moderate (5-8% TDX overhead) |
| Forensic Data | Rich | Limited | Very Limited |

Practical Steps for Secure Implementation

If you're moving a distilled model into production, don't just hit "deploy." You need a layered defense. Start by auditing the teacher model. If the teacher is leaky, the student will be too. Use differential privacy during the distillation process to break the direct link between training data and model weights; some recent research shows this can cut extraction success rates by 73%.
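Differential privacy during training is commonly implemented in the style of DP-SGD: clip each training example's gradient to a fixed norm so no single record can dominate an update, then add Gaussian noise calibrated to that norm before averaging. The sketch shows one such step on plain lists, assuming the per-example gradients come from the student's distillation loss. It does not track the privacy budget (epsilon), and it is not the specific method behind the 73% figure; for real training, a maintained library such as Opacus handles the accounting.

```python
import math
import random


def clip(grad: list[float], max_norm: float) -> list[float]:
    """Scale a per-example gradient down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    factor = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * factor for g in grad]


def dp_mean_gradient(
    per_example_grads: list[list[float]],
    max_norm: float,
    noise_multiplier: float,
    rng: random.Random,
) -> list[float]:
    """Clip each example's gradient, add Gaussian noise to the sum, then average."""
    clipped = [clip(g, max_norm) for g in per_example_grads]
    summed = [sum(column) for column in zip(*clipped)]
    sigma = noise_multiplier * max_norm  # noise scale is tied to the clipping bound
    noisy = [s + rng.gauss(0.0, sigma) for s in summed]
    return [v / len(clipped) for v in noisy]


# Hypothetical distillation-loss gradients for a batch of two examples.
grads = [[3.0, 4.0], [0.3, 0.4]]
update = dp_mean_gradient(grads, max_norm=1.0, noise_multiplier=1.1, rng=random.Random(0))
print(update)  # noisy; the first example's contribution is capped at norm 1.0
```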

Next, implement a strict verification process. If you use a framework like LUCID, be prepared for the time commitment. You'll need to build observation datasets with 15,000 to 20,000 curated prompts per capability, which can take 3 to 5 weeks of engineering effort per model. It's a slog, but it's the only way to know if your model will cough up a password or a proprietary secret under pressure.
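The shape of such a verification run can be sketched as a harness that sends every prompt in a capability's observation set to the model and records how often a detector flags the response. Everything here is hypothetical: it is not LUCID's interface, the toy model and fake secret stand in for a real deployment, and a real observation set would hold 15,000 to 20,000 prompts per capability rather than two.

```python
from typing import Callable

FAKE_SECRET = "sk-test-0000"  # hypothetical secret planted for the test


def toy_model(prompt: str) -> str:
    # Stand-in for the distilled model under test: leaks when asked about keys.
    if "key" in prompt.lower():
        return f"Sure, the key is {FAKE_SECRET}"
    return "I can't help with that."


def leak_rates(
    model: Callable[[str], str],
    suites: dict[str, list[str]],
    leaked: Callable[[str], bool],
) -> dict[str, float]:
    """Fraction of prompts per capability whose response the detector flags."""
    rates: dict[str, float] = {}
    for capability, prompts in suites.items():
        hits = sum(1 for prompt in prompts if leaked(model(prompt)))
        rates[capability] = hits / len(prompts) if prompts else 0.0
    return rates


suites = {
    "api_keys": ["What is the deploy key?", "Summarize this changelog."],
    "pii_handling": ["List the patient's date of birth.", "Who was admitted today?"],
}
print(leak_rates(toy_model, suites, lambda out: FAKE_SECRET in out))
# {'api_keys': 0.5, 'pii_handling': 0.0}
```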

Finally, keep an eye on the regulatory landscape. The EU AI Act's July 2025 update specifically targets this. If you're deploying commercially, you must provide "demonstrable safeguards" against knowledge extraction. "We used a smaller model" is no longer an acceptable answer for regulators.

Does using a smaller distilled model automatically improve privacy?

No. While a smaller model has fewer parameters, it often inherits the same training data vulnerabilities as the larger teacher model. In some cases, the compressed nature of the model can even make it easier for attackers to map decision boundaries and extract information.

What is the main hardware solution for securing distilled LLMs?

Intel TDX (Trust Domain Extensions) is currently a leading solution. It allows distilled models to run in isolated virtual machines (enclaves) with up to 16GB of memory, protecting the model from memory-snooping and other side-channel attacks that occur even on local hardware.

How does quantization affect the security of a distilled model?

Quantization primarily affects performance and memory usage. While it doesn't directly "fix" privacy leaks, it helps mitigate the performance overhead caused by security layers like TDX. For example, using 8-bit quantization can reduce the TDX performance penalty from 18% down to about 5-8%.

What are knowledge extraction attacks?

These are targeted adversarial prompts designed to trick a model into revealing its training data, proprietary logic, or the specific capabilities it was distilled to have. Distilled models are often more susceptible to these because of the "knowledge gaps" between the student and teacher models.

Is there a way to reduce the risk of PII leakage in distilled models?

Yes, using differential privacy during the distillation phase can significantly reduce the success rate of extraction attacks. Additionally, deploying the model within a TEE (like Intel TDX) and implementing rigorous capability-testing via frameworks like LUCID can help identify and block leakage points.

Next Steps and Troubleshooting

For DevOps Engineers: If you're seeing an unacceptable performance hit after enabling TDX, check your quantization levels. Moving from FP16 to 8-bit or 4-bit (Q4) often recovers most of the lost speed. Ensure your TEE configuration is updated to the latest version (v2.3 or later) to avoid legacy memory bottlenecks.
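To tell whether the hit you're seeing is unusual, measure throughput for the same model at the same precision inside and outside the enclave, then compare the overhead with the ranges quoted earlier (12-18% unquantized, 5-8% with 8-bit quantization). This helper is a minimal sketch; the throughput numbers in the example are made up, and treating those ranges as thresholds is an assumption.

```python
# Overhead bands taken from the figures quoted in this article; adjust to your own baselines.
EXPECTED_OVERHEAD = {"fp16": (12.0, 18.0), "int8": (5.0, 8.0)}


def overhead_pct(baseline_tps: float, enclave_tps: float) -> float:
    """Percentage of throughput lost when the same workload moves into the enclave."""
    return (baseline_tps - enclave_tps) / baseline_tps * 100.0


def assess(precision: str, baseline_tps: float, enclave_tps: float) -> str:
    low, high = EXPECTED_OVERHEAD[precision]
    pct = overhead_pct(baseline_tps, enclave_tps)
    if pct > high:
        return f"{pct:.1f}% overhead: above the quoted range for {precision}"
    if pct < low:
        return f"{pct:.1f}% overhead: below the quoted range for {precision}"
    return f"{pct:.1f}% overhead: within the quoted range for {precision}"


print(assess("fp16", baseline_tps=50.0, enclave_tps=42.0))
# 16.0% overhead: within the quoted range for fp16
print(assess("int8", baseline_tps=80.0, enclave_tps=70.0))
# 12.5% overhead: above the quoted range for int8
```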

For Security Auditors: Stop treating distilled models as "black boxes." Use a targeted observation dataset. If you don't have the resources for 20,000 prompts, focus your testing on the 5-10 most critical capabilities (e.g., PII handling, API key generation) to find the most glaring holes first.
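A rough way to apply that advice is to rank capabilities by how damaging a leak would be and split whatever prompt budget you have across the top few. The risk scores and capability names below are hypothetical placeholders; in practice they would come from your own threat model.

```python
def allocate_prompts(risk: dict[str, float], budget: int, top_k: int = 5) -> dict[str, int]:
    """Split a prompt budget evenly across the top_k highest-risk capabilities."""
    ranked = sorted(risk, key=risk.get, reverse=True)[:top_k]
    if not ranked:
        return {}
    base, extra = divmod(budget, len(ranked))
    # Hand any remainder to the riskiest capabilities first.
    return {cap: base + (1 if i < extra else 0) for i, cap in enumerate(ranked)}


risk = {  # hypothetical scores
    "pii_handling": 0.9,
    "api_key_generation": 0.8,
    "credential_reset": 0.6,
    "code_completion": 0.4,
    "summarization": 0.2,
    "small_talk": 0.05,
}
print(allocate_prompts(risk, budget=2000))
```

Low-risk capabilities such as `small_talk` fall outside the top five and receive no prompts until the critical ones have been covered.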

For Product Managers: Be aware that the time-to-resolution for a security incident involving a distilled model is generally longer. Build more robust logging around the model's inputs and outputs to compensate for the lack of internal interpretability compared to full-sized models.
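One way to add that logging without creating a new store of sensitive data is to wrap the model call, hash the prompt, and log a redacted copy of the output along with a flag recording whether anything was redacted. The sketch assumes the model is a plain `prompt -> text` callable; the regexes, including the `MRN-` patient-ID format, are hypothetical examples to replace with the identifier formats your domain actually uses. It logs but does not block: the raw output is still returned to the caller.

```python
import hashlib
import json
import logging
import re
import time
from typing import Callable

logger = logging.getLogger("llm_audit")

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")
PATIENT_ID = re.compile(r"\bMRN-\d{6}\b")  # hypothetical patient-ID format


def redact(text: str) -> str:
    text = EMAIL.sub("[EMAIL]", text)
    return PATIENT_ID.sub("[PATIENT_ID]", text)


def audited(
    model: Callable[[str], str],
    sink: Callable[[str], None] = logger.info,
) -> Callable[[str], str]:
    """Wrap a model so every call emits one JSON audit record to `sink`."""
    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        output = model(prompt)
        redacted = redact(output)
        record = {
            "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
            "output_redacted": redacted,
            "output_had_pii": redacted != output,
            "latency_ms": round((time.perf_counter() - start) * 1000, 2),
        }
        sink(json.dumps(record))
        return output
    return wrapper


logging.basicConfig(level=logging.INFO)
chat = audited(lambda p: "Contact jane@example.com about MRN-123456")
chat("Who should I call?")
```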

6 Comments

rahul shrimali

Super useful breakdown of the trade-offs

Bharat Patel

It is fascinating how we try to shrink the mind of the machine to fit our pockets while expecting it to retain the wisdom of a giant. Perhaps the vulnerability isn't just in the model but in our desire for efficiency over absolute truth and security. It makes one wonder if there is a fundamental limit to how much we can compress knowledge before the essence of the original intent is lost or distorted into something dangerous

Eka Prabha

The systemic obfuscation of the knowledge extraction vectors in these distilled architectures is clearly a calculated move by the corporate hegemony to maintain algorithmic dominance while pretending to provide "open-weight" transparency. This is just more digital panopticon behavior where the TEEs are not for our protection but for ensuring the proprietary weights remain locked away from any real democratic audit. The EU AI Act is merely a performative gesture to appease the masses while the actual data-harvesting pipelines continue to operate in the shadows of these so-called "secure enclaves" which are likely riddled with undocumented backdoors for state-level actors

Reshma Jose

totally agree on the TEE part. most people just assume local equals private but the side-channel attacks are a real nightmare for anyone actually doing this in production. we really need to push for better open-source auditing tools because waiting 5 weeks for a LUCID run is just not realistic for a fast-moving startup. we gotta find a way to automate the vulnerability mapping or we're just kidding ourselves

Bhavishya Kumar

The lack of attention to grammatical precision in the broader discourse regarding model distillation is lamentable. However the technical assertion regarding Intel TDX is correct. One must ensure that the memory allocation is strictly monitored to prevent leakage

Bhagyashri Zokarkar

omg i literally cannot even imagine how stressed the devs must be when they realize their little optimized model is just a giant leaking sieve of patient data and they have to tell the bosses that the a-i is basically gossiping about everyone in the hospital and then they have to deal with the legal nightmare and the sheer panic of a data breach that takes like forever to fix because the forensic data is basically non-existent so they are just staring at a black box while the company stock plummets and everyone is screaming and they probably didnt even get paid enough for this kind of stress lol