When a chemist spends 40 hours designing a single experiment, only to find out later that a solvent choice is fundamentally flawed, it’s not just wasted time; it’s lost momentum. That’s the reality many researchers face. But what if an AI could scan thousands of papers in minutes, spot patterns no human would notice, and suggest a better path, then double-check itself before you even touch the lab bench? That’s not science fiction. It’s happening now, with Scientific Large Language Models (Sci-LLMs) reshaping how science gets done.

What Exactly Are Sci-LLMs?

Sci-LLMs aren’t just GPT-4 with a lab coat. They’re specialized AI systems trained on scientific data: journal articles, chemical structures, genomic sequences, lab protocols, and even images of microscopy slides. Unlike general-purpose models, they understand SMILES notation for molecules, DNA base pairs, and the structure of scientific tables. Models like KG-CoI and Google’s CURIE framework use graph neural networks to connect molecular structures to biological effects, and vision encoders to interpret figures from papers. They’re built to reason across disciplines, linking a drug’s chemical structure to clinical trial outcomes, for example, something most researchers would never connect manually.

How Sci-LLMs Actually Speed Up Research

Most scientists spend 20-30% of their time just reading papers. Sci-LLMs cut that down. A 2023 study found they reduce literature review time by 63%. One researcher at Stanford used a Sci-LLM to summarize 1,200 papers on neurodegenerative diseases in under an hour. The model didn’t just list papers. It grouped them by mechanism, flagged contradictory findings, and highlighted understudied pathways. That’s what saved her three weeks.

But it’s not just about reading. Experimental design is where the real time savings kick in. Traditionally, designing a single experiment could take 40-60 hours. With Sci-LLMs, that drops to 4-8 hours. The system breaks down a request like “run a Suzuki coupling with these substrates” into steps: reagent selection, temperature control, solvent compatibility, and purification method. It cross-checks each step against known protocols and warns about pitfalls. One team at MIT automated 87% of their routine synthesis workflows this way.
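The decompose-and-cross-check idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular Sci-LLM works internally. The step names follow the breakdown above. `KNOWN_SOLVENTS`, `PITFALLS`, and `cross_check` are hypothetical, and their entries are illustrative placeholders rather than validated chemistry. A real system would draw this data from curated protocol databases.

```python
# Planning steps from the article's breakdown of a request such as
# "run a Suzuki coupling with these substrates".
STEPS = ("reagents", "temperature", "solvent", "purification")

# Hypothetical stand-in for a curated protocol database.
# Entries are illustrative placeholders, not validated conditions.
KNOWN_SOLVENTS = {
    "suzuki coupling": {"1,4-dioxane/water", "toluene/ethanol/water", "THF/water"},
}

# Hypothetical pitfall rules: (reaction, solvent) -> why the pairing fails.
PITFALLS = {
    ("grignard reaction", "acetone"): "ketones react with and destroy Grignard reagents",
    ("grignard reaction", "water"): "protic solvents quench Grignard reagents",
}


def cross_check(reaction: str, plan: dict[str, str]) -> list[str]:
    """Return warnings for missing steps, known pitfalls, and unverified solvents."""
    warnings = [f"missing step: {step}" for step in STEPS if step not in plan]
    solvent = plan.get("solvent")
    if solvent is not None:
        reason = PITFALLS.get((reaction, solvent))
        if reason is not None:
            warnings.append(f"pitfall: {solvent} in {reaction}: {reason}")
        known = KNOWN_SOLVENTS.get(reaction)
        # Only flag a solvent as unverified when reference data exists for the reaction.
        if known is not None and solvent not in known:
            warnings.append(f"unverified: {solvent} not found in reference protocols")
    return warnings


suzuki_plan = {
    "reagents": "aryl halide, boronic acid, Pd catalyst, base",
    "temperature": "per reference protocol",
    "solvent": "acetone",
    "purification": "column chromatography",
}
print(cross_check("suzuki coupling", suzuki_plan))
print(cross_check("grignard reaction", {"reagents": "RMgBr, substrate", "solvent": "acetone"}))
```

The Suzuki plan is flagged because acetone isn't in the reference set. The same solvent in a Grignard reaction, the failure described in the next section, trips a pitfall rule. A lookup table only catches mistakes someone has already encoded, which is one reason novel experiments still need a human check.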

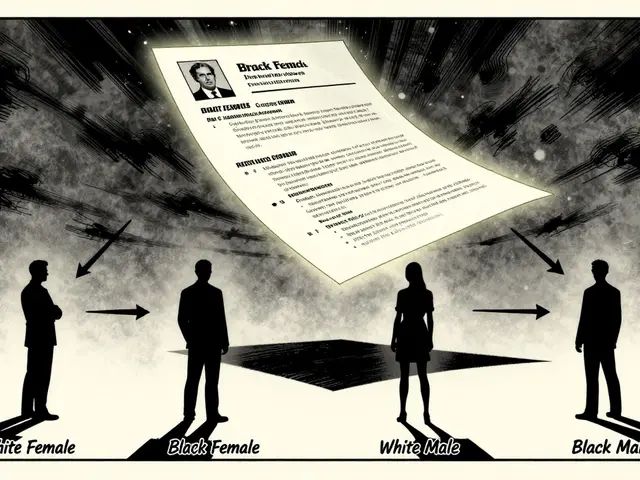

The Hidden Costs: Errors and Hallucinations

Here’s the catch: Sci-LLMs aren’t perfect. They make mistakes, sometimes dangerous ones. A 2025 analysis of 200 chemistry workflows found a 23.8% error rate in protocol generation. One user on Reddit shared how a model suggested using acetone in a Grignard reaction. Acetone is a ketone, so it reacts vigorously with Grignard reagents and destroys them. The mistake cost two days of lab time and ruined samples. That’s not an outlier.

Hallucinations, where the model confidently invents facts, are still a problem, especially in novel scenarios. In one case, a Sci-LLM proposed a new drug candidate based on a paper that didn’t exist, citing a fake DOI. The error rate jumps to 37.9% when the experiment is truly new, meaning nothing like it appeared in the training data. That’s why most labs still require human verification. Pfizer’s Groton lab found that while Sci-LLMs improved documentation efficiency by 35%, technicians had to spend 2.3 hours per day checking every generated protocol for accuracy. The models are great at synthesizing known information and terrible at novelty.

Who’s Using Sci-LLMs, and Where?

Adoption is uneven. Pharmaceutical companies are leading the charge. Gartner reports 42.7% of major drug firms now use Sci-LLMs in early-stage discovery, up from 18.2% in 2024. The reason? Time and money. A single failed drug trial can cost over $1 billion. Reducing early-stage errors by even 10% saves millions. Materials science is next. Researchers use Sci-LLMs to predict new battery materials by connecting crystal structure data with performance metrics across hundreds of papers. But clinical trial design? That’s a different story. Only 15% of institutions use them there. The FDA is watching closely. In September 2025, it released draft guidelines requiring human verification for all AI-generated trial protocols. The message is clear: automation is welcome, but accountability isn’t optional.

What You Need to Get Started

If you’re a researcher thinking about using Sci-LLMs, don’t jump in headfirst. Start small. Use them for literature review first. Tools like the CURIE framework or open-source models like SciNLP can scan your topic and pull relevant papers you might have missed. Once you’re comfortable, try experimental design. But always validate: never trust a protocol without checking it against trusted sources. You’ll also need skills: basic Python for API calls, a working understanding of transformer models, and, most importantly, deep domain knowledge. Researchers without scientific expertise make 3.7 times more errors when using these tools. The AI doesn’t replace your judgment; it amplifies it. If you don’t know what’s plausible, you won’t catch the lies.

The Future: Autonomous Labs and Regulatory Walls

The next big leap is autonomous labs. Google’s roadmap targets 60% autonomous operation in controlled environments by 2028. Imagine a robot arm following an AI-generated protocol, running reactions, analyzing results, and adjusting the next experiment, all without human input. It’s being tested now in a few university labs.

But there’s a dark side. If flawed AI-generated papers get published, retractions could spike by 15-20% by 2030. Journals are already struggling to detect AI-written methods sections. And who owns a hypothesis generated by an AI? That’s a legal gray zone no one has resolved yet. Still, the trend is clear. By 2030, an estimated 85% of scientific research will involve LLMs in some way, not because they’re perfect, but because they’re too useful to ignore. The winners won’t be the ones who use AI the most. They’ll be the ones who use it the smartest.

Can Sci-LLMs replace human researchers?

No. Sci-LLMs are tools, not replacements. They excel at processing large volumes of data, spotting patterns, and automating routine tasks-but they can’t replicate human intuition, creativity, or ethical judgment. A model might suggest a promising drug candidate, but only a researcher can decide if it’s worth pursuing based on biological plausibility, funding constraints, or clinical relevance. The best outcomes happen when humans guide the AI, not the other way around.

Are Sci-LLMs only useful in chemistry and biology?

No. While chemistry and biology lead adoption due to structured data availability, Sci-LLMs are being used in physics, materials science, geology, and even astronomy. In physics, they help interpret particle collision data by cross-referencing theoretical models. In astronomy, they analyze telescope images to identify unusual celestial patterns. The key factor isn’t the field; it’s whether the data is digitized and structured enough for the model to learn from.

How accurate are Sci-LLMs in generating scientific citations?

Citation accuracy is a major weakness. Studies show that 37.2% of issues reported in open-source Sci-LLM projects relate to incorrect or fabricated citations. Models often mix real papers with fake ones, or misattribute authors and journals. Even leading frameworks like CURIE occasionally generate plausible-looking but non-existent references. Always verify every citation manually. Use tools like PubMed or CrossRef to check DOIs independently.
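A first-pass citation check can be automated before the manual one. The sketch below assumes Python 3 and uses only the standard library. It screens each string against a regular expression Crossref has suggested for matching most modern DOIs, then asks the public Crossref REST API (`https://api.crossref.org/works/{doi}`) whether the DOI is registered. The function names and the contact address in the `User-Agent` header are placeholders. Two caveats apply. First, a 404 from Crossref only means the DOI isn’t registered with Crossref, since DataCite and other agencies also issue DOIs. Second, a DOI that resolves still doesn’t prove the paper says what the model claims.

```python
import re
import urllib.error
import urllib.parse
import urllib.request

# Pattern Crossref has suggested for matching most modern DOIs; some older
# DOIs use other characters, so a miss means "check by hand", not "fake".
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)


def looks_like_doi(doi: str) -> bool:
    """Cheap offline syntax screen; says nothing about whether the DOI exists."""
    return DOI_PATTERN.match(doi.strip()) is not None


def crossref_url(doi: str) -> str:
    """Build the Crossref REST API lookup URL for a DOI."""
    return "https://api.crossref.org/works/" + urllib.parse.quote(doi.strip(), safe="/")


def registered_with_crossref(doi: str, timeout: float = 10.0) -> bool:
    """True if Crossref knows the DOI, False on a 404; other errors propagate."""
    request = urllib.request.Request(
        crossref_url(doi),
        # Placeholder contact address; Crossref asks API users to identify themselves.
        headers={"User-Agent": "citation-check-sketch (mailto:you@example.org)"},
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise
```

Run the offline `looks_like_doi` screen over every reference first and query only the survivors. If you are checking hundreds of references, pace the network lookups.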

Do I need expensive hardware to use Sci-LLMs?

Not necessarily. Training a full Sci-LLM requires dozens of high-end GPUs, but using one doesn’t. Most researchers access pre-trained models via cloud APIs (like Google’s Vertex AI or Hugging Face) or through institutional subscriptions. A typical query takes 2-8 seconds and costs less than $0.10. You only need a laptop and internet connection to start experimenting.
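On the laptop-and-internet route, a query is just an HTTPS POST with a JSON body. The sketch below uses only Python’s standard library. The endpoint URL, the `prompt`/`max_tokens` payload fields, and the bearer-token header are hypothetical placeholders, because Vertex AI, Hugging Face, and institutional gateways each define their own request schemas and authentication. Check your provider’s documentation before adapting it. `query` will only work once `API_URL` points at a real service.

```python
import json
import urllib.request

# Hypothetical endpoint and payload schema; real providers each define their own.
API_URL = "https://example.org/v1/generate"


def build_request(prompt: str, api_key: str, max_tokens: int = 512) -> urllib.request.Request:
    """Package a prompt as a JSON POST request (not sent yet)."""
    body = json.dumps({"prompt": prompt, "max_tokens": max_tokens}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def query(prompt: str, api_key: str, timeout: float = 30.0) -> dict:
    """Send the request and decode the JSON reply.

    The timeout is generous next to the 2-8 seconds a typical query takes,
    but it keeps a hung connection from blocking a script indefinitely.
    """
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Keeping request construction separate from sending makes it easy to inspect, or log, exactly what was sent to the model. That log is useful when a generated protocol later has to be verified.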

What’s the biggest mistake researchers make when using Sci-LLMs?

The biggest mistake is treating them like a search engine. Sci-LLMs don’t retrieve facts; they generate responses based on patterns. If you ask for a protocol without context, you’ll get a generic one that might be wrong. The best users treat them as collaborators: they provide context, ask follow-ups, and challenge every output. They don’t accept answers; they interrogate them.
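One way to build the collaborator habit into a workflow is to refuse bare requests and generate the follow-up questions automatically. The helpers below are hypothetical and deliberately simple. `build_prompt` bundles explicit context with the task. `follow_ups` turns each step of a returned protocol into a challenge question, echoing the ones a mentor suggests in the comments below (“Why this solvent? Why this temperature? What’s the fallback if it fails?”).

```python
def build_prompt(task: str, context: dict[str, str]) -> str:
    """Combine a task with explicit context; refuse context-free requests."""
    if not context:
        raise ValueError("add context: a bare request invites a generic, possibly wrong answer")
    lines = [f"Task: {task}", "Context:"]
    lines += [f"- {key}: {value}" for key, value in context.items()]
    lines.append("List your assumptions and cite sources I can verify.")
    return "\n".join(lines)


def follow_ups(protocol: dict[str, str]) -> list[str]:
    """Turn each step of a returned protocol into a question to put back to the model."""
    questions = [f"Why this {step} ({choice})?" for step, choice in protocol.items()]
    questions.append("What is the fallback if it fails?")
    return questions


prompt = build_prompt(
    "Propose a purification method for the crude product",
    {"scale": "placeholder", "equipment available": "placeholder"},
)
print(prompt)
for question in follow_ups({"solvent": "THF", "temperature": "0 °C"}):
    print(question)
```

The model’s answers to these questions still need checking against trusted sources. The point is to surface its reasoning so a domain expert has something concrete to challenge.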

Will Sci-LLMs make scientific publishing less reliable?

Left unchecked, they already are. A Nature editorial in December 2025 warned that AI-generated papers with fabricated methods or misleading data could increase retractions by 15-20% in the next five years. Journals are scrambling to implement AI detection tools, but the real solution is human oversight. Peer reviewers now routinely ask: “Was this section assisted by AI?” If the answer is yes, they demand full disclosure and verification logs. Transparency is becoming mandatory.

9 Comments

deepak srinivasa

Been using Sci-LLMs for my organic synthesis work for about a year now. The literature review speed is insane - I used to spend weekends just reading papers. Now I get a curated summary in 10 minutes. But yeah, the hallucinations? Real. Once it told me a reaction worked at -78°C with DMF as solvent. DMF freezes at about -61°C. Almost ruined my whole batch. Always cross-check with Reaxys. Never trust the AI’s citations.

pk Pk

As someone who mentors junior researchers, I can’t stress this enough: AI doesn’t replace your brain, it just gives it a turbo boost. The real skill isn’t using the tool - it’s knowing when to say ‘no’ to the AI. I’ve had students blindly follow its protocols and waste months. Teach them to interrogate every output. Ask: ‘Why this solvent? Why this temperature? What’s the fallback if it fails?’ That’s real science.

NIKHIL TRIPATHI

Love how this post broke down the real numbers - 23.8% error rate, 37.9% in novel scenarios. That’s not negligible. I work in materials science, and we’ve had cases where the model suggested a perovskite composition that had never been synthesized. Turns out, the paper it cited was from a predatory journal. We caught it because we had a grad student who’d read that journal before. Domain knowledge saved us. AI is a co-pilot, not the pilot.

Also, the citation issue is wild. I ran a test: asked for 20 references on lithium-sulfur batteries. 7 were fake. DOIs were valid-looking but led to 404s. We’re going to need blockchain-style citation verification soon. Or at least a bot that checks CrossRef in real time.

Shivani Vaidya

The most dangerous assumption is that AI understands context. It doesn't. It predicts. And prediction without wisdom is a recipe for disaster.

Rubina Jadhav

I’m a lab tech. I don’t write papers. But I run the experiments. And I’ve seen too many protocols come out of these models that just… make no sense. Like suggesting a reflux at 200°C with ether. Ether boils at about 35°C. I just say no. No one else checks. I do. Someone has to.

sumraa hussain

OMG. I just had a dream last night where an AI wrote my entire thesis. It was beautiful. Perfect citations. Flawless methodology. Then I woke up. And remembered… it was all fake. I cried. Not because I lost sleep. Because I realized I’d started trusting it more than my own instincts. Is that sad? Or just the future?

Raji viji

Let’s be real. Half these ‘Sci-LLMs’ are just GPT-4 dressed up in a lab coat and given a DOI generator. I’ve seen papers where the ‘novel compound’ they proposed had a molecular weight of 1200 g/mol and 17 chiral centers. In a one-pot reaction. With a 3-hour workup. Who even *is* this person? And why are journals letting this crap through? It’s not innovation - it’s academic clownery. And we’re all just pretending it’s science.

Rajashree Iyer

There is a quiet violence in outsourcing thought. When we let machines synthesize our hypotheses, we are not accelerating discovery - we are outsourcing our curiosity. The AI does not wonder. It calculates. And wonder - that fragile, irrational, human spark - is the only thing that has ever led us beyond the edge of known data. What are we becoming when we ask a machine to dream for us?

Parth Haz

Agreed with the points on human oversight. At our institute, we’ve implemented a mandatory ‘AI Protocol Review Checklist’ - signed by both the researcher and their PI. It includes: 1) Cross-verify all solvents with safety databases, 2) Validate every citation via CrossRef, 3) Confirm all conditions against at least two peer-reviewed protocols. It adds 20 minutes per experiment. But it prevents disasters. And yes - we’ve caught 12 dangerous suggestions so far. The AI isn’t the enemy. Complacency is.