Tag: model monitoring

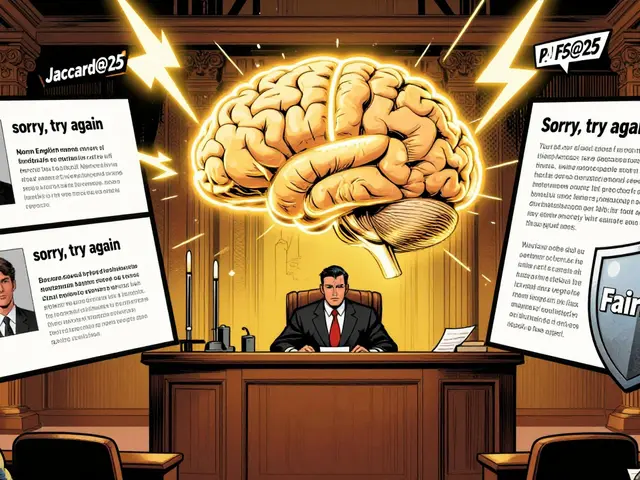

Beyond BLEU and ROUGE: Semantic Metrics for LLM Output Quality

Tamara Weed, Mar 28, 2026

Traditional metrics like BLEU and ROUGE measure word overlap and fail to capture the meaning of LLM output. Learn why semantic metrics like BERTScore and LLM-as-a-Judge provide a more accurate quality assessment for modern AI deployments.
