Tag: AI code vulnerabilities

Tamara Weed, Mar 18, 2026

The Databricks AI red team uncovered critical vulnerabilities in AI-generated game and parser code, revealing how prompt injection and data leakage can bypass traditional security tools. Learn how to protect your systems.

Tamara Weed, Nov 13, 2025

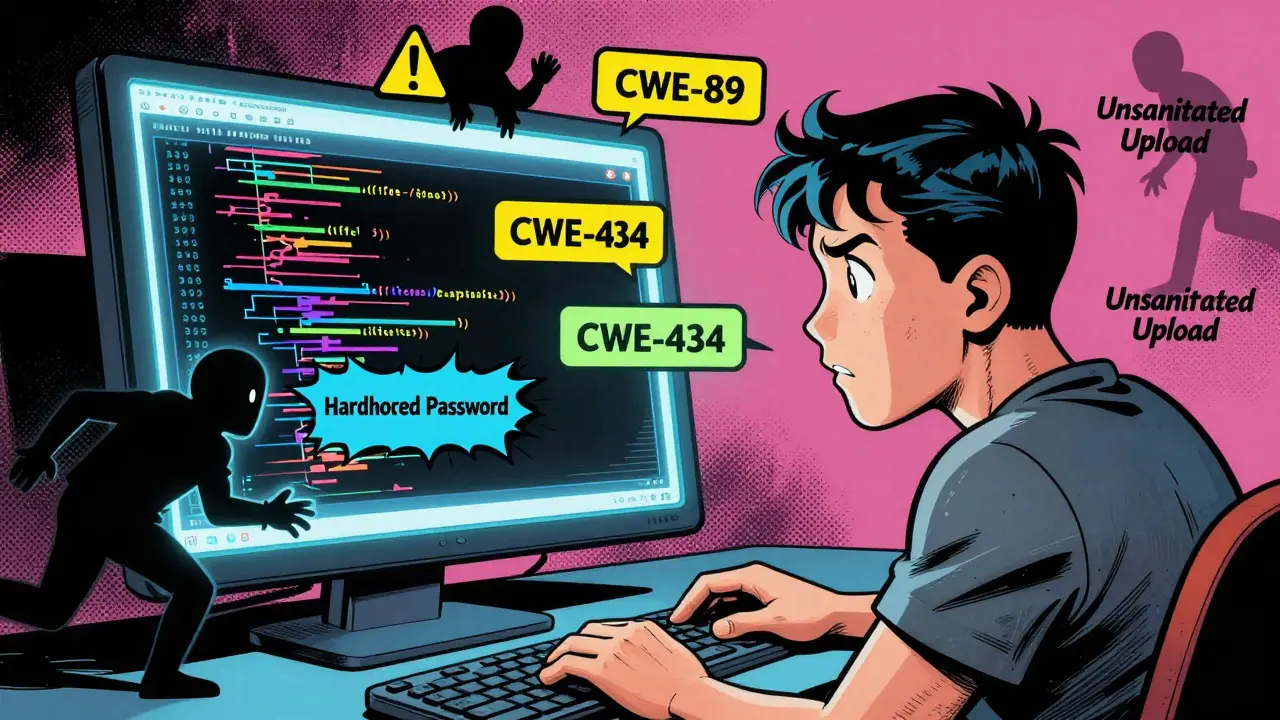

Anti-pattern prompts in vibe coding lead to insecure AI-generated code. Learn the most dangerous types of prompts, why they fail, and how to write secure, specific instructions that prevent vulnerabilities before they happen.
